SC25: HACCing over 500 Petaflops on Frontier

Hello you fine Internet folks,

The Gordon Bell Prize, which recognizes outstanding achievement in high-performance computing applications, is announced every year here at Supercomputing.

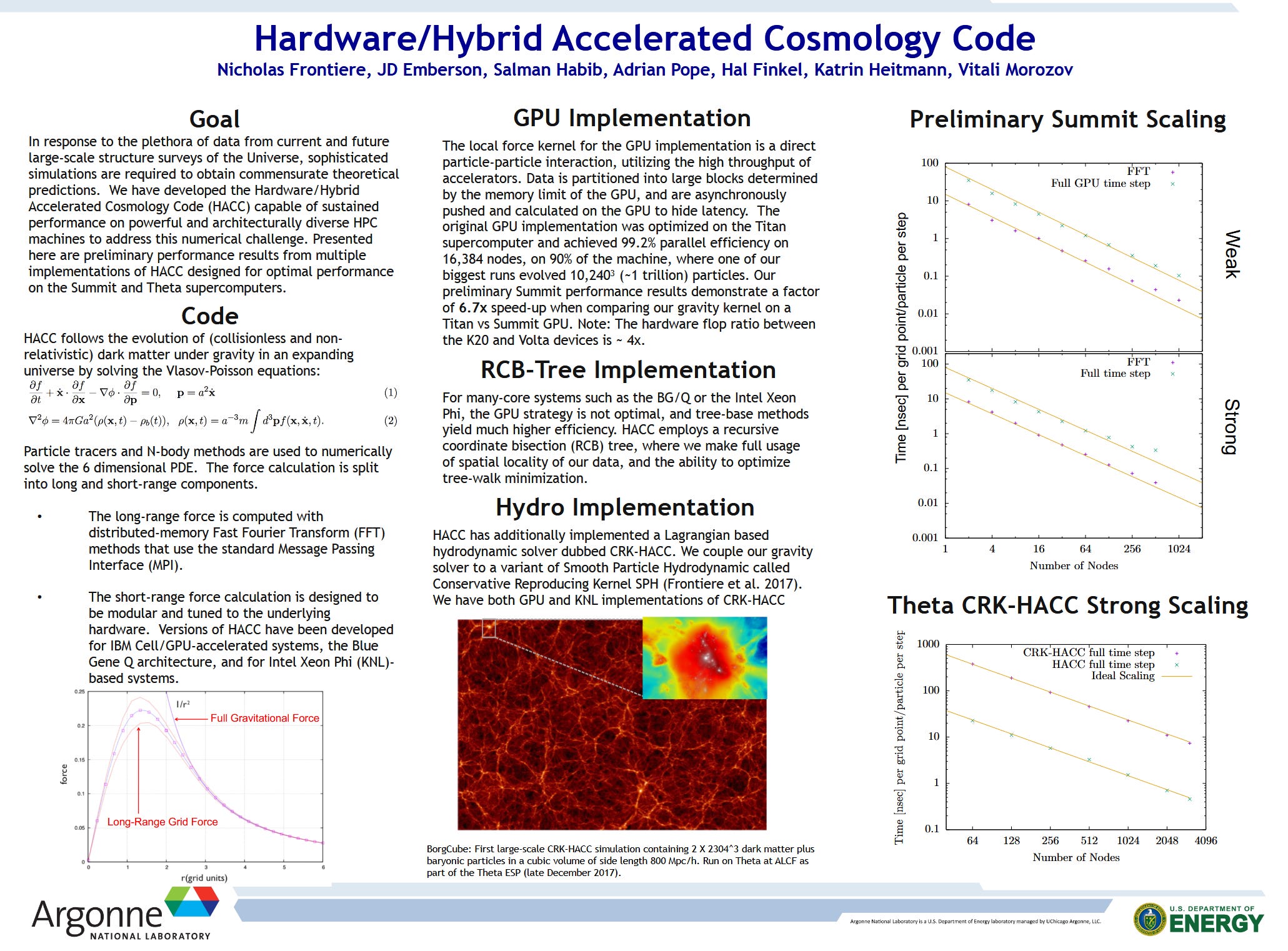

One of this year’s finalists is the largest-ever simulation of the Universe, run on the Frontier supercomputer at the Department of Energy’s Oak Ridge National Laboratory (ORNL). The simulation was run using the Hardware/Hybrid Accelerated Cosmology Code, also known as HACC.

This simulation of the observable universe tracked over 4 trillion particles across 15 billion light years of space. The prior state-of-the-art observable universe simulations only went up to about 250 billion particles, which is roughly a sixteenth of the particle count of this new simulation.

This HACC simulation shows the universe about 10 billion years after the Big Bang.

But not only was this the largest universe simulation ever, the ORNL team also managed to get over 500 Petaflops on nearly 9,000 of Frontier’s 9,402 nodes. As a reminder, Frontier achieves approximately 1,353 Petaflops on High Performance Linpack (HPL). This means that for this simulation the ORNL team got about 37% of Frontier’s HPL Rmax, which is very impressive for a non-synthetic workload.
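For anyone who wants to check the math, here is a quick back-of-the-envelope sketch in Python. It only uses the rounded figures quoted above ("over 500" Petaflops, "nearly 9,000" nodes), so treat the results as approximate bounds rather than measured values. The function and constant names are made up for this illustration.

```python
# Rounded figures from the article; names are illustrative only.
HACC_PFLOPS = 500.0       # "over 500 Petaflops", so this is a lower bound
HPL_RMAX_PFLOPS = 1353.0  # Frontier's approximate HPL Rmax
NODES_USED = 9000         # "nearly 9,000 nodes", so this is an upper bound


def fraction_of_rmax(app_pflops: float, rmax_pflops: float) -> float:
    """Share of the HPL Rmax that the application reached."""
    return app_pflops / rmax_pflops


def tflops_per_node(app_pflops: float, nodes: int) -> float:
    """Average throughput per node in Teraflops (1 Petaflop = 1,000 Teraflops)."""
    return app_pflops * 1000.0 / nodes


if __name__ == "__main__":
    share = fraction_of_rmax(HACC_PFLOPS, HPL_RMAX_PFLOPS)
    per_node = tflops_per_node(HACC_PFLOPS, NODES_USED)
    print(f"Share of HPL Rmax: {share:.1%}")
    print(f"Average per node:  {per_node:.1f} TFLOPS")
```

With these rounded inputs the share works out to roughly 37%, and the per-node average lands in the mid-50s of Teraflops. Since the real run used fewer than 9,000 nodes and exceeded 500 Petaflops, the actual per-node figure would be somewhat higher.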

It is awesome to see the Department of Energy’s (DOE) supercomputers being used for amazing science like this! With the announcement of the Discovery Supercomputer that is due in 2028/2029, I can’t wait to see the science that comes out of that system when it is turned over to the scientific community!

If you like the content, consider heading over to the Patreon or PayPal to toss a few bucks to Chips and Cheese. Also consider joining the Discord.

Sign in to highlight and annotate this article

Conversation starters

Daily AI Digest

Get the top 5 AI stories delivered to your inbox every morning.

Knowledge Map

Connected Articles — Knowledge Graph

This article is connected to other articles through shared AI topics and tags.

More in Analyst News

'Empathetic' Salesforce bots to help those fired by uncaring humans

<h4>I’m sorry, Dave. I can’t give you your job back, but here’s the form you fill out to collect benefits</h4> <p>There’s a joke in Boston that goes: the people in Southie will steal your wallet and help you look for it.…</p>

Oracle cuts jobs across sales, engineering, security

<h4>Big Red declines comment as reports point to layoffs in the thousands</h4> <p>Oracle laid off thousands of employees on Tuesday as it ramps spending on AI infrastructure projects internally and with major technology partners.…</p>

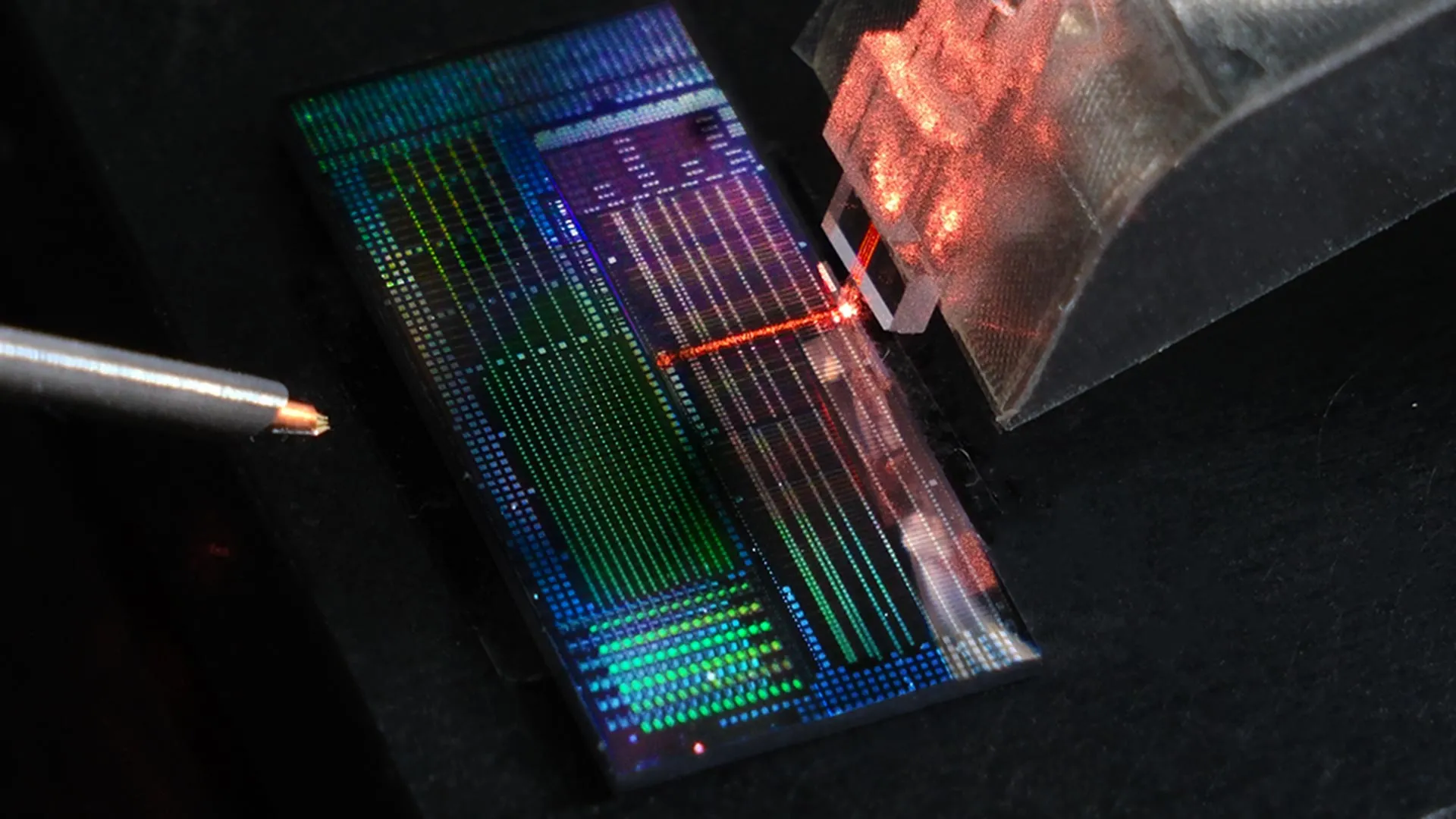

Caltech’s massive 6,100-qubit array brings the quantum future closer

Caltech scientists have built a record-breaking array of 6,100 neutral-atom qubits, a critical step toward powerful error-corrected quantum computers. The qubits maintained long-lasting superposition and exceptional accuracy, even while being moved within the array. This balance of scale and stability points toward the next milestone: linking qubits through entanglement to unlock true quantum computation.

This tiny chip could change the future of quantum computing

A new microchip-sized device could dramatically accelerate the future of quantum computing. It controls laser frequencies with extreme precision while using far less power than today’s bulky systems. Crucially, it’s made with standard chip manufacturing, meaning it can be mass-produced instead of custom-built. This opens the door to quantum machines far larger and more powerful than anything possible today.

Discussion

Sign in to join the discussion

No comments yet — be the first to share your thoughts!