Exclusive | Caltech Researchers Claim Radical Compression of High-Fidelity AI Models - WSJ

<a href="https://news.google.com/rss/articles/CBMiuANBVV95cUxOMjNDNy1fX2U4LUVvOXhtQ2tDQk00cy1GTGJJNHFnOXl0M3VuMkkyQjI4dElrWHdUS292cWdMN3dZMzBzM2FqclFnVmREQzYxejBfdE00bjdwcnVOR19SX0dzcklhN2pKYlNhUmlNXzkxbVFfWG1jeDYySjEyQ3drWDZqZHJfVkJMWHZTNDBKbXlJMFhOTm1MZDh2Sm1NTVlTS3ZGRDNQNkF1U1ptOXM2OHQyek1jVUN0cXY5dHFVaUdxSEYtenlyZnJWc3dCWGFmdkhDSzdkOWNGS2ZweXpmcUtBb2phSXdXaV91enB3NlZ4NzBuTndHUXI4TVRLMW0tNDRYRDRicTdCZ0xxZF9CTV96RDlBWUdFbVdkOU9YYTljSDFPcHR6WDZNbFM4MUpBcGpvMHNGV1FBeDc1WTNHcXdkNjlNRUhQTlB6OXhBZkhkcHI0QXdtY0p0NFpaSlZmUGdLM3NNMEdUbVY4VGFNMVBXZVFseXZfN1FwcTFJSW9FblZPYl82LWY0WUppZ05SSk5YSkV6MkhlckpTS2tFZTd5UVRHNlV4TThaS0dtdDdta1M4QUl6Wg?oc=5" target="_blank">Exclusive | Caltech Researchers Claim Radical Compression of High-Fidelity AI Models</a> <font color="#6f6f6f">WSJ</font>

Could not retrieve the full article text.

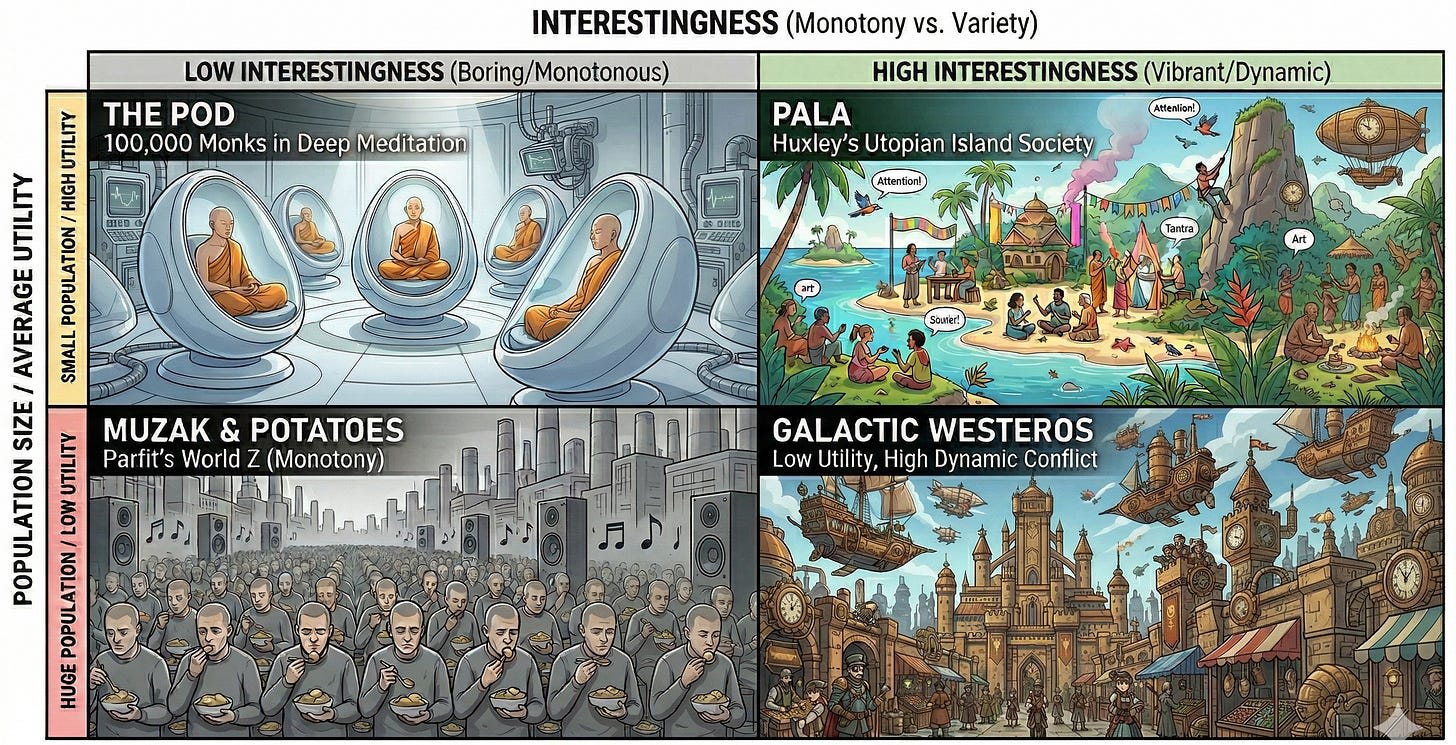

God Mode is Boring: Musings on Interestingness

(Crossposted from my Substack) There is a preference that I think most people have, but which is extremely underdescribed. It is underdescribed because it is not very legible. But I believe that once I point it out, you will be able to easily recognize it. In a sense, I am doing something sinful here. A real description of interestingness should probably be done through song, or dance, or poetry. But I lack every artistic talent that would do the job justice. What I can do is analyze systems and write prose. Hopefully at least the LLMs will appreciate it. I am writing this with some anxiety. If it is a small sin to create an analytical post about interestingness, it is a cardinal sin to create a boring analytical post about interestingness. It is impossible to really cage within language,

Why do I believe preserving structure is enough?

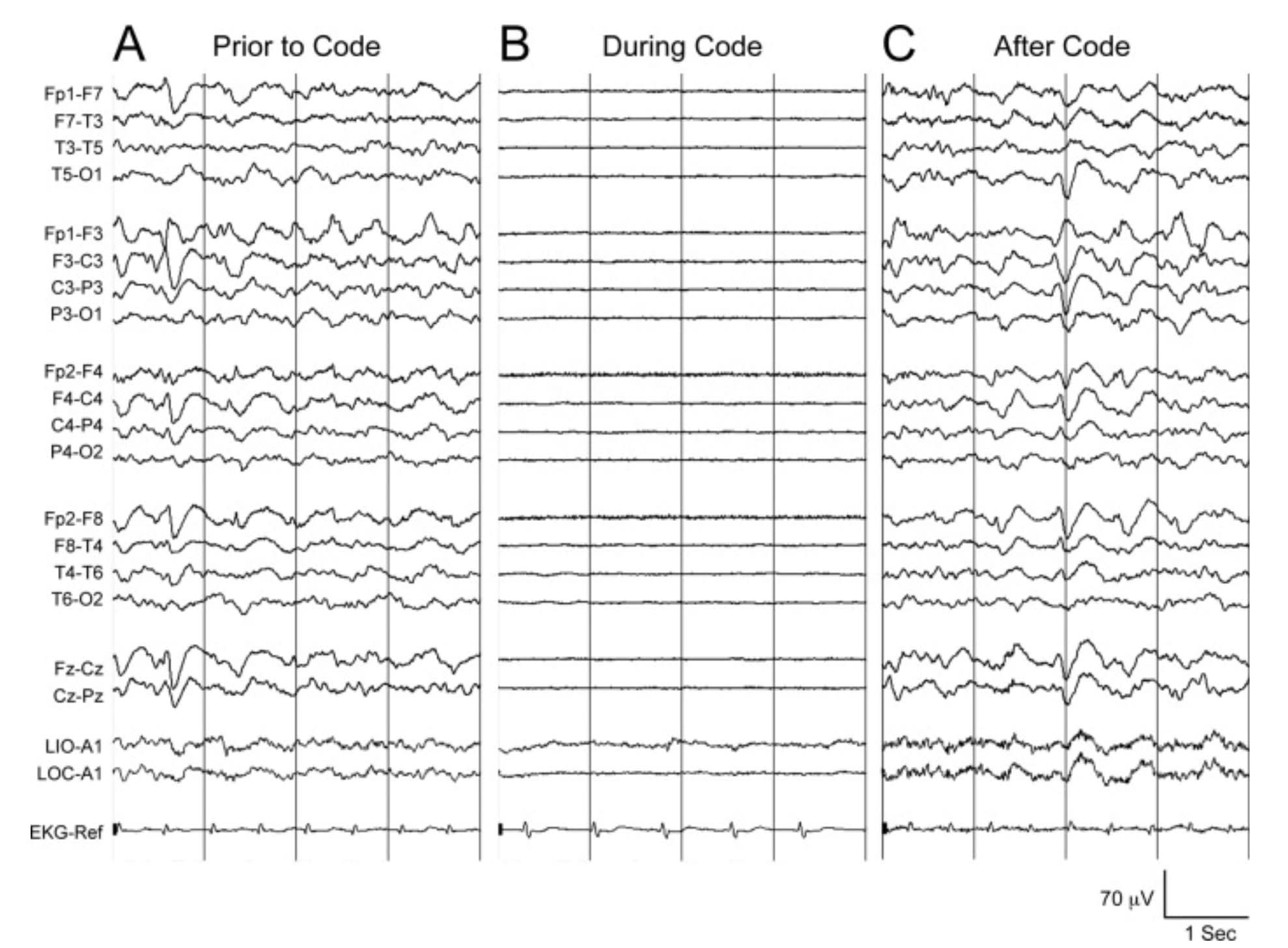

There's a lot even our best neuroscientists don't know about the human brain. How can we have any reasonable hope for preservation given those unknowns? What if there are crucial memory mechanisms that are so poorly understood, we don't even know to check whether our methods preserve them? As it turns out, there's some interesting empirical evidence about the general shape, and limits, of those unknowns. In Ted Chiang's short story Exhalation, a race of aliens have brains which run on compressed air, performing computations and storing information in elaborate arrangements of hinged gold-foil leaves. The leaves are held in position by a constant stream of air flowing through the brain's tubules, encoding alien thoughts and memories. That ephemeral suspension pattern is the whole self; any

More in Models

Interviews with Codex lead Alexander Embiricos, OpenClaw's Peter Steinberger, and others about OpenAI's upcoming superapp that combines ChatGPT with Codex (Alex Heath/Sources)

Alex Heath / Sources: Why Codex is becoming the foundation for everything. Also: Fidji Simo's internal memo about taking a leave of absence.

Asthenosphere

================================================================
ASTHENOSPHERE NPU INFERENCE METRICS

Hardware:
  Device: AMD Phoenix XDNA gen1 (AIE2)
  Tiles: 12/12 (complete transformer pipeline)
  Device ID: /dev/accel/accel0
  Status: ACTIVE
  Reliability: 100%

Pipeline:
  PreScale > Q proj > RoPE > Attention > O proj > Attn ResAdd
  PreScale2 > Gate+SiLU+Up > EltMul > Down > FFN ResAdd > Score Head
  14 ops, zero CPU/GPU during NPU compute

SESSION AVERAGES (7 messages)
  Avg tokens/msg: 64.7
  Avg elapsed/msg: 83ms
  Avg eff tok/s: 3866
  Avg acceptance: 91.8%
  Avg cost/msg: 21.3 Motes

ALL-TIME AVERAGES (7 messages)
  Avg tokens/msg: 64.7
  Avg elapsed/msg: 83ms
  Avg eff tok/s: 3866
  Avg acceptance: 91.8%
  Avg cost/msg: 21.3 Motes

PER-DISPATCH LOG (7 entries)
  Time Tokens Dispatches Elapsed Eff tok/s Accept% Motes
  16:
