Why not to use Cosine Similarity between Label Representations

arXiv:2603.29488v1 Announce Type: new Abstract: Cosine similarity is often used to measure the similarity of vectors. These vectors might be the representations of neural network models. However, it is not guaranteed that cosine similarity of model representations will tell us anything about model behaviour. In this paper we show that when using a softmax classifier, be it an image classifier or an autoregressive language model, measuring the cosine similarity between label representations (called unembeddings in the paper) does not give any information on the probabilities assigned by the model. Specifically, we prove that for any softmax classifier model, given two label representations, it is possible to make another model which gives the same probabilities for all labels and inputs, bu

View PDF HTML (experimental)

Abstract:Cosine similarity is often used to measure the similarity of vectors. These vectors might be the representations of neural network models. However, it is not guaranteed that cosine similarity of model representations will tell us anything about model behaviour. In this paper we show that when using a softmax classifier, be it an image classifier or an autoregressive language model, measuring the cosine similarity between label representations (called unembeddings in the paper) does not give any information on the probabilities assigned by the model. Specifically, we prove that for any softmax classifier model, given two label representations, it is possible to make another model which gives the same probabilities for all labels and inputs, but where the cosine similarity between the representations is now either 1 or -1. We give specific examples of models with very high or low cosine simlarity between representations and show how to we can make equivalent models where the cosine similarity is now -1 or 1. This translation ambiguity can be fixed by centering the label representations, however, labels with representations with low cosine similarity can still have high probability for the same inputs. Fixing the length of the representations still does not give a guarantee that high(or low) cosine similarity will give high(or low) probability to the labels for the same inputs. This means that when working with softmax classifiers, cosine similarity values between label representations should not be used to explain model probabilities.

Subjects:

Machine Learning (cs.LG)

Cite as: arXiv:2603.29488 [cs.LG]

(or arXiv:2603.29488v1 [cs.LG] for this version)

https://doi.org/10.48550/arXiv.2603.29488

arXiv-issued DOI via DataCite (pending registration)

Submission history

From: Beatrix Miranda Ginn Nielsen [view email] [v1] Tue, 31 Mar 2026 09:33:12 UTC (177 KB)

Sign in to highlight and annotate this article

Conversation starters

Daily AI Digest

Get the top 5 AI stories delivered to your inbox every morning.

More about

modellanguage modelneural network

Cooperative Local Differential Privacy: Securing Time Series Data in Distributed Environments

arXiv:2511.09696v2 Announce Type: replace Abstract: The rapid growth of smart devices such as phones, wearables, IoT sensors, and connected vehicles has led to an explosion of continuous time series data that offers valuable insights in healthcare, transportation, and more. However, this surge raises significant privacy concerns, as sensitive patterns can reveal personal details. While traditional differential privacy (DP) relies on trusted servers, local differential privacy (LDP) enables users to perturb their own data. However, traditional LDP methods perturb time series data by adding user-specific noise but exhibit vulnerabilities. For instance, noise applied within fixed time windows can be canceled during aggregation (e.g., averaging), enabling adversaries to infer individual statis

LaSM: Layer-wise Scaling Mechanism for Defending Pop-up Attack on GUI Agents

arXiv:2507.10610v2 Announce Type: replace Abstract: Graphical user interface (GUI) agents built on multimodal large language models (MLLMs) have recently demonstrated strong decision-making abilities in screen-based interaction tasks. However, they remain highly vulnerable to pop-up-based environmental injection attacks, where malicious visual elements divert model attention and lead to unsafe or incorrect actions. Existing defense methods either require costly retraining or perform poorly under inductive interference. In this work, we systematically study how such attacks alter the attention behavior of GUI agents and uncover a layer-wise attention divergence pattern between correct and incorrect outputs. Based on this insight, we propose \textbf{LaSM}, a \textit{Layer-wise Scaling Mechan

Triple-Identity Authentication: The Future of Secure Access

arXiv:2505.02004v4 Announce Type: replace Abstract: In password-based authentication systems, the username fields are essentially unprotected, while the password fields are susceptible to attacks. In this article, we shift our research focus from traditional authentication paradigm to the establishment of gatekeeping mechanisms for the systems. To this end, we introduce a Triple-Identity Authentication scheme. First, we combine each user credential (i.e., login name, login password, and authentication password) with the International Mobile Equipment Identity (IMEI) and International Mobile Subscriber Identity (IMSI) of a user's smartphone to create a combined identity represented as "credential+IMEI+IMSI", defined as a system attribute of the user. Then, we grant the password-based local

Knowledge Map

Connected Articles — Knowledge Graph

This article is connected to other articles through shared AI topics and tags.

More in Models

LaSM: Layer-wise Scaling Mechanism for Defending Pop-up Attack on GUI Agents

arXiv:2507.10610v2 Announce Type: replace Abstract: Graphical user interface (GUI) agents built on multimodal large language models (MLLMs) have recently demonstrated strong decision-making abilities in screen-based interaction tasks. However, they remain highly vulnerable to pop-up-based environmental injection attacks, where malicious visual elements divert model attention and lead to unsafe or incorrect actions. Existing defense methods either require costly retraining or perform poorly under inductive interference. In this work, we systematically study how such attacks alter the attention behavior of GUI agents and uncover a layer-wise attention divergence pattern between correct and incorrect outputs. Based on this insight, we propose \textbf{LaSM}, a \textit{Layer-wise Scaling Mechan

SHIFT: Stochastic Hidden-Trajectory Deflection for Removing Diffusion-based Watermark

arXiv:2603.29742v1 Announce Type: cross Abstract: Diffusion-based watermarking methods embed verifiable marks by manipulating the initial noise or the reverse diffusion trajectory. However, these methods share a critical assumption: verification can succeed only if the diffusion trajectory can be faithfully reconstructed. This reliance on trajectory recovery constitutes a fundamental and exploitable vulnerability. We propose $\underline{\mathbf{S}}$tochastic $\underline{\mathbf{Hi}}$dden-Trajectory De$\underline{\mathbf{f}}$lec$\underline{\mathbf{t}}$ion ($\mathbf{SHIFT}$), a training-free attack that exploits this common weakness across diverse watermarking paradigms. SHIFT leverages stochastic diffusion resampling to deflect the generative trajectory in latent space, making the reconstru

The Manipulate-and-Observe Attack on Quantum Key Distribution

arXiv:2603.29669v1 Announce Type: cross Abstract: Quantum key distribution is often regarded as an unconditionally secure method to exchange a secret key by harnessing fundamental aspects of quantum mechanics. Despite the robustness of key exchange, classical post-processing reveals vulnerabilities that an eavesdropper could target. In particular, many reconciliation protocols correct errors by comparing the parities of subsets between both parties. These communications occur over insecure channels, leaking information that an eavesdropper could exploit. Currently there is no holistic threat model that addresses how parity-leakage during reconciliation might be actively manipulated. In this paper we introduce a new form of attack, namely the Manipulate-and-Observe attack in which the adver

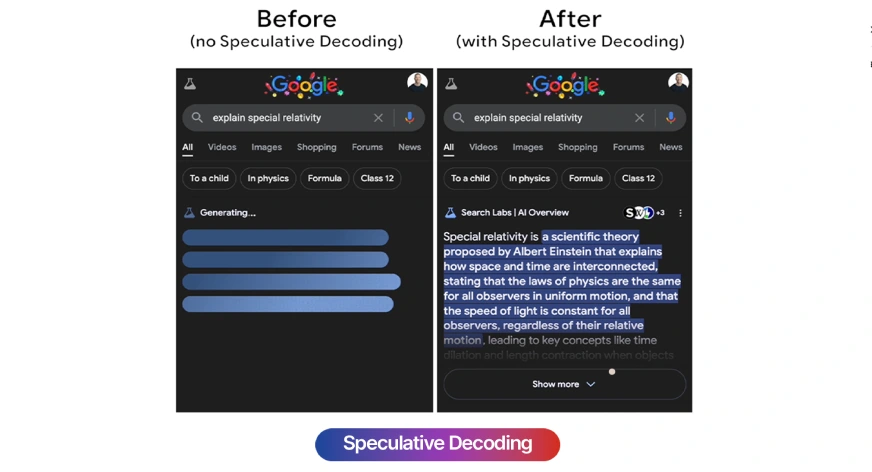

Speculative Decoding: How LLMs Generate Text 3x Faster

You probably use Google on a daily basis, and nowadays, you might have noticed AI-powered search results that compile answers from multiple sources. But you might have wondered how the AI can gather all this information and respond at such blazing speeds, especially when compared to the medium-sized and large models we typically use. Smaller […] The post Speculative Decoding: How LLMs Generate Text 3x Faster appeared first on Analytics Vidhya .

Discussion

Sign in to join the discussion

No comments yet — be the first to share your thoughts!