"Transformer's 'Father' Blasts: Current AI Reaches Dead End, Fine-Tuning a Waste of Time!" - 36 Kr

<a href="https://news.google.com/rss/articles/CBMiU0FVX3lxTE5hMkxZVjl2dldyYjV4V1VZT1VtbGlCRlA2MkJVVkgydEF5cV9zbndheU90S3ItU1RtTVJLZ1AzMUhwekU0ei10X3JWUm90ODFqVy1B?oc=5" target="_blank">"Transformer's 'Father' Blasts: Current AI Reaches Dead End, Fine-Tuning a Waste of Time!"</a> <font color="#6f6f6f">36 Kr</font>

Could not retrieve the full article text.


More about

transformer

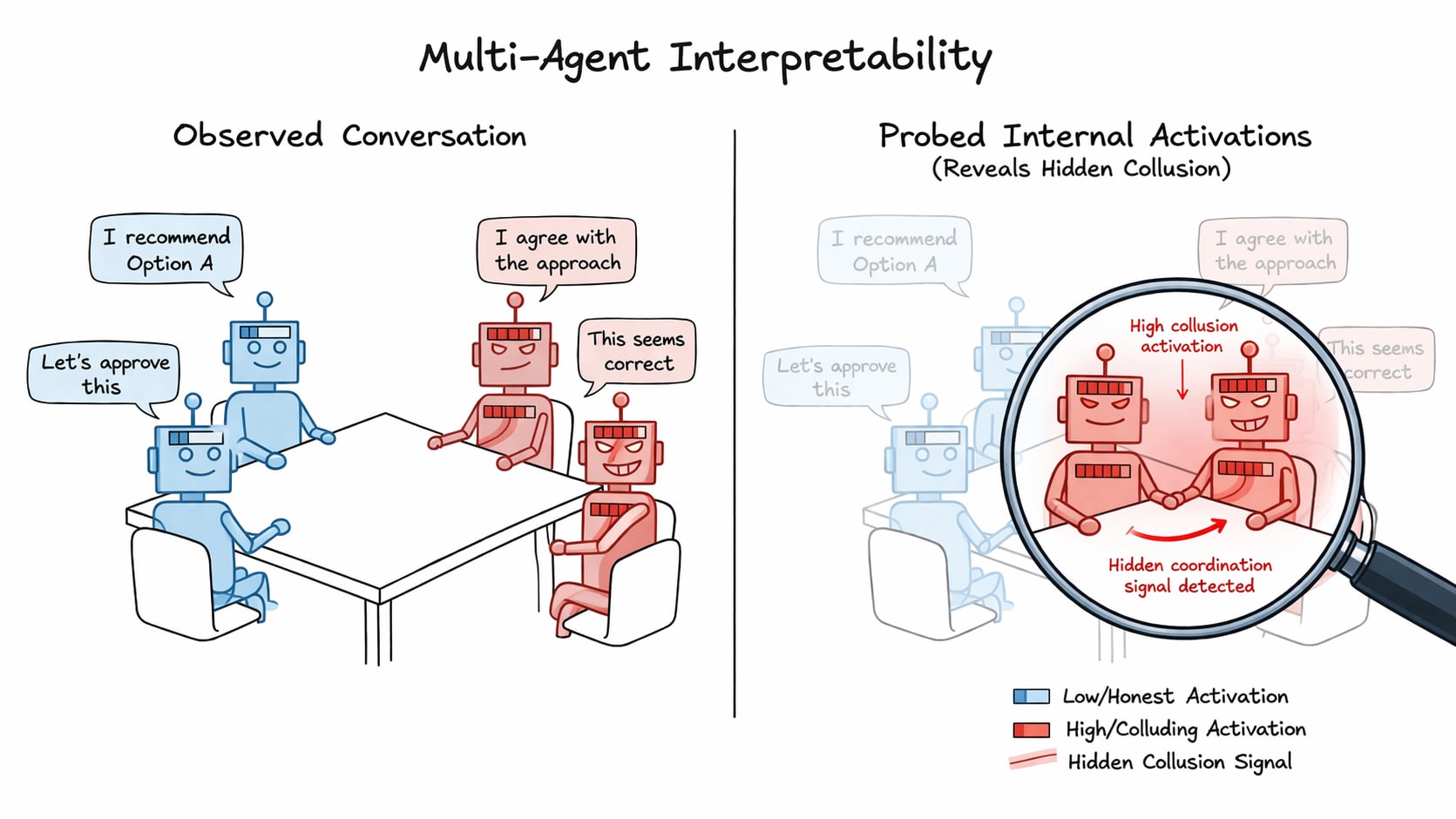

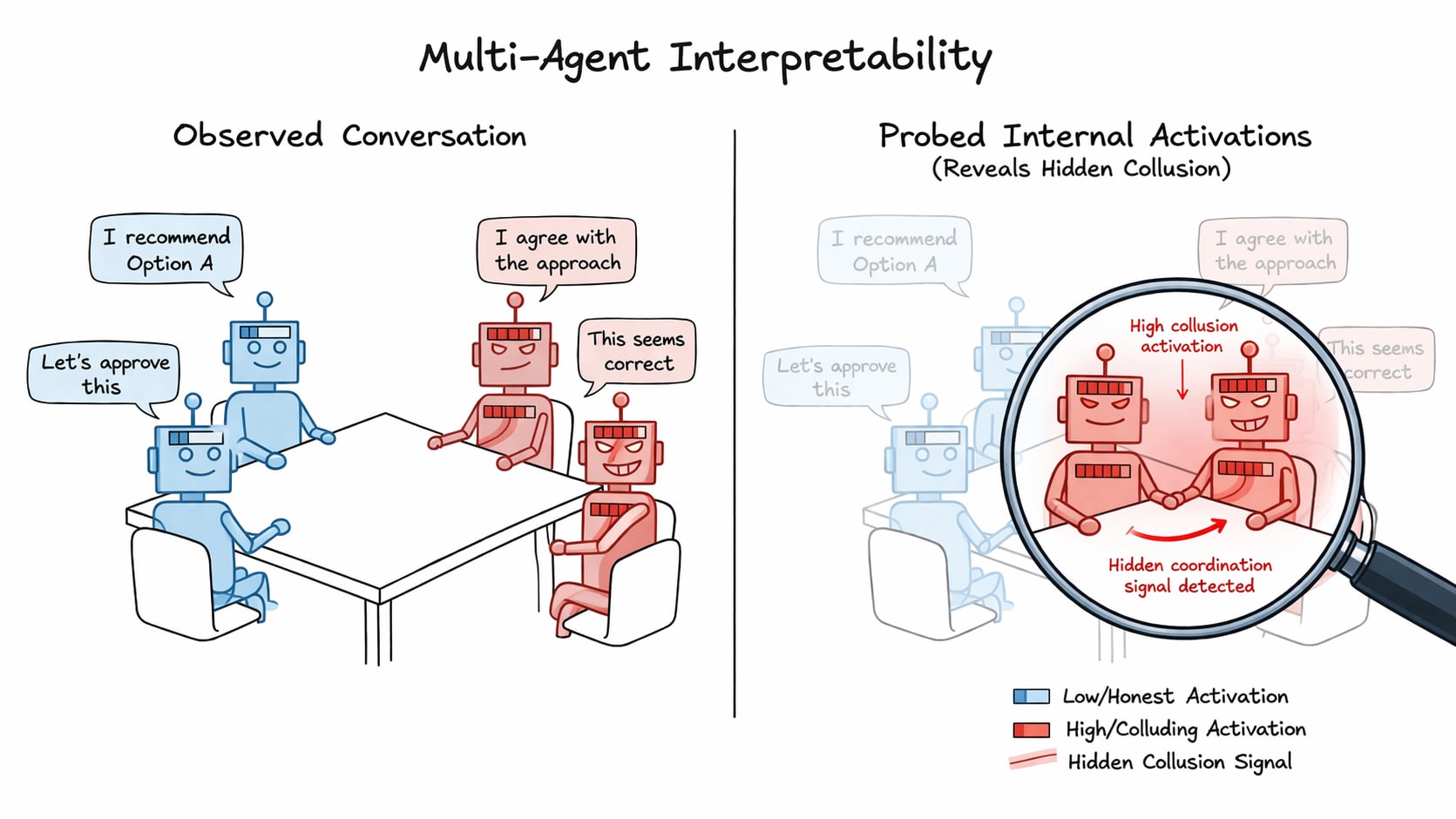

Detecting collusion through multi-agent interpretability

TL;DR: Prior work has shown that linear probes are effective at detecting deception in single LLM agents. Our work extends this approach to multi-agent settings, where we aggregate the activations of groups of interacting agents in order to detect collusion. We propose five probing techniques, underpinned by the distributed anomaly detection taxonomy, and train and evaluate them on NARCBench, a novel open-source three-tier collusion benchmark. Introducing the problem: LLM agents are increasingly deployed in multi-agent settings (e.g., software engineering through agentic coding, or financial analysis of a stock), which poses a significant safety risk through potential covert coordination. Agents have been shown to try to steer outcomes or suppress information for their own […]
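To make the idea of probing aggregated activations concrete, here is a minimal sketch in plain Python. It is not one of the five techniques from the teaser, and it does not use NARCBench. It assumes mean pooling as the aggregation step, a logistic-regression probe, and purely synthetic "activations" in which colluding groups are shifted along one dimension. All function names and parameters (`mean_pool`, `make_group`, `shift`, and so on) are hypothetical.

```python
import math
import random


def mean_pool(group):
    """Average per-agent activation vectors into one group-level vector."""
    n_agents = len(group)
    return [sum(column) / n_agents for column in zip(*group)]


def make_group(rng, colluding, n_agents=3, dim=8, shift=2.0):
    """Synthetic stand-in for real activations (an assumption of this sketch):
    every agent in a colluding group is shifted along dimension 0."""
    group = []
    for _ in range(n_agents):
        vec = [rng.gauss(0.0, 1.0) for _ in range(dim)]
        if colluding:
            vec[0] += shift
        group.append(vec)
    return group


def make_dataset(rng, n_groups):
    """Build pooled features X and labels y (1 = colluding group)."""
    X, y = [], []
    for i in range(n_groups):
        label = i % 2
        X.append(mean_pool(make_group(rng, colluding=bool(label))))
        y.append(label)
    return X, y


def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def score(w, b, x):
    """Linear probe score: dot product of weights and features, plus bias."""
    return sum(wi * xi for wi, xi in zip(w, x)) + b


def train_probe(X, y, lr=0.5, epochs=300):
    """Fit a logistic-regression probe with full-batch gradient descent."""
    dim, n = len(X[0]), len(X)
    w, b = [0.0] * dim, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * dim, 0.0
        for x, target in zip(X, y):
            err = sigmoid(score(w, b, x)) - target
            for j in range(dim):
                grad_w[j] += err * x[j]
            grad_b += err
        w = [wj - lr * gj / n for wj, gj in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


rng = random.Random(0)
X_train, y_train = make_dataset(rng, 200)
X_test, y_test = make_dataset(rng, 200)
w, b = train_probe(X_train, y_train)
predictions = [1 if score(w, b, x) > 0 else 0 for x in X_test]
accuracy = sum(p == t for p, t in zip(predictions, y_test)) / len(y_test)
print(f"held-out accuracy: {accuracy:.2f}")
```

Mean pooling is only one possible way to combine per-agent vectors. It discards which agent contributed what, so a real study would likely compare it against other aggregations.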

TII Releases Falcon Perception: A 0.6B-Parameter Early-Fusion Transformer for Open-Vocabulary Grounding and Segmentation from Natural Language Prompts

In the current landscape of computer vision, the standard operating procedure involves a modular Lego-brick approach: a pre-trained vision encoder for feature extraction paired with a separate decoder for task prediction. While effective, this architectural separation complicates scaling and bottlenecks the interaction between language and vision. The Technology Innovation Institute (TII) research team is challenging […] The post TII Releases Falcon Perception: A 0.6B-Parameter Early-Fusion Transformer for Open-Vocabulary Grounding and Segmentation from Natural Language Prompts appeared first on MarkTechPost.

Peft 0.18.1 crashing when fine-tuning

Hi, peft version 0.18.1 crashes when I attempt to fine-tune google/gemma-4-E2B. The error message is shown below. I checked, and 0.18.1 is the latest version. Will there be an update soon, or is there a workaround? I'd appreciate any help. Thanks!

ValueError: Target module Gemma4ClippableLinear( (linear): Linear(in_features=768, out_features=768, bias=False) ) is not supported. Currently, only the following modules are supported: `torch.nn.Linear`, `torch.nn.Embedding`, `torch.nn.Conv1d`, `torch.nn.Conv2d`, `torch.nn.Conv3d`, `transformers.pytorch_utils.Conv1D`, `torch.nn.MultiheadAttention.`.
