Show HN: Semantic atlas of 188 constitutions in 3D (30k articles, embeddings)

I built this after noticing that existing tools for comparing constitutional law either have steep learning curves or only support keyword search. It combines Gemini embeddings with a UMAP projection so you can navigate 30,828 constitutional articles from 188 countries in 3D and find conceptually related provisions even when the wording differs. Feedback welcome, especially from legal researchers or comparative law folks.

Source and pipeline: github.com/joaoli13/constitutional-map-ai

Comments URL: https://news.ycombinator.com/item?id=47609372

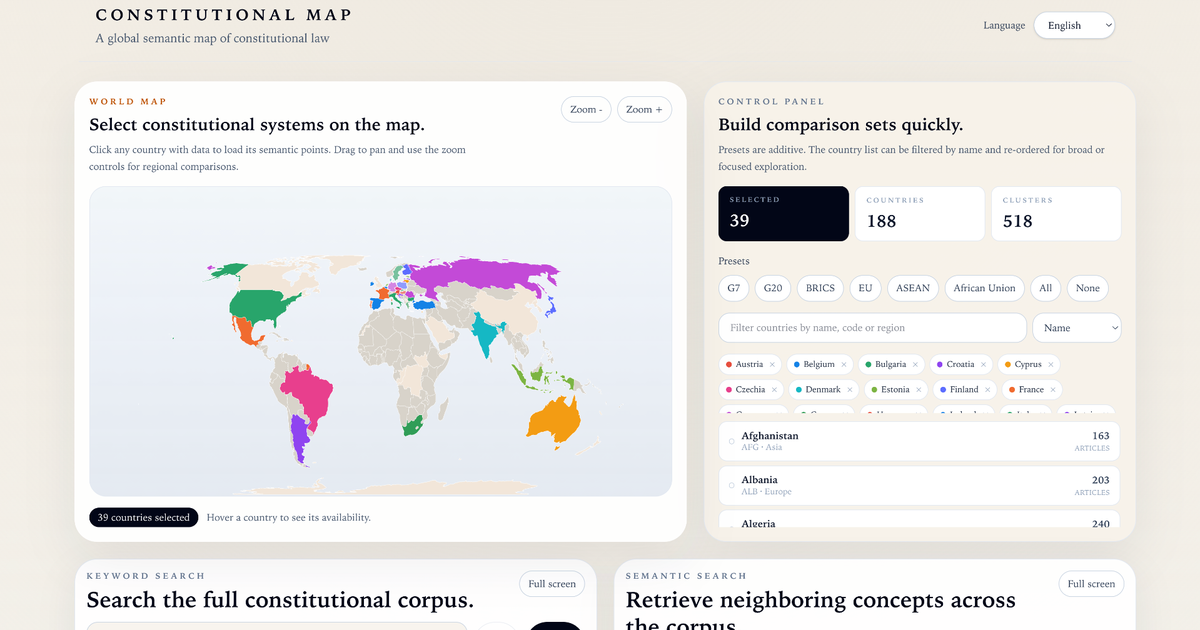

World Map

Select constitutional systems on the map

Click any country with data to load its semantic points. Drag to pan and use the zoom controls for regional comparisons.

No countries selected. Hover a country to see its availability.

Control Panel

Build comparison sets quickly

Presets are additive. The country list can be filtered by name and re-ordered for broad or focused exploration.

Selected: 0 | Countries: 189 | Clusters: 509


UNIFIED SEARCH

KEYWORD SEARCH

Search terms

Select one or more countries to restrict the search.

Run a search to see ranked article matches.

SEMANTIC SEARCH

Search concepts or ideas

Queries may be written in any language, but English usually works best against this corpus.

Select one or more countries to restrict retrieval.

Run a semantic search to inspect semantically nearby constitutional articles.

3D Semantic Space

Navigate the semantic cluster field


Country Stats

Semantic coverage by selected country

Select countries on the map or from the control panel to inspect their metrics.

Reading Guide

How to read the visualization

What You Are Seeing

Each point in the 3D view represents a constitutional article or other meaningful legal unit. Points sit close together because the language of their passages is semantically similar, not because they come from the same country. Use country selection to compare how different constitutions occupy the same semantic terrain.

The map and the country list are selection tools. They decide which constitutions are loaded into the scene. Selecting more countries does not change the geometry of the embedding itself; it changes which parts of that global semantic space you can inspect.
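A minimal sketch of why selection leaves the geometry untouched, assuming the 3D coordinates are computed once for the whole corpus and selection only filters them. The `POINTS` data and the `visible_points` helper are hypothetical and not taken from the project's code.

```python
# Hypothetical toy data: coordinates are fixed for the whole corpus
# before any country is selected.
POINTS = [
    {"country": "BRA", "article": "Art. 1", "xyz": (0.10, 0.20, 0.30)},
    {"country": "BRA", "article": "Art. 2", "xyz": (0.90, 0.80, 0.70)},
    {"country": "PRT", "article": "Art. 1", "xyz": (0.10, 0.25, 0.30)},
    {"country": "JPN", "article": "Art. 9", "xyz": (0.50, 0.50, 0.50)},
]


def visible_points(selected):
    """Return the points whose country is in `selected`.

    Only membership is checked; no coordinate is recomputed.
    """
    return [p for p in POINTS if p["country"] in selected]


brazil_only = visible_points({"BRA"})
brazil_and_portugal = visible_points({"BRA", "PRT"})
```

Adding Portugal to the selection makes one more point visible, while the Brazilian points keep exactly the coordinates they had before.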

Semantic Space

The embedding turns legal text into vectors, and the clustering step groups vectors whose passages tend to discuss related constitutional themes. In country mode, color shows political origin. In cluster mode, color shows thematic neighborhood.

Large, dense clouds usually indicate recurring constitutional ideas such as rights, institutions, emergency powers, elections, or amendment rules. Isolated points often mark unusual provisions, rare wording, or country-specific constitutional design choices.

The platform offers two types of search: keyword search finds literal term occurrences, while semantic search retrieves conceptually nearby passages even without matching terms. Search results highlight regions of the semantic space in the 3D canvas, linking what you read to where it sits.
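The difference between the two search types can be illustrated with a toy example. This is a sketch under stated assumptions: the three-dimensional vectors are invented stand-ins for real embeddings, cosine similarity is assumed as the ranking measure (the page does not say which one is used), and `keyword_search` and `semantic_search` are hypothetical helper names.

```python
import math

# Hypothetical toy corpus: (text, embedding). The vectors are invented;
# real embeddings have many more dimensions.
ARTICLES = [
    ("Everyone has the right to freedom of expression.", (0.9, 0.1, 0.0)),
    ("No one may be censored for voicing an opinion.", (0.8, 0.2, 0.1)),
    ("Parliament is elected every four years.", (0.0, 0.1, 0.9)),
]


def keyword_search(term):
    """Return texts that literally contain `term` (case-insensitive)."""
    term = term.lower()
    return [text for text, _ in ARTICLES if term in text.lower()]


def cosine(a, b):
    """Cosine similarity between two equal-length, non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def semantic_search(query_vec, top_k=2):
    """Return the `top_k` texts whose embeddings are closest to `query_vec`."""
    ranked = sorted(ARTICLES, key=lambda item: cosine(query_vec, item[1]), reverse=True)
    return [text for text, _ in ranked[:top_k]]


literal_hits = keyword_search("expression")
# Assumed embedding of a query about free speech.
concept_hits = semantic_search((1.0, 0.0, 0.0))
```

Here `literal_hits` contains only the article that uses the word "expression", while `concept_hits` also returns the censorship article, which shares no keyword with the query.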

How to Read the Metrics

In the country statistics, Coverage measures how much of the global cluster landscape a constitution reaches. Entropy measures how evenly its segments are distributed across that landscape: high entropy suggests a broader semantic spread, while low entropy suggests concentration in fewer themes.
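One plausible way to compute these two metrics is sketched below. The exact formulas are assumptions, not taken from the project: coverage is taken as the share of global clusters a country touches, entropy as the Shannon entropy (in bits) of its segments across clusters, and segments labelled -1 are assumed to be excluded from both.

```python
import math
from collections import Counter


def coverage(labels, total_clusters):
    """Fraction of the global clusters reached by one country's segments.

    Assumption: segments labelled -1 (unclustered) do not count.
    """
    reached = {c for c in labels if c != -1}
    return len(reached) / total_clusters


def entropy(labels):
    """Shannon entropy, in bits, of a country's segments across clusters.

    Assumption: segments labelled -1 (unclustered) are ignored.
    """
    counts = Counter(c for c in labels if c != -1)
    total = sum(counts.values())
    if total == 0:
        return 0.0
    # Adding 0.0 turns a possible -0.0 into 0.0 for a single-cluster country.
    return -sum((k / total) * math.log2(k / total) for k in counts.values()) + 0.0


# Hypothetical cluster labels for two countries.
spread_out = [0, 1, 2, 3]      # one segment in each of four clusters
concentrated = [0, 0, 0, 0]    # every segment in the same cluster
```

With eight global clusters, `coverage(spread_out, 8)` is 0.5. `entropy(spread_out)` is 2.0 bits and `entropy(concentrated)` is 0.0, which matches the reading that high entropy means a broader spread and low entropy means concentration in fewer themes.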

In the article detail panel, Global Cluster is the identifier of the thematic group assigned to that segment in the worldwide clustering. When the value is -1, it means the segment was left outside the defined thematic groupings in that global step. Probability indicates how confidently the clustering model placed that segment in that group: higher values mean a cleaner fit, while lower values usually mark more ambiguous or boundary cases.
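A small sketch of how the two fields might be read together. The -1 convention for unassigned points, paired with a membership probability, matches density-based clusterers such as HDBSCAN, but this page does not name the algorithm, so treat that as an assumption. The 0.5 threshold and the `describe_segment` helper are hypothetical.

```python
def describe_segment(global_cluster, probability, threshold=0.5):
    """Classify a segment from its Global Cluster and Probability fields.

    `threshold` is an arbitrary illustrative cut-off, not a value
    taken from the project.
    """
    if global_cluster == -1:
        # Left outside every thematic group in the global clustering step.
        return "unclustered"
    if probability < threshold:
        # Assigned to a group, but with an ambiguous fit.
        return "boundary"
    return "core"


# Hypothetical (global_cluster, probability) pairs.
examples = [(42, 0.97), (42, 0.31), (-1, 0.0)]
summary = [describe_segment(c, p) for c, p in examples]
```

For these three segments, `summary` is `["core", "boundary", "unclustered"]`.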
