Copilot SDK in public preview

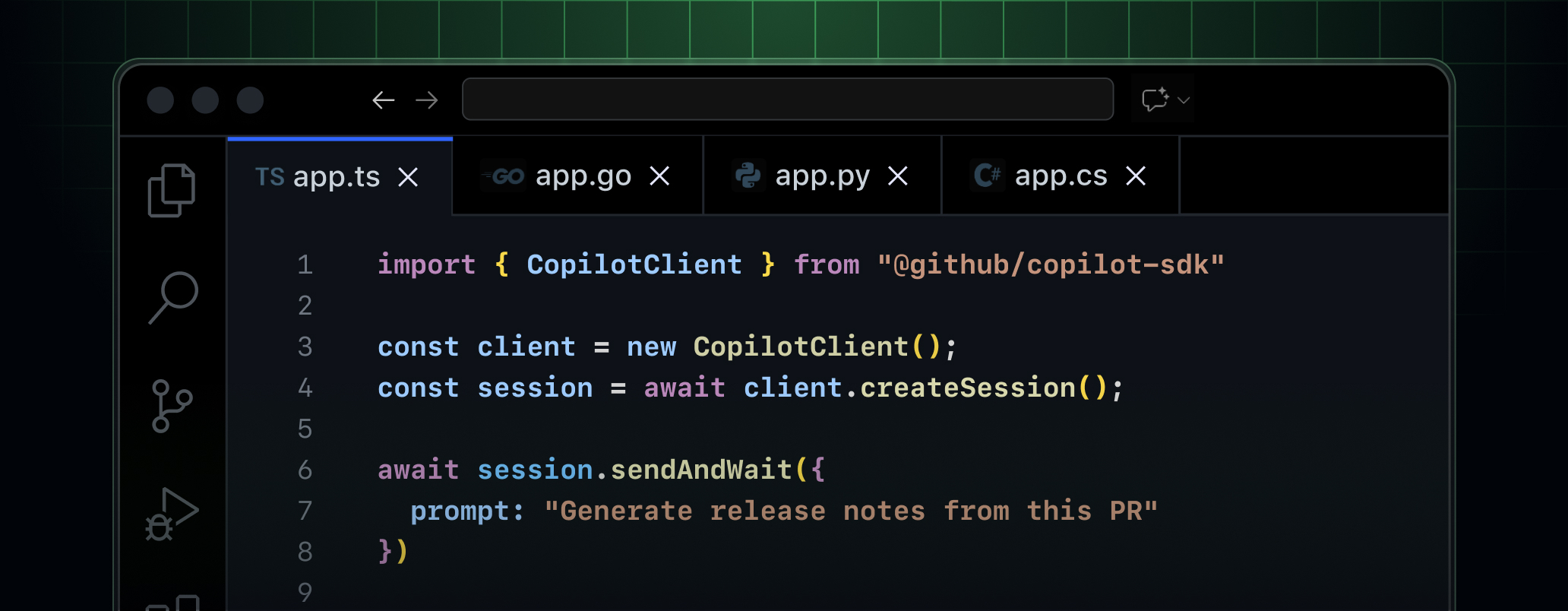

The GitHub Copilot SDK is now available in public preview. This gives you the building blocks to embed Copilot’s agentic capabilities directly into your own applications, workflows, and platform services.

The Copilot SDK exposes the same production-tested agent runtime that powers GitHub Copilot cloud agent and Copilot CLI. Instead of building your own AI orchestration layer, you get tool invocation, streaming, file operations, and multi-turn sessions out of the box.

Now available in five languages

Build with the SDK in your language of choice:

- Node.js / TypeScript: npm install @github/copilot-sdk
- Python: pip install github-copilot-sdk
- Go: go get github.com/github/copilot-sdk/go
- .NET: dotnet add package GitHub.Copilot.SDK
- Java: Newly available to install via Maven.

Key capabilities

- Custom tools and agents: Define domain-specific tools with handlers and let the agent decide when to invoke them. Build custom agents with tailored instructions for your use case.
- Fine-grained system prompt customization: Customize sections of the Copilot system prompt using replace, append, prepend, or dynamic transform callbacks. There’s no need to rewrite the entire prompt.
- Streaming and real-time responses: Stream responses token-by-token for responsive user experiences.
- Blob attachments: Send images, screenshots, and binary data inline without writing to disk.
- OpenTelemetry support: Built-in distributed tracing with W3C trace context propagation across all SDKs.
- Permission framework: Gate sensitive operations with approval handlers, or mark read-only tools to skip permissions entirely.
- Bring Your Own Key (BYOK): Use your own API keys for OpenAI, Azure AI Foundry, or Anthropic.
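
To make the section-level prompt customization concrete, here is a minimal sketch of how replace, append, prepend, and transform overrides could be applied to named prompt sections. It does not use the Copilot SDK's actual API: `SectionOverride`, `apply_override`, `customize_prompt`, and the section names are all hypothetical and exist only to illustrate the idea, so check the getting started guide for the real interface.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Optional

# NOTE: every name here is hypothetical. This is an illustration of the
# four override modes, not the Copilot SDK's real API.


@dataclass
class SectionOverride:
    mode: str  # one of "replace", "append", "prepend", "transform"
    text: str = ""
    transform: Optional[Callable[[str], str]] = None


def apply_override(original: str, override: SectionOverride) -> str:
    """Return the section text after applying a single override."""
    if override.mode == "replace":
        return override.text
    if override.mode == "append":
        return f"{original}\n{override.text}"
    if override.mode == "prepend":
        return f"{override.text}\n{original}"
    if override.mode == "transform":
        if override.transform is None:
            raise ValueError("transform mode requires a callback")
        return override.transform(original)
    raise ValueError(f"unknown mode: {override.mode}")


def customize_prompt(
    sections: Dict[str, str],
    overrides: Dict[str, SectionOverride],
) -> Dict[str, str]:
    """Apply overrides per section; untouched sections pass through as-is."""
    unknown = set(overrides) - set(sections)
    if unknown:
        raise KeyError(f"unknown sections: {sorted(unknown)}")
    return {
        name: apply_override(text, overrides[name]) if name in overrides else text
        for name, text in sections.items()
    }


# Example: three assumed sections, each customized a different way.
base_sections = {
    "tone": "Be concise.",
    "safety": "Ask before deleting files.",
    "tools": "Use tools when helpful.",
}
customized = customize_prompt(
    base_sections,
    {
        "tone": SectionOverride("replace", "Be friendly."),
        "safety": SectionOverride("append", "Never push to main."),
        "tools": SectionOverride("transform", transform=str.upper),
    },
)
```

The point of the design is that only the sections you name change, while the rest of the prompt passes through unmodified, which is why there is no need to rewrite the entire prompt.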

Get started

The Copilot SDK is available to both Copilot subscribers and non-subscribers, including Copilot Free for personal use and BYOK for enterprises. For Copilot subscribers, each prompt counts toward your premium request quota.

Check out the getting started guide to start building and join the discussion in the GitHub Community.

GitHub Copilot Changelog

https://github.blog/changelog/2026-04-02-copilot-sdk-in-public-preview
