Two-dimensional geometric template diffusion for boosting single-sequence protein structure prediction

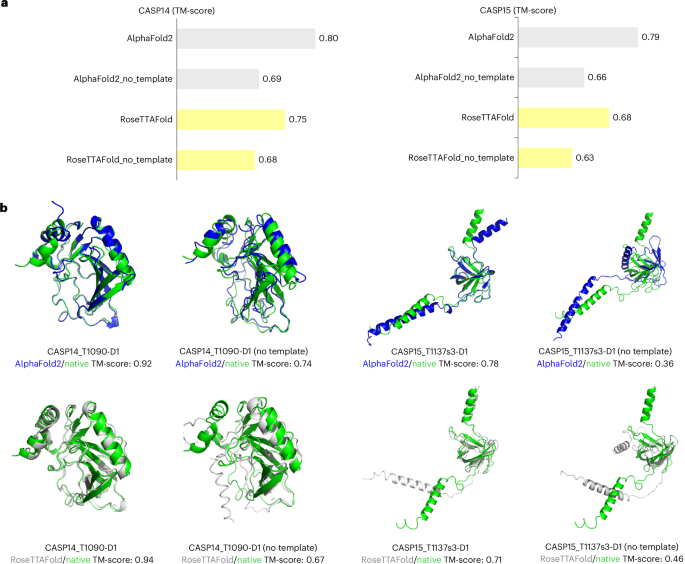

Nature Machine Intelligence, Published online: 01 April 2026; doi:10.1038/s42256-026-01210-2

Wang et al. introduce TDFold, which reformulates 3D protein structure prediction as a 2D image-like diffusion task. Its geometric template diffusion framework offers greater accuracy, speed and efficiency than leading models.
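The summary does not say which 2D geometric maps TDFold uses or how its diffusion is parameterized. As a hedged illustration of the general idea only, the sketch below assumes the simplest image-like encoding of a 3D structure: a pairwise distance map built from one 3D point per residue (for example Cα atoms). It then applies the standard closed-form forward-noising step of a denoising diffusion probabilistic model to that map. The function names `distance_map` and `forward_noise`, the toy coordinates and the choice of a distance map are all assumptions for illustration, not TDFold's implementation.

```python
import math
import random


def distance_map(coords):
    """Return the L x L matrix of pairwise Euclidean distances between L 3D points.

    The result is a 2D, image-like array that does not change under a global
    rotation or translation of the structure.
    """
    n = len(coords)
    return [[math.dist(coords[i], coords[j]) for j in range(n)] for i in range(n)]


def forward_noise(x0, alpha_bar, rng):
    """Sample x_t = sqrt(alpha_bar) * x0 + sqrt(1 - alpha_bar) * eps (DDPM forward step).

    Gaussian noise is drawn once per upper-triangle entry and mirrored, so a
    symmetric input map stays symmetric after noising.
    """
    n = len(x0)
    a, b = math.sqrt(alpha_bar), math.sqrt(1.0 - alpha_bar)
    xt = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i, n):
            eps = rng.gauss(0.0, 1.0)
            xt[i][j] = a * x0[i][j] + b * eps
            xt[j][i] = a * x0[j][i] + b * eps
    return xt


# Hypothetical toy chain: 4 residues spaced 3.8 Å apart along the x axis.
coords = [(3.8 * k, 0.0, 0.0) for k in range(4)]
d = distance_map(coords)

# Rotating the chain 90 degrees about z, (x, y, z) -> (-y, x, z), leaves the map unchanged.
rotated = [(-y, x, z) for (x, y, z) in coords]
d_rot = distance_map(rotated)

rng = random.Random(0)
noisy = forward_noise(d, alpha_bar=0.5, rng=rng)  # partially noised "image"
clean = forward_noise(d, alpha_bar=1.0, rng=rng)  # alpha_bar = 1 adds no noise
```

Working on an L x L map rather than raw coordinates is what makes image-style diffusion machinery applicable; a full method would also need a learned denoiser and a step that recovers 3D coordinates from the denoised maps, neither of which is shown here.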

