The Vibe Coding Playbook: A Jungle Guide for the Context-Driven Developer

The Tuxedo in the Jungle

We all enter the Vibe Coding domain from different places, carrying different luggage for this new journey. Some are well prepared and start the journey fully equipped. It’s not their first rodeo, as they are seasoned code masters and this is just a new tool.

Others travel lightweight and haven’t packed in advance. Little or no prior programming skills at all. Scarce knowledge of important “side” topics that are pillars for the coding world. So, their journey feels more like making their way through a vast jungle - dressed in a tuxedo, no machete, just a small nail clipper for all the thick bushes ahead.

I find myself somewhere in the middle. I started this adventure (vibe coding, or as I prefer to call it, context coding) fairly recently. I left my tuxedo in the wardrobe, but somehow found that a simple t-shirt, shorts and flip flops are not the best jungle attire either.

From FAQ.md to a Structured Playbook

After watching hundreds of YouTube tutorials on this subject, I still had (and have) millions of questions and lots of blind spots. One day I just sat down and spontaneously wrote all my questions down in a simple text file.

Then it turned into a FAQ.md file. Then came the insight to feed that file to the top 10 LLM models, collect their answers and save them as .md files in a separate directory.

That is how this vague idea turned into a vibe coding project: I asked OpenCode to analyze all these .md files and produce structured content for vibe coding newbies like myself.

Perhaps the title - Vibe Coding Playbook - feels too grandiose for such a modest project.

But this is a work in progress, and I really do hope that this little repo of mine can one day be a useful pit stop for anyone who needs some help on this long and exhausting drive.

My bigger hope is that other people will help make that come true, with more content or at least with competent feedback, comments and/or ideas.

What’s Inside the Repo?

So far the repo is really humble: a brief intro to Vibe Coding as a term, followed by 11 sections with short explanations of different aspects of the subject. All of it is based on the FAQ.md file and the answers from the top 10 LLM models.

Recently I also created a Roadmap section with plans for future content.

Using OpenCode as my coding partner, I did some basic styling, polished the content and added basic examples here and there. As I said, it is still more of a draft or beta version than a finished work.

So far, the project has taken 6 days and 39 commits. But I still consider it...

A Work in Progress

Vibe (context) coding itself is a real jungle whose trees, bushes and lianas change colors, and even places, literally every day. So edits and adjustments are inevitable; of that I am sure.

Check out the repo here: https://github.com/plamen5rov/vibe-coding-playbook

I really can’t wait to read your reactions, observations, assessments, comments and even criticism.

There are at least two things that are far worse than criticism: silence and indifference.

I’d love to hear your thoughts.

Do you feel like you’re hacking through the jungle with a nail clipper, or have you found your "machete" yet?

Non-hateful comments are highly welcome.

DEV Community

https://dev.to/plamen5rov/the-vibe-coding-playbook-a-jungle-guide-for-the-context-driven-developer-3jnp

Conversation starters

Daily AI Digest

Get the top 5 AI stories delivered to your inbox every morning.

More about

modelversionmillion

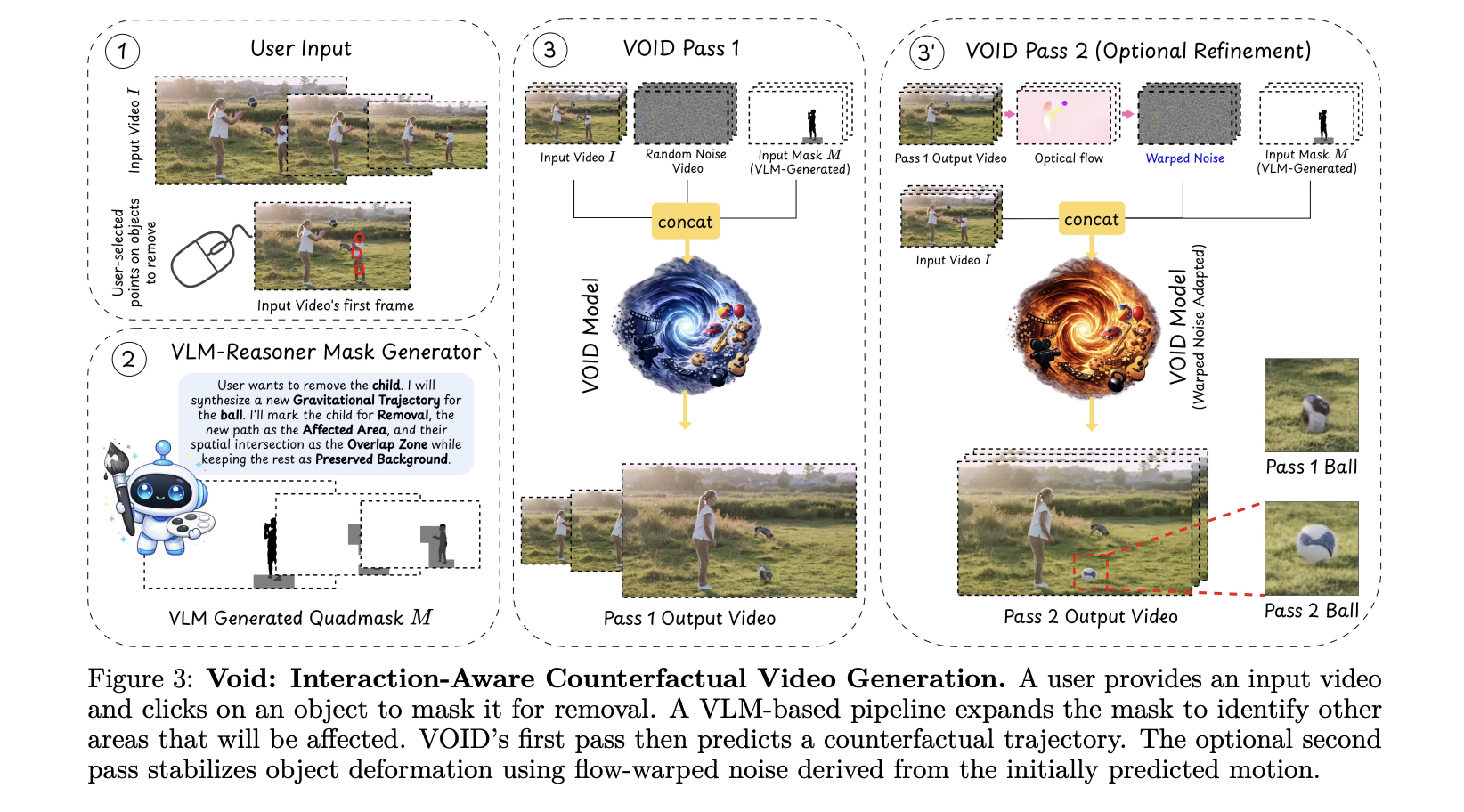

Netflix AI Team Just Open-Sourced VOID: an AI Model That Erases Objects From Videos — Physics and All

Video editing has always had a dirty secret: removing an object from footage is easy; making the scene look like it was never there is brutally hard. Take out a person holding a guitar, and you re left with a floating instrument that defies gravity. Hollywood VFX teams spend weeks fixing exactly this kind of problem. [ ] The post Netflix AI Team Just Open-Sourced VOID: an AI Model That Erases Objects From Videos — Physics and All appeared first on MarkTechPost .

Sharing Two Open-Source Projects for Local AI & Secure LLM Access 🚀

Hey everyone! I’m finally jumping into the dev.to community. To kick things off, I wanted to share two tools I’ve been developing at the University of Jaén that tackle two common headaches in the AI space: running out of VRAM, and keeping your API chats truly private. 🦥 Quansloth: TurboQuant Local AI Server The Problem: Standard LLM inference hits a "Memory Wall" with long documents. As context grows, your GPU runs out of memory (OOM) and crashes. The Solution: Quansloth is a fully private, air-gapped AI server that brings elite KV cache compression to consumer hardware. By bridging a Gradio Python frontend with a highly optimized llama.cpp CUDA backend, it prevents GPU crashes and lets you run massive contexts on a budget. Key Features: 75% VRAM Savings: Based on Google's TurboQuant (ICL

Knowledge Map

Connected Articles — Knowledge Graph

This article is connected to other articles through shared AI topics and tags.

More in Models

b8661

llama: add custom newline split for Gemma 4 ( #21406 ) macOS/iOS: macOS Apple Silicon (arm64) macOS Intel (x64) iOS XCFramework Linux: Ubuntu x64 (CPU) Ubuntu arm64 (CPU) Ubuntu s390x (CPU) Ubuntu x64 (Vulkan) Ubuntu arm64 (Vulkan) Ubuntu x64 (ROCm 7.2) Ubuntu x64 (OpenVINO) Windows: Windows x64 (CPU) Windows arm64 (CPU) Windows x64 (CUDA 12) - CUDA 12.4 DLLs Windows x64 (CUDA 13) - CUDA 13.1 DLLs Windows x64 (Vulkan) Windows x64 (SYCL) Windows x64 (HIP) openEuler: openEuler x86 (310p) openEuler x86 (910b, ACL Graph) openEuler aarch64 (310p) openEuler aarch64 (910b, ACL Graph)

Discussion

Sign in to join the discussion

No comments yet — be the first to share your thoughts!