The Specialist’s Dilemma Is Breaking Scientific AI

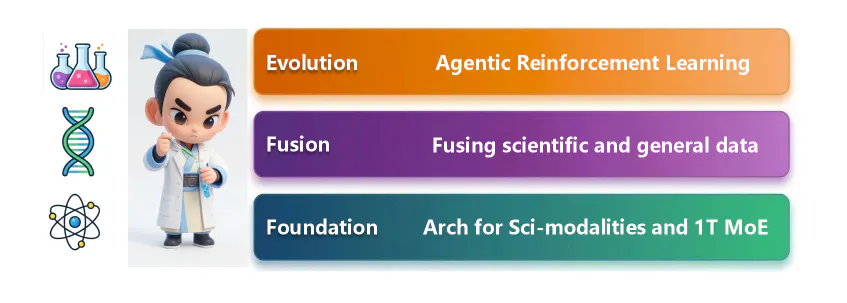

Intern-S1-Pro challenges the idea that AI must choose between general reasoning and scientific specialization across multiple domains.

by aimodels44 (@aimodels44)

Among other things, launching AIModels.fyi ... Find the right AI model for your project - https://aimodels.fyi

April 3rd, 2026

TOPICS

#machine-learning #artificial-intelligence #software-architecture #software-engineering #infrastructure #data-science #performance #scientific-ai-models #specialist-vs-generalist-ai
