The Necessity of a Holistic Safety Evaluation Framework for AI-Based Automation Features

Abstract: The intersection of Safety of Intended Functionality (SOTIF) and Functional Safety (FuSa) analysis of driving automation features has traditionally excluded Quality Management (QM) components (components that have no ASIL requirements allocated from vehicle-level HARA) from rigorous safety impact evaluations. While QM components are not typically classified as safety-relevant, recent developments in artificial intelligence (AI) integration reveal that such components can contribute to SOTIF-related hazardous risks. Compliance with emerging AI safety standards, such as ISO/PAS 8800, necessitates re-evaluating safety considerations for these components. This paper examines the necessity of conducting holistic safety analysis and risk assessment on AI components, emphasizing their potential to introduce hazards with the capacity to violate risk acceptance criteria when deployed in safety-critical driving systems, particularly in perception algorithms. Using case studies, we demonstrate how deficiencies in AI-driven perception systems can emerge even in QM-classified components, leading to unintended functional behaviors with critical safety implications. By bridging theoretical analysis with practical examples, this paper argues for the adoption of comprehensive FuSa, SOTIF, and AI standards-driven methodologies to identify and mitigate risks in AI components. The findings demonstrate the importance of revising existing safety frameworks to address the evolving challenges posed by AI, ensuring comprehensive safety assurance across all component classifications spanning multiple safety standards.

Subjects: Software Engineering (cs.SE); Systems and Control (eess.SY)

Cite as: arXiv:2602.05157 [cs.SE]

(or arXiv:2602.05157v2 [cs.SE] for this version)

https://doi.org/10.48550/arXiv.2602.05157

arXiv-issued DOI via DataCite

Submission history

From: Alireza Abbaspour

[v1] Thu, 5 Feb 2026 00:22:24 UTC (384 KB)

[v2] Mon, 30 Mar 2026 20:12:49 UTC (384 KB)


Mean field sequence: an introduction

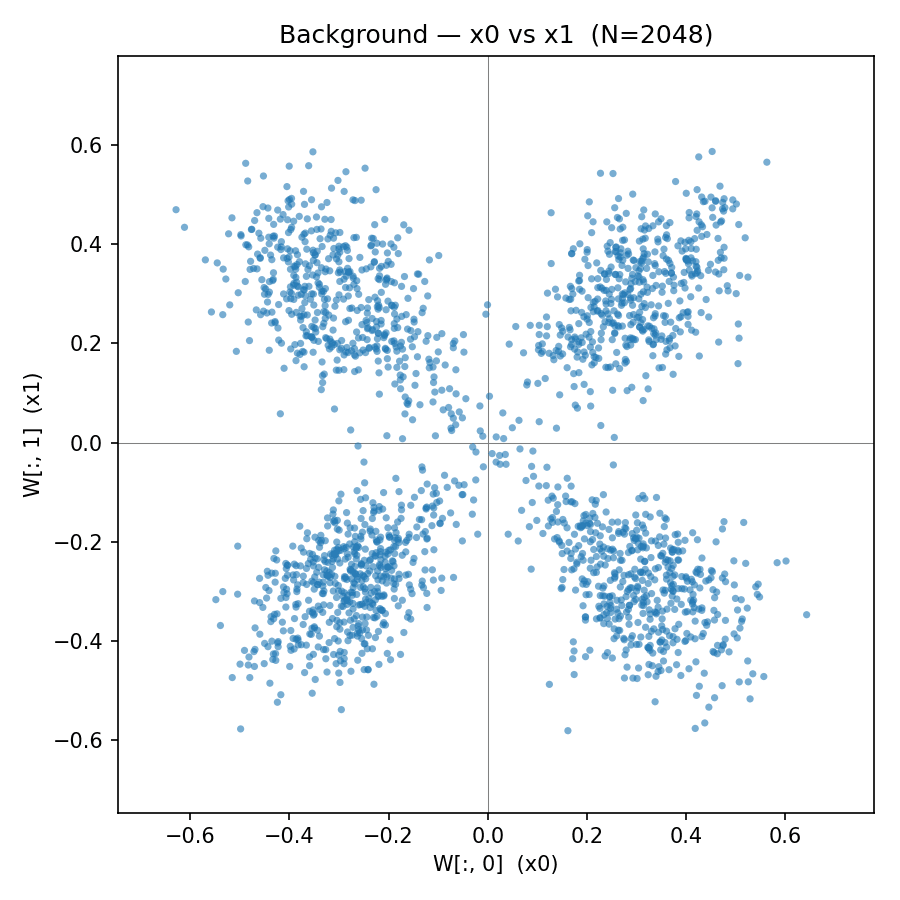

This is the first post in a planned series about mean field theory by Dmitry and Lauren (this post was generated by Dmitry with lots of input from Lauren, and was split into two parts, the second of which is written jointly). These posts are a combination of an explainer and some original research/ experiments. The goal of these posts is to explain an approach to understanding and interpreting model internals which we informally denote "mean field theory" or MFT. In the literature, the closest matching term is "adaptive mean field theory". We will use the term loosely to denote a rich emerging literature that applies many-body thermodynamic methods to neural net interpretability. It includes work on both Bayesian learning and dynamics (SGD), and work in wider "NNFT" (neural net field theor

Regional Content Creation 2026: Earn ₹20K-3L in Hindi/Tamil/Telugu

Regional Content Creation 2026: Earn ₹20K-3L in Hindi/Tamil/Telugu Why brands are paying premium for Indian language content (and how to cash in) The Biggest Opportunity in Indian Content (Nobody's Talking About) Here's a stat that'll blow your mind: 90% of new internet users in India prefer content in their native language. But here's the kicker: Only 5% of creators are making quality regional content. That's a 90% demand, 5% supply gap. And brands are desperate to fill it. Why Regional Content Wins in 2026 India 1. The English Internet is Saturated 📉 10M+ English YouTube creators in India Every niche is crowded (tech, finance, motivation) CPMs are dropping (₹40-₹80 RPM) Algorithm favors established players 2. Regional Internet is Exploding 📈 400M+ Indians speak Hindi (online) 80M+ Tami
