Sublinear-query relative-error testing of halfspaces

Abstract: The relative-error property testing model was introduced in [CDHLNSY24] to facilitate the study of property testing for "sparse" Boolean-valued functions, i.e. ones for which only a small fraction of all input assignments satisfy the function. In this framework, the distance from the unknown target function $f$ that is being tested to a function $g$ is defined as $\mathrm{Vol}(f \mathop{\triangle} g)/\mathrm{Vol}(f)$, where the numerator is the fraction of inputs on which $f$ and $g$ disagree and the denominator is the fraction of inputs that satisfy $f$. Recent work [CDHNSY26] has shown that over the Boolean domain $\{0,1\}^n$, any relative-error testing algorithm for the fundamental class of halfspaces (i.e. linear threshold functions) must make $\Omega(\log n)$ oracle calls. In this paper we complement the [CDHNSY26] lower bound by showing that halfspaces can be relative-error tested over $\mathbb{R}^n$ under the standard $N(0,I_n)$ Gaussian distribution using a sublinear number of oracle calls -- in particular, substantially fewer than would be required for learning. Our results use a wide range of tools including Hermite analysis, Gaussian isoperimetric inequalities, and geometric results on noise sensitivity and surface area.
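
To make the distance measure concrete, the following is a minimal sketch that estimates $\mathrm{Vol}(f \mathop{\triangle} g)/\mathrm{Vol}(f)$ for two halfspaces by naive Monte Carlo sampling from $N(0,I_n)$. It is not the paper's testing algorithm; it only illustrates the definition. The helper names `halfspace` and `estimate_rel_dist`, the sample counts, and the example thresholds are all assumptions chosen for illustration.

```python
import random


def halfspace(w, theta):
    """Return the indicator x -> [w . x >= theta] as a Python function."""
    def f(x):
        return sum(wi * xi for wi, xi in zip(w, x)) >= theta
    return f


def estimate_rel_dist(f, g, n, samples=100_000, seed=0):
    """Monte Carlo estimate of Vol(f triangle g) / Vol(f) under N(0, I_n).

    Both volumes are estimated from the same Gaussian samples, so the
    common 1/samples factor cancels in the ratio.
    """
    rng = random.Random(seed)
    sat_f = 0      # samples with f(x) = 1
    disagree = 0   # samples with f(x) != g(x)
    for _ in range(samples):
        x = [rng.gauss(0.0, 1.0) for _ in range(n)]
        fx, gx = f(x), g(x)
        sat_f += fx
        disagree += (fx != gx)
    if sat_f == 0:
        # Naive sampling breaks down when f is very sparse.
        raise ValueError("no sample satisfied f; cannot estimate the ratio")
    return disagree / sat_f


if __name__ == "__main__":
    # Two parallel halfspaces x_1 >= 2.0 and x_1 >= 2.2 in R^3.
    f = halfspace([1.0, 0.0, 0.0], 2.0)
    g = halfspace([1.0, 0.0, 0.0], 2.2)
    print(estimate_rel_dist(f, g, n=3))
```

For this pair, the absolute distance $\mathrm{Vol}(f \mathop{\triangle} g)$ is below 0.01, yet the relative-error distance is roughly 0.39, because $f$ itself is satisfied by only about 2.3% of Gaussian inputs. This is the sense in which the relative-error model stays meaningful for sparse functions where the standard distance does not.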

Subjects: Data Structures and Algorithms (cs.DS); Computational Complexity (cs.CC)

Cite as: arXiv:2604.01557 [cs.DS]

(or arXiv:2604.01557v1 [cs.DS] for this version)

https://doi.org/10.48550/arXiv.2604.01557

arXiv-issued DOI via DataCite (pending registration)

Submission history

From: Yizhi Huang

[v1] Thu, 2 Apr 2026 03:01:25 UTC (197 KB)

Sign in to highlight and annotate this article

Conversation starters

Daily AI Digest

Get the top 5 AI stories delivered to your inbox every morning.

More about

modelannounceanalysis

Tutorial - How to Toggle On/OFf the Thinking Mode Directly in LM Studio for Any Thinking Model

LM Studio is an exceptional tool for running local LLMs, but it has a specific quirk: the "Thinking" (reasoning) toggle often only appears for models downloaded directly through the LM Studio interface. If you use external GGUFs from providers like Unsloth or Bartowski, this capability is frequently hidden. Here is how to manually activate the Thinking switch for any reasoning model. ### Method 1: The Native Way (Easiest) The simplest way to ensure the toggle appears is to download models directly within LM Studio. Before downloading, verify that the **Thinking Icon** (the green brain symbol) is present next to the model's name. If this icon is visible, the toggle will work automatically in your chat window. ### Method 2: The Manual Workaround (For External Models) If you prefer to manage

Building the Memory Layer for a Voice AI Agent

Photo by Enchanted Tools on Unsplash Voice AI raises the bar for responsiveness completely. In a chatbot, a two or three second delay feels acceptable. In voice, that same delay feels strange. People start wondering if the app heard them, whether the microphone failed, or if they should repeat themselves. Voice is much less forgiving. That was the main thing I kept running into while experimenting with a voice journal app: a voice-first app powered by Sarvam AI for speech to text and text to speech conversion and Redis Agent Memory Server for memory. It’s a pretty straight forward app. A user speaks, the app transcribes the audio, decides whether the user wants to save something or ask something, fetches the right context, and then responds back in voice. What makes it interesting is build

Knowledge Map

Connected Articles — Knowledge Graph

This article is connected to other articles through shared AI topics and tags.

More in Research Papers

![[D] ICML reviewer making up false claim in acknowledgement, what to do?](https://d2xsxph8kpxj0f.cloudfront.net/310419663032563854/konzwo8nGf8Z4uZsMefwMr/default-img-matrix-rain-CvjLrWJiXfamUnvj5xT9J9.webp)

[D] ICML reviewer making up false claim in acknowledgement, what to do?

In a rebuttal acknowledgement we received, the reviewer made up a claim that our method performs worse than baselines with some hyperparameter settings. We did do a comprehensive list of hyperparameter comparisons and the reviewer's claim is not supported by what's presented in the paper. In this case what can we do? submitted by /u/dontknowwhattoplay [link] [comments]

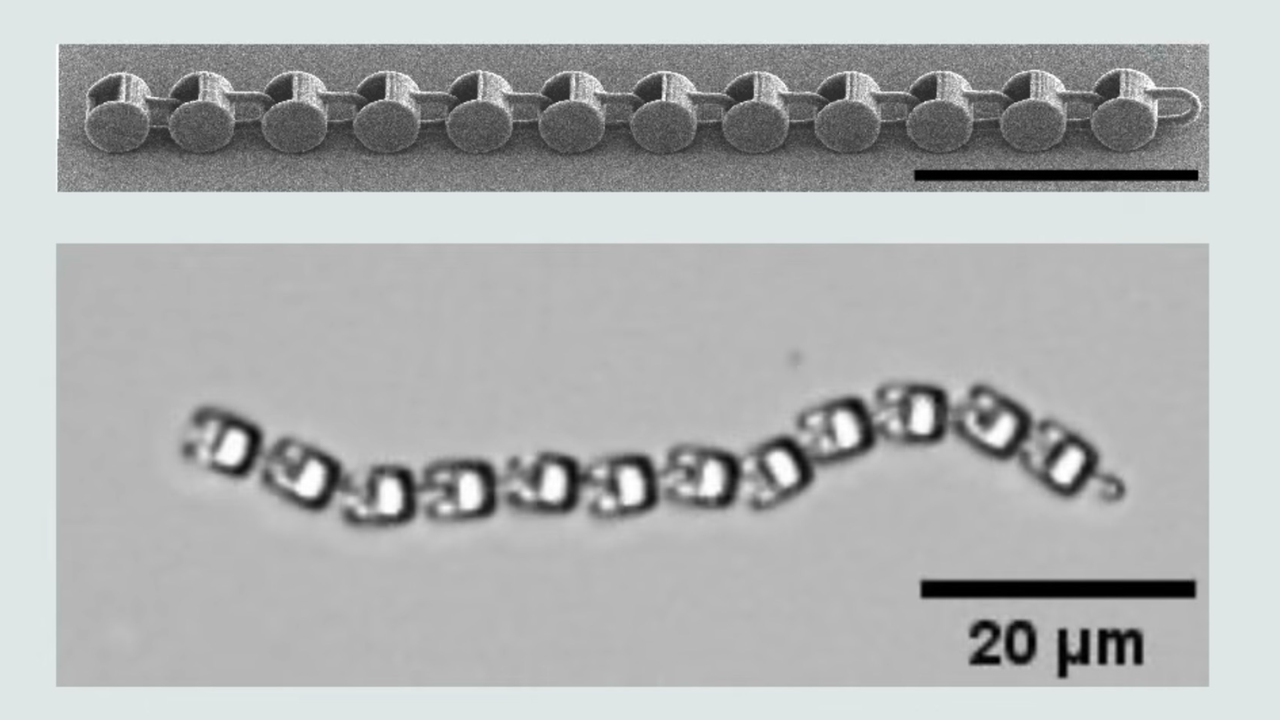

Researchers 3D print robot the size of a single-cell organism — devices move and navigate even without a ‘brain,’ uses their shape and the environment to get going

Researchers 3D print robot the size of a single-cell organism — devices move and navigate even without a ‘brain,’ uses their shape and the environment to get going

Discussion

Sign in to join the discussion

No comments yet — be the first to share your thoughts!