Site Audit Checklist: Onboarding a New Client for Performance Monitoring


Most onboarding checklists are either too light ("run a test and send a report") or too heavy (a long enterprise worksheet no one follows). Agency teams need something in between: a practical checklist you can run repeatedly, with enough structure to avoid blind spots.

This guide is built for teams onboarding client sites into ongoing performance monitoring. The goal is clear: move from "new client handover" to "monitoring is live, scoped, and actionable" without spending two weeks in setup mode.

If you need the monthly review workflow after onboarding, pair this with Monthly Performance Review Template for Agency Teams (https://dev.to/blog/monthly-performance-review-template-for-agency-teams).

What teams usually need from a site audit checklist

Most teams need three things from an onboarding checklist:

- A template they can copy directly.
- A sequence of actions in the right order.
- Clarity on what matters most in the first week.

This article covers all three. It starts with a copy/paste checklist, then explains how to use each section so your first monitoring cycle produces decisions, not just numbers.

Before you touch tools: lock scope and ownership

Do not begin with a full crawl and a 200-row spreadsheet. Start with agreement.

For each client, lock these five items first:

- Primary domain and critical subdomains
- Priority templates (homepage, lead form, pricing, service/product, checkout)
- Mobile and desktop coverage
- Reporting cadence (weekly internal, monthly client-facing)
- Alert recipients and first-response owner

If you skip this step, the first client conversation usually becomes "why are these pages here?" instead of "what do we fix first?"
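One way to make the "lock these five items first" rule checkable is to keep scope as a small record and refuse to start setup while any field is empty. The sketch below is only an illustration: `ClientScope`, its field names, and `missing_scope_items` are hypothetical and do not come from any monitoring tool.

```python
from dataclasses import dataclass, field


@dataclass
class ClientScope:
    """Hypothetical record of the five items to agree on before setup."""
    primary_domain: str = ""
    priority_templates: list[str] = field(default_factory=list)
    devices: list[str] = field(default_factory=list)
    reporting_cadence: dict[str, str] = field(default_factory=dict)
    alert_owner: str = ""


def missing_scope_items(scope: ClientScope) -> list[str]:
    """Return the names of scope fields that are still empty."""
    return [name for name, value in vars(scope).items() if not value]


scope = ClientScope(
    primary_domain="example.com",
    priority_templates=["homepage", "pricing", "lead form"],
    devices=["mobile", "desktop"],
    reporting_cadence={"internal": "weekly", "client": "monthly"},
)
print(missing_scope_items(scope))  # ['alert_owner']
```

An empty result means scope is locked and tool setup can begin.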

Site audit checklist (copy/paste template)

Use this in your docs tool, ticketing system, or onboarding runbook.

// Site Audit Checklist: Performance Monitoring Onboarding
// Client: [NAME]
// Domain(s): [DOMAIN]
// Owner: [NAME]
// Date: [YYYY-MM-DD]

- Access and context
  - Confirm primary domain + environments (prod/stage)
  - Confirm CMS / stack basics (WordPress, Shopify, custom, etc.)
  - Confirm deployment owner / technical contact
  - Confirm analytics and consent constraints

- URL inventory
  - Pull URLs from sitemap(s)
  - Add business-critical URLs manually (pricing, lead form, key landing pages)
  - Remove obvious noise pages (search params, utility pages, test paths)
  - Group pages by template type where possible

- Measurement setup
  - Enable mobile + desktop testing
  - Set test frequency by site priority
  - Confirm test quota and page limits match plan
  - Confirm data retention expectation (30/90/365 days or plan default)

- Baseline capture (first run)
  - Run initial tests for priority pages
  - Record baseline LCP / INP / CLS and performance score
  - Mark currently failing pages and highest-severity regressions
  - Note pages with no field data so expectations are clear

- Budgets and alerts
  - Set initial thresholds (LCP, INP, CLS) per site/template
  - Set alert channels (email / Slack / webhook where available)
  - Confirm cooldown and escalation owner
  - Confirm who receives alerts and who owns first response

- Reporting readiness
  - Decide client-facing summary format (call, email, PDF/report link)
  - Define monthly review owner and calendar slot
  - Draft first "what we monitor and why" note for client
  - Confirm next review date

- Handover
  - Create top 3 actions from baseline findings
  - Assign owner + due date for each action
  - Log blockers (hosting, scripts, release dependencies)
  - Share final onboarding summary internally


How to run each section without adding overhead

The checklist above is the scaffold. This section explains how to keep it efficient.

1) Access and context

This is where most onboarding delays begin. The most common blocker is not technical complexity; it is missing ownership.

Minimum acceptable output from this section:

- one technical contact who can approve changes,
- one business contact who can prioritise pages,
- one statement on environment scope (production only, or production + staging).

If that is not settled, pause setup and resolve it before running baseline tests.

2) URL inventory

Start with sitemap URLs, then force-add business-critical pages. Sitemaps are useful, but they often miss campaign pages, dynamic pricing paths, or recently launched funnels.

A practical first pass for most sites:

- 1 homepage URL
- 2-5 conversion URLs (pricing, lead form, checkout, booking)
- 5-10 high-traffic content or service templates

That gives you enough coverage to catch meaningful regressions without drowning your team in low-value alerts.
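The "sitemap first, then force-add critical pages" pass can be sketched in a few lines. This is a minimal illustration using only the Python standard library; the noise prefixes, the sample sitemap, and the function names are assumptions for the example, and real sites will need their own filters.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlsplit

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

# Hypothetical noise markers; adjust per client.
NOISE_PATH_PREFIXES = ("/search", "/test", "/cart")


def urls_from_sitemap(xml_text: str) -> list[str]:
    """Extract <loc> values from a standard <urlset> sitemap."""
    root = ET.fromstring(xml_text)
    return [loc.text.strip() for loc in root.findall("sm:url/sm:loc", SITEMAP_NS) if loc.text]


def is_noise(url: str) -> bool:
    """Treat URLs with query strings or utility/test paths as noise."""
    parts = urlsplit(url)
    return bool(parts.query) or parts.path.startswith(NOISE_PATH_PREFIXES)


def build_inventory(sitemap_xml: str, critical_urls: list[str]) -> list[str]:
    """Critical URLs first, then filtered sitemap URLs, without duplicates."""
    candidates = critical_urls + [u for u in urls_from_sitemap(sitemap_xml) if not is_noise(u)]
    return list(dict.fromkeys(candidates))  # de-duplicate, keep order


SAMPLE_SITEMAP = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/pricing</loc></url>
  <url><loc>https://example.com/search?q=shoes</loc></url>
  <url><loc>https://example.com/blog/launch</loc></url>
</urlset>"""

inventory = build_inventory(
    SAMPLE_SITEMAP,
    critical_urls=["https://example.com/pricing", "https://example.com/book-a-demo"],
)
print(inventory)
```

Critical URLs are deliberately not passed through the noise filter, so a page the business cares about stays in scope even if it is missing from the sitemap.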

3) Measurement setup

Always enable both mobile and desktop. Even if the client says "our users are mostly desktop", mobile regressions still affect search visibility and user experience on mixed traffic.

Set test cadence based on risk:

- high-change sites: daily
- medium-change sites: weekly
- stable sites: weekly or monthly

Avoid a one-size-fits-all setup where every site gets the same frequency. Match cadence to release behaviour and business risk.
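The cadence rule above can be written down as a tiny lookup so it is applied the same way for every client. In this sketch the `ChangeRate` enum and `cadence_for` function are hypothetical names, and using a `business_critical` flag to choose between weekly and monthly for stable sites is an assumption, not something the rule prescribes.

```python
from enum import Enum


class ChangeRate(Enum):
    HIGH = "high"
    MEDIUM = "medium"
    STABLE = "stable"


def cadence_for(change_rate: ChangeRate, business_critical: bool = False) -> str:
    """Map release behaviour (and, for stable sites, business risk) to a test cadence."""
    if change_rate is ChangeRate.HIGH:
        return "daily"
    if change_rate is ChangeRate.MEDIUM:
        return "weekly"
    # Stable sites: weekly only when the business risk justifies it (assumed tie-breaker).
    return "weekly" if business_critical else "monthly"


print(cadence_for(ChangeRate.HIGH))                            # daily
print(cadence_for(ChangeRate.STABLE))                          # monthly
print(cadence_for(ChangeRate.STABLE, business_critical=True))  # weekly
```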

4) Baseline capture

A baseline is not "export all scores". It is a snapshot you can compare against in four weeks.

For each priority page, record:

- current LCP, INP, CLS
- current performance score
- current status (within threshold / out of threshold)
- one likely cause if out of threshold
- one likely business impact

Those last two items are what make the baseline usable in client calls.
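A baseline entry with those fields might look like the sketch below. The record shape and names are hypothetical. The limits used (LCP 2.5 s, INP 200 ms, CLS 0.1) are Google's published "good" thresholds for Core Web Vitals, used here only as provisional starter values.

```python
from dataclasses import dataclass

# Provisional starter thresholds (Core Web Vitals "good" limits); revise per client.
THRESHOLDS = {"lcp_s": 2.5, "inp_ms": 200, "cls_score": 0.1}


@dataclass
class BaselineEntry:
    """Hypothetical baseline record for one priority page."""
    url: str
    lcp_s: float
    inp_ms: float
    cls_score: float
    performance_score: int
    likely_cause: str = ""
    business_impact: str = ""

    def failing_metrics(self) -> list[str]:
        """Names of metrics that exceed their threshold."""
        return [name for name, limit in THRESHOLDS.items() if getattr(self, name) > limit]

    @property
    def status(self) -> str:
        return "out of threshold" if self.failing_metrics() else "within threshold"


entry = BaselineEntry(
    url="https://example.com/pricing",
    lcp_s=3.8,
    inp_ms=180,
    cls_score=0.05,
    performance_score=62,
    likely_cause="large unoptimised hero image",
    business_impact="slow first view on the main conversion page",
)
print(entry.status, entry.failing_metrics())  # out of threshold ['lcp_s']
```

Keeping cause and impact on the same record as the numbers means the four-week comparison carries its own narrative.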

5) Budgets and alerts

Budgets and alerts are where monitoring becomes operational.

Do not over-tune on day one. Set initial thresholds, then adjust after one month of data. The objective is a stable signal, not perfect thresholds in the first week.

When setting alert channels, define response paths explicitly:

- who receives first alert,
- who triages,
- who communicates externally,
- what counts as escalation.

Without this, alerts become noise and trust drops quickly.
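A cooldown is one simple way to keep alerts from turning into noise: after an alert fires for a page and metric, repeats are suppressed for a fixed window. The `AlertGate` class below is a hypothetical sketch of that idea, not the behaviour of any specific monitoring product, and the six-hour window is an arbitrary example value.

```python
from datetime import datetime, timedelta


class AlertGate:
    """Suppress repeat alerts for the same page and metric during a cooldown window."""

    def __init__(self, cooldown: timedelta) -> None:
        self.cooldown = cooldown
        self._last_sent: dict[tuple[str, str], datetime] = {}

    def should_send(self, url: str, metric: str, now: datetime) -> bool:
        key = (url, metric)
        last = self._last_sent.get(key)
        if last is not None and now - last < self.cooldown:
            return False  # still cooling down; do not notify again
        self._last_sent[key] = now
        return True


gate = AlertGate(cooldown=timedelta(hours=6))
t0 = datetime(2025, 1, 6, 9, 0)
page = "https://example.com/pricing"

print(gate.should_send(page, "LCP", t0))                       # True
print(gate.should_send(page, "LCP", t0 + timedelta(hours=1)))  # False
print(gate.should_send(page, "LCP", t0 + timedelta(hours=7)))  # True
```

The gate only decides whether to notify. Who receives the alert and who triages still has to be agreed separately.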

6) Reporting readiness

If the client is onboarding, they need clarity more than polish.

First-cycle reporting should answer:

- What we monitor.
- What is currently failing.
- What we will fix first.
- What we need from you (if anything).

You can upgrade format later (dashboards, PDFs, branded summaries). Start with consistency.

7) Handover

A clean handover has only three mandatory outputs:

- top three actions,
- owner and due date for each action,
- known blockers.

If you end onboarding without those, you have setup but not momentum.

Priority matrix for first-week triage

Use this quick matrix when multiple regressions appear at once:

| Impact | Effort | Priority |
| --- | --- | --- |
| High business impact | Low effort | Do first |
| High business impact | High effort | Plan this cycle |
| Low business impact | Low effort | Batch with other fixes |
| Low business impact | High effort | Backlog unless it trends worse |

This keeps your first month focused on visible wins rather than interesting low-impact fixes.
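The matrix is small enough to encode directly, which helps when several regressions land in the same week and need a consistent order. The function name and the sample regressions below are made up for illustration.

```python
def triage_priority(high_impact: bool, low_effort: bool) -> str:
    """Return the matrix cell for one regression."""
    if high_impact:
        return "Do first" if low_effort else "Plan this cycle"
    return "Batch with other fixes" if low_effort else "Backlog unless it trends worse"


# Order in which the four outcomes should be worked through.
PRIORITY_ORDER = [
    "Do first",
    "Plan this cycle",
    "Batch with other fixes",
    "Backlog unless it trends worse",
]

# Hypothetical regressions: (description, high business impact?, low effort?)
regressions = [
    ("Blog archive CLS shift", False, True),
    ("Checkout LCP regression", True, False),
    ("Pricing page hero image too large", True, True),
]

ranked = sorted(
    regressions,
    key=lambda r: PRIORITY_ORDER.index(triage_priority(r[1], r[2])),
)
for name, impact, effort in ranked:
    print(f"{triage_priority(impact, effort)}: {name}")
```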

Common onboarding mistakes and how to avoid them

Tracking too many pages too early

A long page list feels thorough, but it slows triage and increases alert fatigue. Start with the minimum meaningful set, then expand after your first review cycle.

No alert owner

Shared inbox alerts with no owner create silent regressions. Assign one response owner before the first scheduled run.

Baseline with no narrative

"LCP is 3.8s" alone is not useful. Pair every key metric with context:

- page type,
- suspected cause,
- likely user/business impact.

That turns metrics into decisions.

Promise mismatch on reporting

Do not promise polished monthly packs before setup stabilises. The first cycle should prioritise baseline clarity and top actions.

Mixing diagnosis with onboarding

Onboarding is not root-cause analysis on every issue. Capture the issue, assign severity, and create an action list. Deep diagnosis can happen later, during delivery sprints.

Suggested first-month cadence after onboarding

Use a straightforward rhythm:

- Week 1: onboarding, baseline, first top-three action list
- Week 2: ship highest-impact fixes
- Week 3: verify against fresh runs
- Week 4: run monthly review and reset priorities

If thresholds still feel loose, use the Performance Budget Thresholds Template before your first full client review.

Example onboarding summary you can send to a client

Use this short format once setup is complete:

Subject: Performance monitoring onboarding complete — next steps

We have completed onboarding for [DOMAIN].

Monitoring scope:

- [N] priority pages across [template groups]

- Mobile and desktop tracking enabled

- Baseline recorded for LCP, INP, CLS, and performance score

Current status:

- [X] pages within thresholds

- [Y] pages needing attention

- Top risk: [short description]

Next three actions:

- [Action] (owner: [name], due: [date])

- [Action] (owner: [name], due: [date])

- [Action] (owner: [name], due: [date])


This is usually enough for the first cycle. You can move to a fuller monthly format once trends are visible.
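If you send this summary for many clients, filling it from data avoids copy/paste slips. The sketch below follows the same structure as the template. `render_summary` and all the sample values are hypothetical.

```python
def render_summary(
    domain: str,
    page_count: int,
    template_groups: list[str],
    within: int,
    needing_attention: int,
    top_risk: str,
    actions: list[tuple[str, str, str]],
) -> str:
    """Fill the onboarding summary; actions are (action, owner, due date) tuples."""
    lines = [
        f"We have completed onboarding for {domain}.",
        "",
        "Monitoring scope:",
        f"- {page_count} priority pages across {', '.join(template_groups)}",
        "- Mobile and desktop tracking enabled",
        "- Baseline recorded for LCP, INP, CLS, and performance score",
        "",
        "Current status:",
        f"- {within} pages within thresholds",
        f"- {needing_attention} pages needing attention",
        f"- Top risk: {top_risk}",
        "",
        "Next three actions:",
    ]
    # Only the top three actions go into the first-cycle summary.
    lines += [f"- {action} (owner: {owner}, due: {due})" for action, owner, due in actions[:3]]
    return "\n".join(lines)


summary = render_summary(
    domain="example.com",
    page_count=12,
    template_groups=["homepage", "conversion pages", "service templates"],
    within=9,
    needing_attention=3,
    top_risk="slow LCP on the pricing page",
    actions=[
        ("Compress pricing hero image", "Sam", "2025-01-13"),
        ("Defer chat widget script", "Priya", "2025-01-15"),
        ("Reserve space for banner to cut CLS", "Lee", "2025-01-17"),
    ],
)
print(summary)
```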

FAQ

How many pages should we include in the first onboarding pass?

Usually 10-20 priority URLs is enough for a reliable baseline. Expand only after your team can keep up with triage.

Should we onboard mobile first or both mobile and desktop?

Both. One-device monitoring hides regressions and creates reporting gaps.

Do we need complete page-type classification before monitoring starts?

No. Start with practical buckets (homepage, conversion pages, core templates), then refine over time.

What if the client has no clear target thresholds yet?

Use pragmatic starter thresholds, mark them provisional, and revise after one month of observed data.

How long should onboarding take per client?

For typical brochure/ecommerce sites, setup and first baseline can usually be done in 30-90 minutes if ownership and access are clear.

What should we do if alerts spike in week one?

Check whether scope is too broad or thresholds are too strict. Triage by business impact and fix ownership before widening coverage.

A good site audit checklist does more than capture URLs and scores. It creates operating rhythm: clear scope, clear owners, and clear next actions. That is what makes monitoring useful after month one.

DEV Community

https://dev.to/apogeewatcher/site-audit-checklist-onboarding-a-new-client-for-performance-monitoring-4bbd

Conversation starters

Daily AI Digest

Get the top 5 AI stories delivered to your inbox every morning.

More about

releaselaunchavailable

Exclusive | The Sudden Fall of OpenAI’s Most Hyped Product Since ChatGPT - wsj.com

<a href="https://news.google.com/rss/articles/CBMiogNBVV95cUxPTmtKMzM0aFA2dXlOc1ZnWlpCN1c0WEotQnNlSENhVlN3S3ZoRmhoeHMzMzU1TWpzTTh2N0Q2OUxkMkNfNF9UVll3WF9DWkJPTGpFOVV0ZXNTWkdLb2lJQk9wcGFHdDVHVE9YeFhrSHJHOTJ4YjFILUltV0V4YUFTaGJjTDNUMHVWSUpQc21pNjVRWUwtYUdvSzA3VS1udmh0MnBuN0ctaE9fWEJuZXpLcUp6OFcxSjZxbmhmMkJhVUlTSGZCSWhhYnVLa21zRVZUMTZleWQzc05rVUZtTDhZTmtPanhQTk01c0VCa3JWVXVTNlR3R19oamx6dE0wSFUxUUhTTEIxSHBwVVZjcm9FbFJHalBKZ29IWmM0aGxhUm5KbS1weVhwWDZHR3c5Q084YWxGanpDQTJySHRxWVFNOFNaZGxMZjBoeUhqcUtPVVRKMHA4Rkl0SmFzalZiamNLTnR0MGpzSTZ1M3hQTXhMUmg0ZVp1MUJFcWZNZ19GT3Zid3JCb2dKZFNVX2EwcWZXMmc1ZEJVbXJUSm9nLTNxWjBB?oc=5" target="_blank">Exclusive | The Sudden Fall of OpenAI’s Most Hyped Product Since ChatGPT</a> <font color="#6f6f6f">wsj.com</font>

Exclusive | The Sudden Fall of OpenAI’s Most Hyped Product Since ChatGPT - wsj.com

<a href="https://news.google.com/rss/articles/CBMiogNBVV95cUxOejRwbUJkOHRhVGRaQ1FLeHQwYjVoakxuTFVPN3c5Z0RIdmdjTW5LdlpSbVppWGl2WTg5dzdEYmpsekFIMWxaamRWRUN3V0NBdS1rYklHT0ktOEZud0tYOHNqc1ZhMmo0UGMybHFWR0RIRWZWMGI3dzF4Umw5ZTNBOXY0OF9PNEJDbWxnUUNzQ3h2U0lubEFUQXpHY1ctSjkxV0pSUGZRTnVZUGdrMndIaGJLcERzM2t6RDA3ZXpYeWU2R3lQVXpUZW91NHpIbTlpbHRMYjg5MGxUa2QzRVJoU09LMjQzc1lEN3VVZ2hzRkxRdUtJVmtKTmMwMHRTUEJaazJmcTMycEdNY3J6T3BCLVBoZGVTV2ZoNXVKS09JUGFuaURobmlmY0l3X0xEVWFfTTBLOHdYVExhcXREcnNWUFZNb1NPR2dKc2ZFcEVrZWltcndFSFZlb0RtSlc1djc3SHlpQVVPUzNaOFpPLVhBeFlLQUxvYnctOG9SRzVTbjJkQVVsaWpIN0dnUDJvdEIzby0tbXhZNy1PQ0NSYy1URThn?oc=5" target="_blank">Exclusive | The Sudden Fall of OpenAI’s Most Hyped Product Since ChatGPT</a> <font color="#6f6f6f">wsj.com</font>

Knowledge Map

Connected Articles — Knowledge Graph

This article is connected to other articles through shared AI topics and tags.

More in Releases

Orders of magnitude: use semitones, not decibels

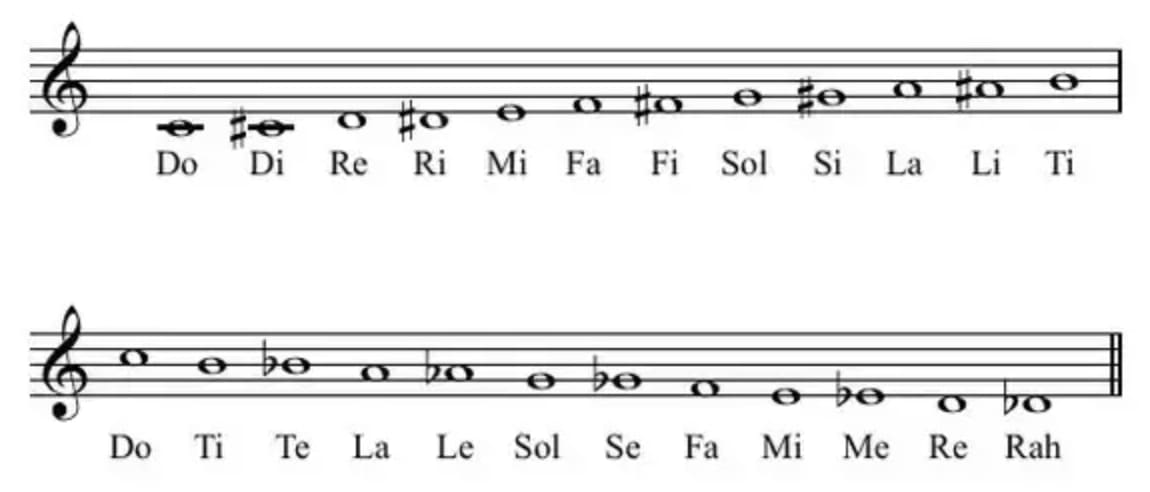

I'm going to teach you a secret. It's a secret known to few, a secret way of using parts of your brain not meant for mathematics ... for mathematics. It's part of how I (sort of) do logarithms in my head. This is a nearly purposeless skill. What's the growth rate? What's the doubling time? How many orders of magnitude bigger is it? How many years at this rate until it's quintupled? All questions of ratios and scale. Scale... hmm. 'Wait', you're thinking, 'let me check the date...'. Indeed. But please, stay with me for the logarithms. Musical intervals as ratios, and God's joke If you're a music nerd like me, you'll know that an octave (abbreviated 8ve), the fundamental musical interval, represents a doubling of vibration frequency. So if A440 is at 440Hz, then 220Hz and 880Hz are also 'A'.

Maintaining Open Source in the AI Era

<p>I've been maintaining a handful of open source packages lately: <a href="https://pypi.org/project/mailview/" rel="noopener noreferrer">mailview</a>, <a href="https://pypi.org/project/mailjunky/" rel="noopener noreferrer">mailjunky</a> (in both Python and Ruby), and recently dusted off an old Ruby gem called <a href="https://rubygems.org/gems/tvdb_api/" rel="noopener noreferrer">tvdb_api</a>. The experience has been illuminating - not just about package management, but about how AI is changing open source development in ways I'm still processing.</p> <h2> The Packages </h2> <p><strong>mailview</strong> started because I missed <a href="https://github.com/ryanb/letter_opener" rel="noopener noreferrer">letter_opener</a> from the Ruby world. When you're developing a web application, you don

Kia’s compact EV3 is coming to the US this year, with 320 miles of range

At the New York International Auto Show on Wednesday, Kia announced that its compact electric SUV, the EV3, will be available in the US "in late 2026." The EV3 has been available overseas since 2024, when it launched in South Korea and Europe. The 2027 model coming to the US appears to have the same […]

Self-Referential Generics in Kotlin: When Type Safety Requires Talking to Yourself

<p><a href="https://media2.dev.to/dynamic/image/width=800%2Cheight=%2Cfit=scale-down%2Cgravity=auto%2Cformat=auto/https%3A%2F%2Fdev-to-uploads.s3.amazonaws.com%2Fuploads%2Farticles%2Fvjglglqe2f834rnz4t98.png" class="article-body-image-wrapper"><img src="https://media2.dev.to/dynamic/image/width=800%2Cheight=%2Cfit=scale-down%2Cgravity=auto%2Cformat=auto/https%3A%2F%2Fdev-to-uploads.s3.amazonaws.com%2Fuploads%2Farticles%2Fvjglglqe2f834rnz4t98.png" alt=" " width="800" height="436"></a></p> <p>Kotlin's type system is expressive enough to let you write code that is simultaneously statically typed, runtime validated, and ergonomic at the call site. That combination usually requires some machinery — and understanding <em>why</em> the machinery exists, rather than just how to copy it, is the diff

Discussion

Sign in to join the discussion

No comments yet — be the first to share your thoughts!