Setting Up a Production-Ready Laravel Stack: Nginx, PHP 8.4, MySQL, Valkey & Supervisor


A Laravel application running on your local machine with php artisan serve and a SQLite database is a fundamentally different beast from a Laravel application serving thousands of requests per minute in production. The gap between development and production is not just about code. It is about infrastructure: a properly tuned web server, an optimized PHP runtime, a robust database engine, a fast cache layer, and a process manager that keeps your queue workers alive.

Building this stack manually is a rite of passage for many developers, but it is also a minefield of configuration mistakes, security oversights, and wasted hours. Deploynix provisions this entire stack automatically when you create an App Server, configured with production-grade defaults that reflect years of Laravel deployment experience.

In this post, we will break down every component of the Deploynix production stack, explain how they fit together, and show you where and how to customize each piece.

The Architecture at a Glance

When you provision an App Server through Deploynix, you get a fully configured stack that looks like this:

Client Request
      |
      v
   [Nginx] --> [PHP 8.4 FPM] --> [Your Laravel App]
                                   |            |
                                   v            v
                          [MySQL/MariaDB/    [Valkey]
                           PostgreSQL]       (Cache, Sessions, Queues)
                                                |
                                                v
                                          [Supervisor]
                                          (Queue Workers, Daemons)

Each component has a specific role, and they communicate through well-defined interfaces. Let us examine each one.

Nginx: The Front Door

Nginx is the first thing that touches an incoming HTTP request. It serves as a reverse proxy, sitting between the internet and your PHP application. Its job is to handle the things that PHP should not waste cycles on: serving static files, managing SSL/TLS termination, connection buffering, and request routing.

What Deploynix Configures

When you create a site, Deploynix generates an Nginx virtual host configuration tailored for Laravel. This includes:

The web root is set to your application's /public directory. This is critical because Laravel's index.php file lives here, and no other application files should be directly accessible from the web.

Static file handling is configured so that Nginx serves CSS, JavaScript, images, and fonts directly without ever touching PHP. This dramatically reduces load on your PHP-FPM pool. Nginx can serve thousands of static file requests per second with minimal CPU usage.

The try_files directive implements Laravel's routing convention: try the requested URI as a file first, then as a directory, and finally pass it to index.php as a fallback. This is the mechanism that makes Laravel's clean URLs work.
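To make the fallback order concrete, here is a minimal Python sketch of the decision Nginx makes. It assumes the directive is the one commonly used for Laravel, try_files $uri $uri/ /index.php?$query_string; the article does not show the exact line Deploynix writes. The function name and the sample files and directories are hypothetical.

```python
def resolve_try_files(uri, files, dirs):
    """Mimic try_files $uri $uri/ /index.php for one request path."""
    path = uri.split("?", 1)[0]          # the query string is not part of $uri
    if path in files:                    # 1. an existing file is served as-is
        return f"static:{path}"
    if path.rstrip("/") in dirs:         # 2. then an existing directory
        return f"directory:{path.rstrip('/')}"
    return "php:/index.php"              # 3. otherwise Laravel's front controller


# Hypothetical contents of the public/ directory.
public_files = {"/css/app.css", "/robots.txt"}
public_dirs = {"/build"}

asset = resolve_try_files("/css/app.css?v=3", public_files, public_dirs)
folder = resolve_try_files("/build/", public_files, public_dirs)
route = resolve_try_files("/users/42", public_files, public_dirs)
```

Only the third case ever reaches PHP-FPM, which is why static assets stay cheap.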

Gzip compression is enabled for text-based responses (HTML, CSS, JavaScript, JSON, XML), reducing bandwidth usage and improving page load times.

Security headers are added to prevent common attacks: X-Frame-Options, X-Content-Type-Options, and X-XSS-Protection headers are set by default.

Request size limits are configured to handle file uploads while preventing abuse. The default client_max_body_size is set to a reasonable value that you can adjust through the Deploynix dashboard.

Customization

You have full SSH access to customize Nginx configuration for any site. Common customizations include adding custom redirect rules, configuring additional headers, or setting up proxy passes to other services running on the server.

Deploynix validates the configuration syntax with nginx -t before reloading, preventing a bad config from taking down your server.

PHP 8.4 FPM: The Engine

PHP-FPM (FastCGI Process Manager) is the runtime that actually executes your Laravel code. Unlike the built-in PHP development server, FPM manages a pool of worker processes that handle requests concurrently, making it suitable for production workloads.

What Deploynix Configures

PHP 8.4 is installed with all the extensions that Laravel applications commonly need: mbstring, xml, curl, zip, bcmath, gd, intl, soap, readline, mysql (or pgsql), redis, and more. You will rarely need to install additional extensions, but if you do, you can do so through SSH or the web terminal.

FPM pool settings are tuned based on your server's resources. The number of worker processes, the process management strategy (dynamic vs. static), and memory limits are all set to sensible defaults that prevent your server from running out of memory while maximizing throughput.
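As a rough illustration of how a worker count can be derived from server resources, the sketch below divides the memory left over for PHP by an average worker footprint. This is a common rule of thumb, not necessarily the formula Deploynix uses. The function name, the reserved-memory figure, and the per-worker size are all assumptions you would replace with measurements from your own server.

```python
def estimate_max_children(total_ram_mb, reserved_mb, avg_worker_mb):
    """Estimate pm.max_children so the FPM pool cannot exhaust server memory.

    reserved_mb is memory set aside for everything that is not PHP-FPM
    (database, cache, OS); avg_worker_mb is the measured size of one worker.
    """
    if avg_worker_mb <= 0:
        raise ValueError("avg_worker_mb must be positive")
    available_mb = total_ram_mb - reserved_mb
    return max(1, available_mb // avg_worker_mb)


# Assumed figures: 4 GB server, 1.5 GB reserved, 64 MB per worker.
max_children = estimate_max_children(4096, 1536, 64)  # 2560 // 64 = 40
```

Measuring the real per-worker memory under load matters more than the formula itself, because a heavier application shrinks the safe pool size quickly.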

OPcache is enabled and configured for production. OPcache stores precompiled PHP bytecode in shared memory, eliminating the need to parse and compile PHP files on every request. This alone can improve response times by 50-70% compared to running without OPcache.

Key php.ini settings are optimized for production:

- memory_limit is set appropriately for your server size
- max_execution_time is set to prevent runaway scripts
- upload_max_filesize and post_max_size are configured for reasonable file uploads
- display_errors is off (errors go to logs, not to users)
- error_reporting captures all errors for logging

PHP Version Management

Deploynix supports multiple PHP versions on the same server. If you need to run one site on PHP 8.3 and another on PHP 8.4, each site can target a different PHP-FPM pool. This is particularly useful during PHP version transitions when you need to test compatibility before upgrading everything.

MySQL: The Data Layer

Your database is the most critical piece of infrastructure. It holds your application's state, and losing it means losing everything. Deploynix takes database configuration seriously.

What Deploynix Configures

When you select MySQL (or MariaDB or PostgreSQL) during server provisioning, Deploynix installs and configures the database server with production-appropriate settings.

Character set and collation default to utf8mb4 and utf8mb4_unicode_ci, matching Laravel's default configuration. This ensures proper support for emoji, multilingual content, and special characters.

InnoDB buffer pool is sized based on your server's available memory. This buffer is where MySQL caches data and indexes, and properly sizing it is one of the most impactful performance optimizations you can make.

Connection limits are set to prevent the database from being overwhelmed. The maximum number of connections is tuned to match the number of PHP-FPM workers plus a safety margin for CLI commands and queue workers.
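The two sizing rules above can be sketched in a few lines of Python. The buffer pool fraction and the safety margin are assumptions chosen for illustration, since the article does not state the values Deploynix applies. Both function names are hypothetical.

```python
def innodb_buffer_pool_mb(total_ram_mb, fraction=0.25):
    """Give InnoDB a fixed share of RAM (the 25% default is an assumed value
    for a server that also runs PHP-FPM and Valkey)."""
    return int(total_ram_mb * fraction)


def estimate_max_connections(fpm_workers, queue_workers, safety_margin=10):
    """One connection per PHP-FPM worker and queue worker, plus headroom
    for CLI commands such as migrations."""
    return fpm_workers + queue_workers + safety_margin


buffer_pool = innodb_buffer_pool_mb(4096)           # 1024 MB on a 4 GB server
max_connections = estimate_max_connections(40, 4)   # 40 + 4 + 10 = 54
```

On a dedicated database server the fraction would normally be much higher, because nothing else competes for memory.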

Slow query logging is enabled so you can identify and optimize queries that are dragging down performance.

Binary logging is configured to support point-in-time recovery and replication, should you later add a dedicated database server.

Database Security

Deploynix creates a dedicated database user for each site with permissions limited to that site's database. The root password is generated randomly and stored securely. The database server is bound to localhost by default, meaning it is not accessible from the internet.

If you need remote database access (for example, from a separate application server), Deploynix can configure the firewall to allow connections from specific IP addresses only.

Backups

Deploynix supports automated database backups to multiple storage providers: AWS S3, DigitalOcean Spaces, Wasabi, and any S3-compatible storage service. You can configure backup frequency and retention policies to keep your data safe.

Each backup is a complete mysqldump (or pg_dump for PostgreSQL) that can be restored to any compatible database server. Deploynix also supports on-demand backups before risky operations like major migrations.

Valkey: The Speed Layer

Valkey is a Redis-compatible in-memory data store that Deploynix provisions as part of your App Server stack. If you have used Redis with Laravel before, Valkey is a drop-in replacement that speaks the same protocol and supports the same commands.

What Deploynix Configures

Cache store: Laravel's cache system can use Valkey as its backend, providing sub-millisecond read and write times for cached data. Set CACHE_STORE=redis in your environment variables.

Session storage: Storing sessions in Valkey instead of files or the database eliminates filesystem I/O and database queries for every authenticated request. Set SESSION_DRIVER=redis in your environment variables.

Queue backend: Laravel's queue system works beautifully with Valkey. Pushing jobs to a Valkey-backed queue is fast and reliable, and multiple queue workers can consume jobs concurrently. Set QUEUE_CONNECTION=redis in your environment variables.

Broadcasting: If your application uses real-time events with Laravel Reverb or other WebSocket solutions, Valkey serves as the message broker.

Memory management is configured with an appropriate maxmemory setting based on your server's resources, with an allkeys-lru eviction policy for cache data. This means when memory is full, the least recently used keys are automatically evicted.
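To show what least-recently-used eviction means in practice, here is a small Python sketch. It is a simplification: it caps the number of keys rather than bytes, and it tracks exact recency, whereas Valkey approximates LRU by sampling keys. The class name is hypothetical.

```python
from collections import OrderedDict


class LruCache:
    """Tiny exact-LRU store: reads and writes refresh a key's recency."""

    def __init__(self, max_keys):
        self.max_keys = max_keys
        self._data = OrderedDict()

    def get(self, key):
        if key not in self._data:
            return None
        self._data.move_to_end(key)        # mark as most recently used
        return self._data[key]

    def set(self, key, value):
        self._data[key] = value
        self._data.move_to_end(key)
        while len(self._data) > self.max_keys:
            self._data.popitem(last=False)  # evict the least recently used key


cache = LruCache(max_keys=2)
cache.set("a", 1)
cache.set("b", 2)
cache.get("a")      # "a" is now more recent than "b"
cache.set("c", 3)   # the store is full, so "b" is evicted
```

This is why allkeys-lru suits cache data: an evicted key is simply recomputed on the next miss. Queued jobs stored in the same instance do not have that property, so it is worth keeping an eye on memory usage if cache and queues share one Valkey server.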

Persistence is configured to balance data durability with performance. Valkey takes periodic snapshots of the dataset, allowing recovery after a server restart while minimizing the performance impact of disk writes.

Why Valkey Instead of Redis

Valkey is an open-source fork of Redis that is compatible with the Redis protocol and API. It was launched in 2024 under the Linux Foundation, by former Redis contributors, after Redis changed its licensing. For Laravel applications, the switch from Redis to Valkey is transparent. You use the same redis driver in Laravel, the same PHP extension (phpredis), and the same commands. Deploynix chose Valkey because it remains open source under the BSD license.

Supervisor: The Process Guardian

Supervisor is a process control system that ensures your background processes stay running. In a Laravel context, the most important process it manages is your queue worker, but it can manage any long-running process your application needs.

What Deploynix Configures

Deploynix does not create queue workers automatically during provisioning, because not every application needs them. Instead, the Daemons section of your site dashboard provides an interface for creating Supervisor-managed processes.

When you create a daemon, you specify:

- The command to run (e.g., php artisan queue:work redis --sleep=3 --tries=3 --max-time=3600)
- The number of processes (how many concurrent workers)
- The user to run the process as
- Whether to auto-restart on failure

Deploynix generates the Supervisor configuration, loads it, and starts the process. If the process crashes, Supervisor automatically restarts it. If the server reboots, Supervisor starts all configured processes automatically.
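The article does not show the file Deploynix writes, but the four daemon fields map naturally onto standard Supervisor program options. The Python sketch below renders such a section. The function name, the program name, the user, and the application path are hypothetical.

```python
def render_supervisor_program(name, command, numprocs, user, autorestart=True):
    """Render a Supervisor [program:x] section from the daemon form fields."""
    lines = [
        f"[program:{name}]",
        # Supervisor requires process_num in the name when numprocs > 1.
        "process_name=%(program_name)s_%(process_num)02d",
        f"command={command}",
        f"numprocs={numprocs}",
        f"user={user}",
        "autostart=true",
        f"autorestart={'true' if autorestart else 'false'}",
    ]
    return "\n".join(lines) + "\n"


config = render_supervisor_program(
    name="laravel-worker",  # hypothetical program name
    command="php /home/deploy/app/artisan queue:work redis --sleep=3 --tries=3 --max-time=3600",
    numprocs=2,
    user="deploy",          # hypothetical system user
)
```

The autostart option is what brings the workers back after a reboot, and autorestart covers crashes.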

Common Daemon Configurations

Queue worker: The most common daemon. Processes jobs from your application's queue. Running multiple workers allows concurrent job processing.

php artisan queue:work redis --sleep=3 --tries=3 --max-time=3600


Laravel Reverb: If your application uses WebSockets for real-time features, you can run the Reverb server as a Supervisor-managed daemon.

Custom processes: Any long-running process your application needs, such as a WebSocket server, a stream processor, or a monitoring agent, can be managed through Supervisor.

How the Components Communicate

Understanding the request lifecycle helps you debug issues and optimize performance.

A web request flows like this: The client's browser sends an HTTPS request. Nginx terminates SSL and checks if the requested resource is a static file. If it is, Nginx serves it directly. If not, Nginx passes the request to PHP-FPM via a Unix socket. PHP-FPM assigns the request to a worker process, which boots your Laravel application from the precompiled bytecode held in OPcache instead of recompiling the source files. Your application may query MySQL for data, read from Valkey for cached values, and dispatch jobs to the Valkey-backed queue. The response flows back through PHP-FPM to Nginx to the client.

A queued job flows differently: Your web request dispatches a job to the Valkey queue and returns immediately. A Supervisor-managed queue worker picks up the job, processes it (which may involve database queries, API calls, or other work), and marks it as complete. If the job fails, it is retried according to your configuration.
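The retry behaviour can be sketched as a simple worker loop. This is a simplified Python model of what --tries=3 means, not Laravel's actual worker code: a job that throws goes back on the queue until its attempts are used up, then it is recorded as failed. The function and job names are hypothetical.

```python
from collections import deque


def run_worker(jobs, handler, max_tries=3):
    """Process jobs in order; requeue failures until max_tries is reached."""
    queue = deque((job, 1) for job in jobs)
    completed, failed = [], []
    while queue:
        job, attempt = queue.popleft()
        try:
            handler(job)
            completed.append(job)
        except Exception:
            if attempt < max_tries:
                queue.append((job, attempt + 1))  # retry later
            else:
                failed.append(job)                # out of attempts
    return completed, failed


attempts = {}


def flaky_handler(job):
    """Hypothetical handler: one job fails once, another always fails."""
    attempts[job] = attempts.get(job, 0) + 1
    if job == "always-fails":
        raise RuntimeError("permanent failure")
    if job == "fails-once" and attempts[job] == 1:
        raise RuntimeError("temporary failure")


completed, failed = run_worker(["ok", "fails-once", "always-fails"], flaky_handler)
```

In a real application, jobs that exhaust their tries end up in Laravel's failed jobs store, where they can be inspected and retried manually.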

Scheduled tasks are triggered by a single cron entry that runs php artisan schedule:run every minute. Laravel's scheduler then determines which commands need to execute based on their configured frequency.
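The sketch below models that decision in Python. It is deliberately reduced: it only evaluates the minute and hour fields of a cron expression and supports just "*", "*/n", and plain numbers, while Laravel evaluates full cron expressions. The command names in the sample schedule are hypothetical.

```python
from datetime import datetime


def field_matches(field, value):
    """Match one cron field against a value ('*', '*/n', or a number)."""
    if field == "*":
        return True
    if field.startswith("*/"):
        return value % int(field[2:]) == 0
    return int(field) == value


def due_commands(schedule, now):
    """Return the commands whose minute and hour fields match 'now'."""
    due = []
    for command, expression in schedule.items():
        minute, hour = expression.split()[:2]
        if field_matches(minute, now.minute) and field_matches(hour, now.hour):
            due.append(command)
    return due


# Hypothetical schedule: every minute, every five minutes, daily at 08:00.
schedule = {
    "metrics:collect": "* * * * *",
    "cache:warm": "*/5 * * * *",
    "reports:send": "0 8 * * *",
}

at_eight = due_commands(schedule, datetime(2025, 1, 1, 8, 0))
at_eight_oh_three = due_commands(schedule, datetime(2025, 1, 1, 8, 3))
```

Because the cron entry fires every minute, adding a new scheduled command never requires touching the server's crontab again.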

Scaling Beyond a Single Server

The single App Server stack is perfect for getting started and can handle a surprising amount of traffic. But when you outgrow a single server, Deploynix supports splitting your stack across multiple specialized servers:

- Web Servers handle HTTP requests and run PHP-FPM
- Database Servers are dedicated to running MySQL, MariaDB, or PostgreSQL
- Cache Servers run Valkey independently
- Worker Servers process queued jobs
- Load Balancers distribute traffic across multiple web servers using Round Robin, Least Connections, or IP Hash methods

This separation allows you to scale each component independently based on where your bottlenecks are.
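For readers unfamiliar with the three load balancing methods, here is a minimal Python sketch of how each one picks a web server. It illustrates the ideas only. The hashing in particular is an assumption and not the function any specific load balancer uses, and the server names are hypothetical.

```python
import hashlib
from itertools import cycle


def round_robin(servers):
    """Hand out servers in a repeating, fixed order."""
    return cycle(servers)


def least_connections(active_connections):
    """Pick the server currently handling the fewest connections."""
    return min(active_connections, key=active_connections.get)


def ip_hash(client_ip, servers):
    """Map a client IP to the same server every time (sticky routing)."""
    digest = hashlib.sha256(client_ip.encode()).digest()
    return servers[int.from_bytes(digest[:8], "big") % len(servers)]


servers = ["web1", "web2", "web3"]  # hypothetical web servers

rotation = round_robin(servers)
first_four = [next(rotation) for _ in range(4)]  # web1, web2, web3, web1
least_busy = least_connections({"web1": 12, "web2": 3, "web3": 7})  # web2
sticky = ip_hash("203.0.113.7", servers)
```

Round Robin is the simplest choice, Least Connections copes better with uneven request durations, and IP Hash helps when a client should keep landing on the same server.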

Customization and Tuning

While the defaults work well for most applications, Deploynix does not lock you into a black box. You have full SSH access to your servers and can customize any configuration file. The web terminal in your dashboard provides instant access without needing a local SSH client.

Common customizations include adjusting PHP-FPM pool sizes for high-traffic applications, tuning MySQL's InnoDB settings for write-heavy workloads, increasing Valkey's memory allocation for cache-heavy applications, and modifying Nginx configurations for specific routing requirements.

Conclusion

A production-ready Laravel stack is more than just "a server with PHP installed." It is a carefully orchestrated set of components, each optimized for its role and configured to work together seamlessly. Deploynix provisions this entire stack in minutes, with sensible defaults that cover the vast majority of Laravel applications.

You get the performance of a hand-tuned server without the hours of configuration and the risk of security oversights. And when you need to customize, everything is accessible and transparent.

Provision your first production server at deploynix.io and see the difference a properly configured stack makes.

DEV Community

https://dev.to/deploynix/setting-up-a-production-ready-laravel-stack-nginx-php-84-mysql-valkey-supervisor-15li

Conversation starters

Daily AI Digest

Get the top 5 AI stories delivered to your inbox every morning.

More about

availableversionopen-source

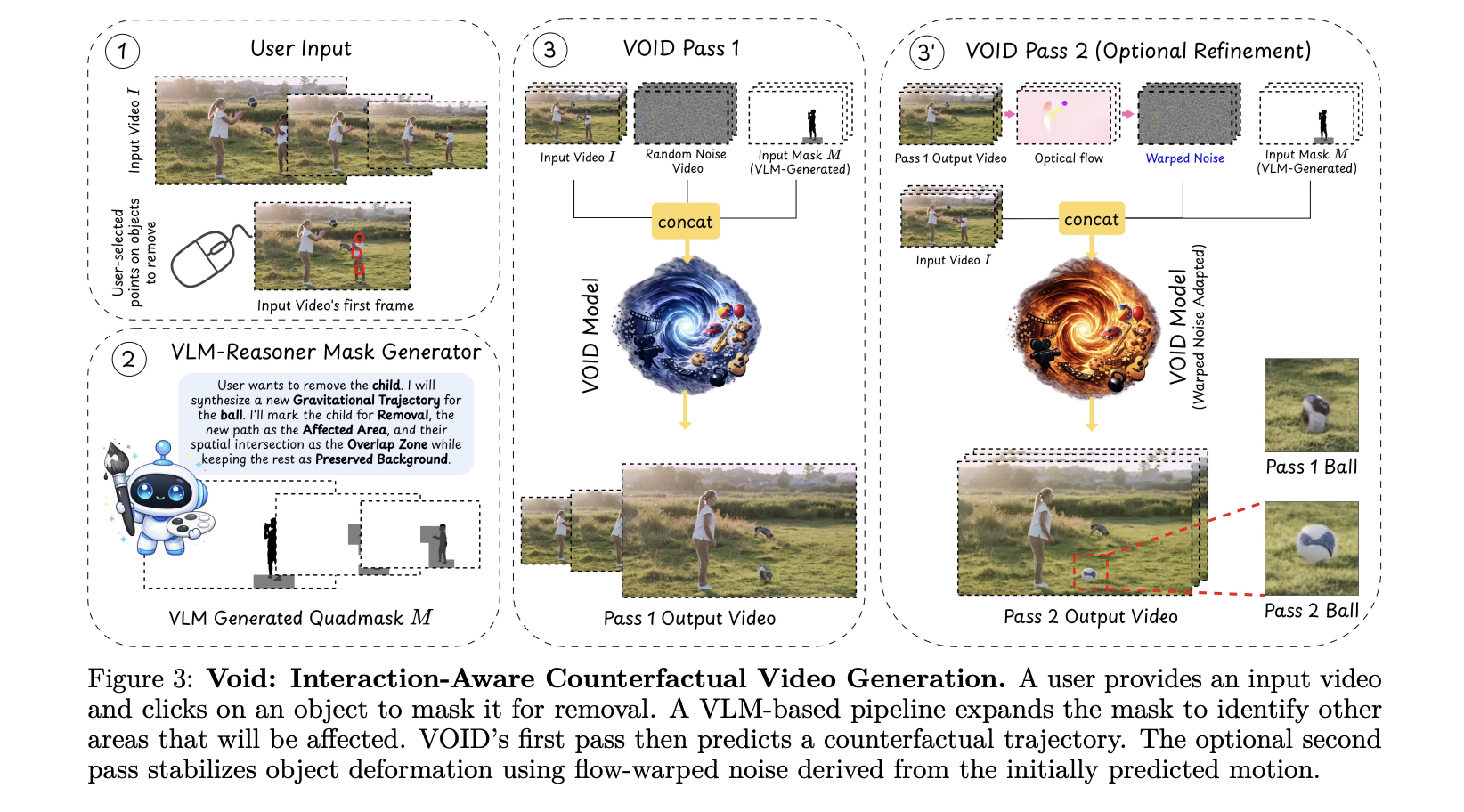

Netflix AI Team Just Open-Sourced VOID: an AI Model That Erases Objects From Videos — Physics and All

Video editing has always had a dirty secret: removing an object from footage is easy; making the scene look like it was never there is brutally hard. Take out a person holding a guitar, and you re left with a floating instrument that defies gravity. Hollywood VFX teams spend weeks fixing exactly this kind of problem. [ ] The post Netflix AI Team Just Open-Sourced VOID: an AI Model That Erases Objects From Videos — Physics and All appeared first on MarkTechPost .

Sharing Two Open-Source Projects for Local AI & Secure LLM Access 🚀

Hey everyone! I’m finally jumping into the dev.to community. To kick things off, I wanted to share two tools I’ve been developing at the University of Jaén that tackle two common headaches in the AI space: running out of VRAM, and keeping your API chats truly private. 🦥 Quansloth: TurboQuant Local AI Server The Problem: Standard LLM inference hits a "Memory Wall" with long documents. As context grows, your GPU runs out of memory (OOM) and crashes. The Solution: Quansloth is a fully private, air-gapped AI server that brings elite KV cache compression to consumer hardware. By bridging a Gradio Python frontend with a highly optimized llama.cpp CUDA backend, it prevents GPU crashes and lets you run massive contexts on a budget. Key Features: 75% VRAM Savings: Based on Google's TurboQuant (ICL

Day 61 of #100DaysOfCode — Python Refresher Part 1

When I started my #100DaysOfCode journey, I began with frontend development using React, then moved into backend development with Node.js and Express. After that, I explored databases to understand how data is stored and managed, followed by building full-stack applications with Next.js. It is now time to start learning Python, not from scratch, but as a refresher to strengthen my fundamentals and expand my backend skillset. Learning Python strengthens my core programming skills and offers a new perspective beyond JavaScript. It aligns with backend development, data handling, and automation, allowing me to build on my existing knowledge and become a more versatile developer. Today, for Day 61, I focused on revisiting the core building blocks of Python. Core Syntax Variables Data Types Vari

Knowledge Map

Connected Articles — Knowledge Graph

This article is connected to other articles through shared AI topics and tags.

More in Products

🦀 Rust Foundations — The Stuff That Finally Made Things Click

"Rust compiler and Clippy are the biggest tsunderes — they'll shout at you for every small mistake, but in the end… they just want your code to be perfect." Why I Even Started Rust I didn't pick Rust out of curiosity or hype. I had to. I'm working as a Rust dev at Garden Finance , where I built part of a Wallet-as-a-Service infrastructure. Along with an Axum backend, we had this core Rust crate ( standard-rs ) handling signing and broadcasting transactions across: Bitcoin EVM chains Sui Solana Starknet And suddenly… memory safety wasn't "nice to have" anymore. It was everything. Rust wasn't just a language — it was a guarantee . But yeah… in the beginning? It felt like the compiler hated me :( So I'm writing this to explain Rust foundations in the simplest way possible — from my personal n

Why Standard HTTP Libraries Are Dead for Web Scraping (And How to Fix It)

If you are building a data extraction pipeline in 2026 and your core network request looks like Ruby’s Net::HTTP.get(URI(url)) or Python's requests.get(url) , you are already blocked. The era of bypassing bot detection by rotating datacenter IPs and pasting a fake Mozilla/5.0 User-Agent string is long gone. Modern Web Application Firewalls (WAFs) like Cloudflare, Akamai, and DataDome don’t just read your headers anymore—they interrogate the cryptographic foundation of your connection. Here is a deep dive into why standard HTTP libraries actively sabotage your scraping infrastructure, and how I built a polyglot sidecar architecture to bypass Layer 4–7 fingerprinting entirely. The Fingerprint You Didn’t Know You Had When your code opens a secure connection to a server, long before the first

Tired of Zillow Blocking Scrapers — Here's What Actually Works in 2026

If you've ever tried scraping Zillow with BeautifulSoup or Selenium, you know the pain. CAPTCHAs, IP bans, constantly changing HTML selectors, headless browser detection — it's an arms race you're not going to win. I spent way too long fighting anti-bot systems before switching to an API-based approach. This post walks through how to pull Zillow property data, search listings, get Zestimates, and export everything to CSV/Excel — all with plain Python and zero browser automation. What You'll Need Python 3.7+ The requests library ( pip install requests ) A free API key from RealtyAPI That's it. No Selenium. No Playwright. No proxy rotation. Getting Started: Your First Property Lookup Let's start simple — get full property details for a single address: import requests url = " https://zillow.r

Why Gaussian Diffusion Models Fail on Discrete Data?

arXiv:2604.02028v1 Announce Type: new Abstract: Diffusion models have become a standard approach for generative modeling in continuous domains, yet their application to discrete data remains challenging. We investigate why Gaussian diffusion models with the DDPM solver struggle to sample from discrete distributions that are represented as a mixture of delta-distributions in the continuous space. Using a toy Random Hierarchy Model, we identify a critical sampling interval in which the density of noisified data becomes multimodal. In this regime, DDPM occasionally enters low-density regions between modes producing out-of-distribution inputs for the model and degrading sample quality. We show that existing heuristics, including self-conditioning and a solver we term q-sampling, help alleviate

Discussion

Sign in to join the discussion

No comments yet — be the first to share your thoughts!