Report claims Arm chips will power 90% of AI servers based on custom processors in 2029 — x86 and RISC-V on the outside looking in

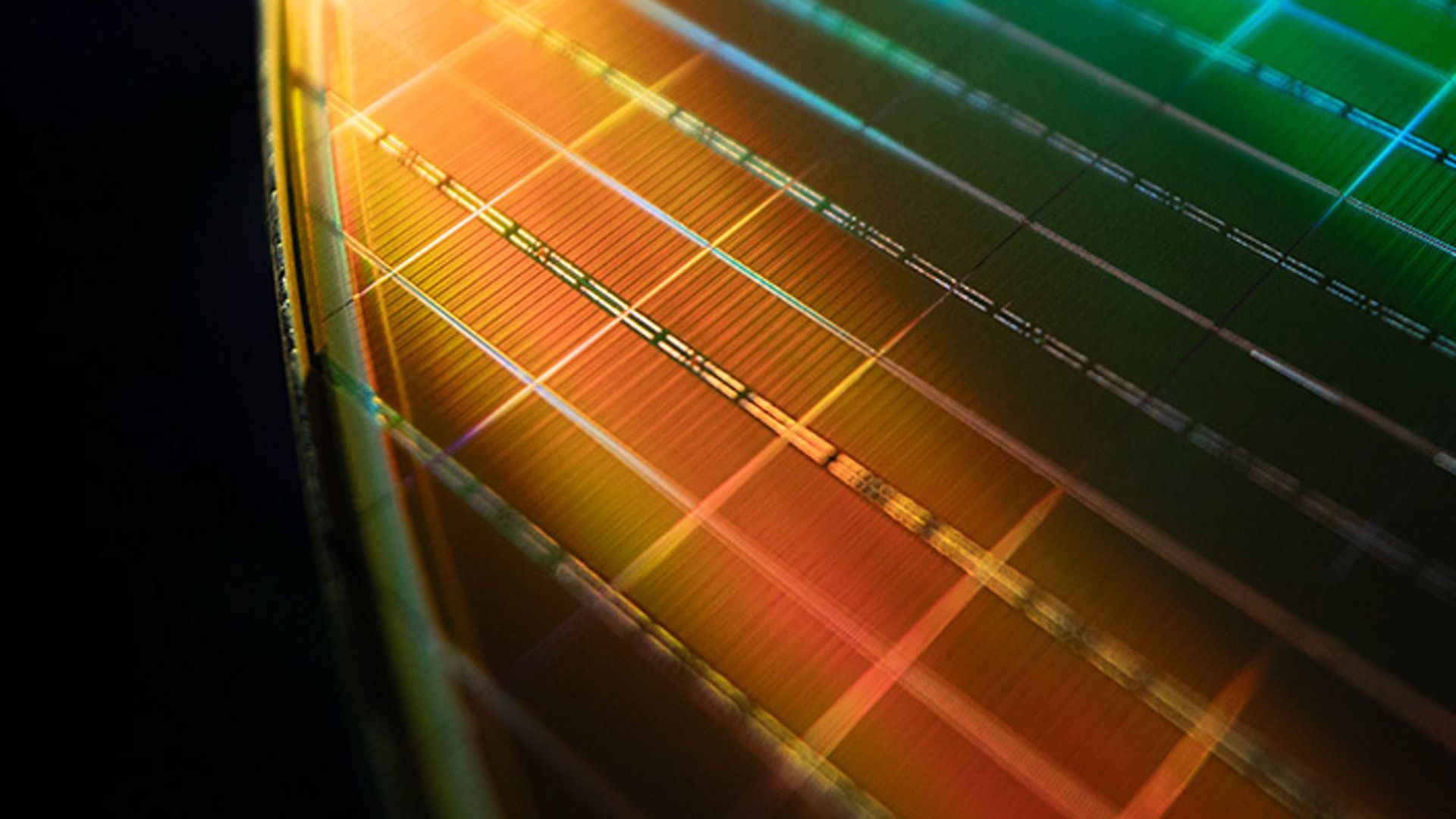

(Image credit: Micron)

Virtually all hyperscale cloud service providers (CSPs), as well as some leading developers of AI accelerators, now run custom-silicon programs that cover not only AI accelerators but also custom general-purpose CPUs, usually based on the Arm instruction set architecture (ISA). By 2029, Arm-based chips will power 90% of AI servers built on custom processors, leaving x86 and RISC-V with around 10%, according to Counterpoint Research.

x86 processors from AMD and Intel have long dominated general-purpose servers, which is why most AI servers initially relied on EPYC and Xeon processors. However, Arm-based custom CPUs tailored for specific data-intensive AI workloads are more cost- and power-efficient. Furthermore, because AI workloads are still emerging, backward compatibility with x86 is not vital. To that end, AWS, Google, and Microsoft have developed proprietary Arm-based processors for their own workloads, whereas Meta is the alpha customer for Arm's own AGI processor.

Anton Shilov is a contributing writer at Tom’s Hardware. Over the past couple of decades, he has covered everything from CPUs and GPUs to supercomputers and from modern process technologies and latest fab tools to high-tech industry trends.
