Preview tool helps makers visualize 3D-printed objects

By quickly generating aesthetically accurate previews of fabricated objects, the VisiPrint system could make prototyping faster and less wasteful.

Designers, makers, and others often use 3D printing to rapidly prototype a range of functional objects, from movie props to medical devices. Accurate print previews are essential so users know a fabricated object will perform as expected.

But previews generated by most 3D-printing software focus on function rather than aesthetics. A printed object may end up with a different color, texture, or shading than the user expected, resulting in multiple reprints that waste time, effort, and material.

To help users envision how a fabricated object will look, researchers from MIT and elsewhere developed an easy-to-use preview tool that puts appearance first.

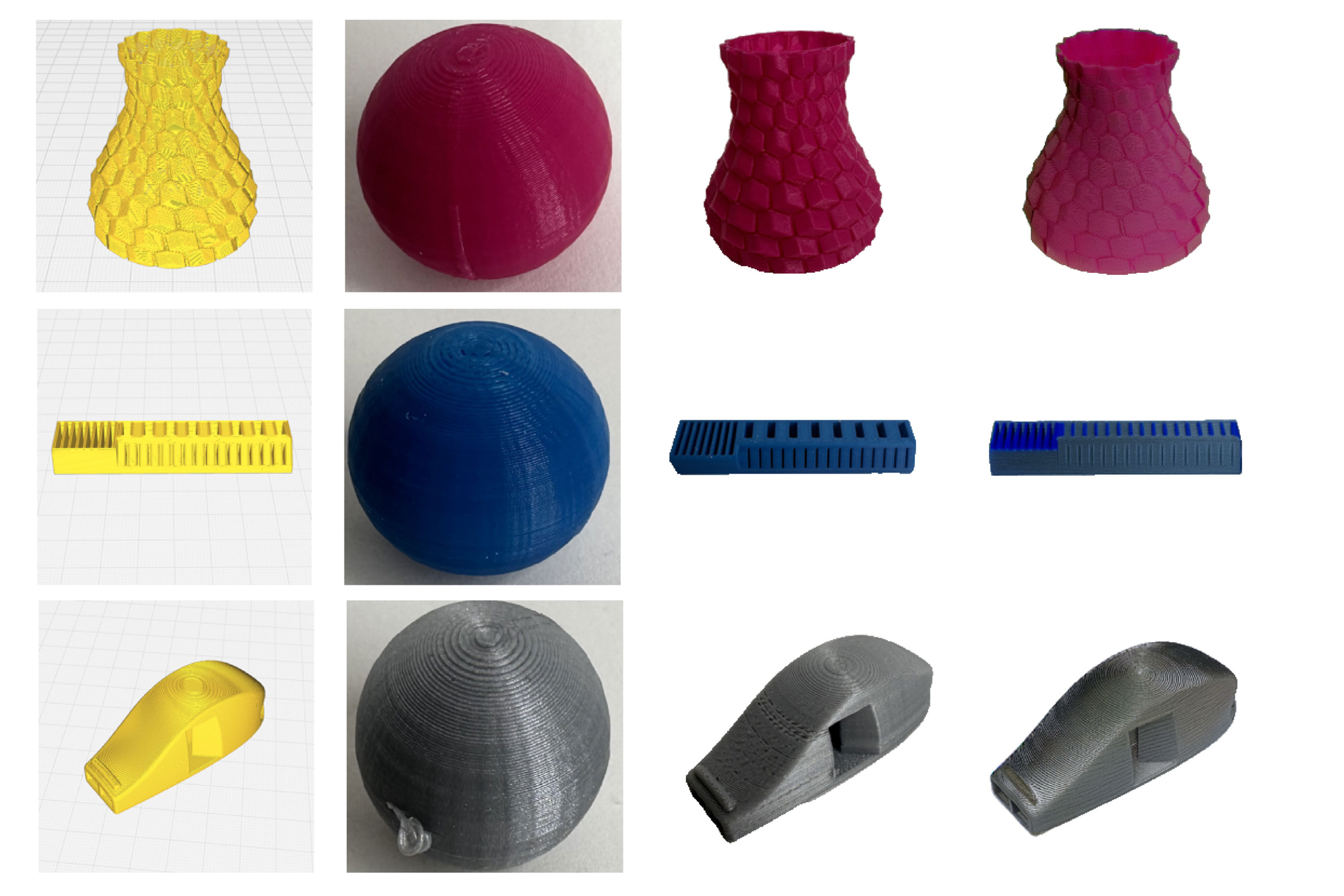

Users upload a screenshot of the object from their 3D-printing software, along with a single image of the print material. From these inputs, the system automatically generates a rendering of how the fabricated object is likely to look.

The artificial intelligence-powered system, called VisiPrint, is designed to work with a range of 3D-printing software and can work from an example image of any material. It considers not only the color of the material, but also gloss, translucency, and how nuances of the fabrication process affect the object’s appearance.

Such aesthetics-focused previews could be especially useful in areas like dentistry, by helping clinicians ensure temporary crowns and bridges match the appearance of a patient’s teeth, or in architecture, to aid designers in assessing the visual impact of models.

“3D printing can be a very wasteful process. Some studies estimate that as much as a third of the material used goes straight to the landfill, often from prototypes the user ends up discarding. To make 3D printing more sustainable, we want to reduce the number of tries it takes to get the prototype you want. The user shouldn’t have to try out every printing material they have before they settle on a design,” says Maxine Perroni-Scharf, an electrical engineering and computer science (EECS) graduate student and lead author of a paper on VisiPrint.

She is joined on the paper by Faraz Faruqi, a fellow EECS graduate student; Raul Hernandez, an MIT undergraduate; SooYeon Ahn, a graduate student at the Gwangju Institute of Science and Technology; Szymon Rusinkiewicz, a professor of computer science at Princeton University; William Freeman, the Thomas and Gerd Perkins Professor of EECS at MIT and a member of the Computer Science and Artificial Intelligence Laboratory (CSAIL); and senior author Stefanie Mueller, an associate professor of EECS and Mechanical Engineering at MIT, and a member of CSAIL. The research will be presented at the ACM CHI Conference on Human Factors in Computing Systems.

Accurate aesthetics

The researchers focused on fused deposition modeling (FDM), the most common type of 3D printing. In FDM, a filament of print material is melted and then squirted through a nozzle to fabricate an object one layer at a time.

Generating accurate aesthetic previews is challenging because the melting and extrusion process can change the appearance of a material, as can the height of each deposited layer and the path the nozzle follows during fabrication.

VisiPrint uses two AI models that work together to overcome those challenges.

The VisiPrint preview is based on two inputs: a screenshot of the digital design from a user’s 3D-printing software (called “slicer” software), and an image of the print material, which can be taken from an online source or captured from a printed sample.

From these inputs, a computer vision model extracts features from the material sample that are important for the object’s appearance.

It feeds those features to a generative AI model that computes the geometry and structure of the object, while incorporating the so-called “slicing” pattern the nozzle will follow as it extrudes each layer.

The key to the researchers’ approach is a special conditioning method. This involves carefully adjusting the inner workings of the model to guide it, so it follows the slicing pattern and obeys the constraints of the 3D-printing process.

Their conditioning method utilizes a depth map that preserves the shape and shading of the object, along with a map of the edges that reflects the internal contours and structural boundaries.

“If you don’t have the right balance of these two things, you could end up with bad geometry or an incorrect slicing pattern. We had to be careful to combine them in the right way,” Perroni-Scharf says.
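The article does not say how VisiPrint weights its two guidance signals internally. As a rough, hypothetical illustration of the balance Perroni-Scharf describes, the Python sketch below derives a crude edge map from a toy depth map and blends the two with adjustable weights. The helpers `edge_map` and `blend_conditioning`, the threshold, and the weights are all assumptions made for illustration. Diffusion-based generators typically inject guidance maps through the model's internal layers rather than averaging the images directly, so this shows only the idea of trading one signal off against the other.

```python
from typing import List

# A 2D grid of values in [0, 1]; a stand-in for a grayscale image.
Grid = List[List[float]]


def edge_map(depth: Grid, threshold: float = 0.1) -> Grid:
    """Hypothetical helper: mark pixels where depth changes sharply.

    Uses forward differences (clamped at the border) as a crude edge detector.
    """
    h, w = len(depth), len(depth[0])
    edges = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            dx = depth[y][min(x + 1, w - 1)] - depth[y][x]
            dy = depth[min(y + 1, h - 1)][x] - depth[y][x]
            # Gradient magnitude above the threshold counts as an edge.
            if (dx * dx + dy * dy) ** 0.5 > threshold:
                edges[y][x] = 1.0
    return edges


def blend_conditioning(depth: Grid, edges: Grid,
                       depth_weight: float, edge_weight: float) -> Grid:
    """Hypothetical helper: per-pixel weighted average of the two guidance maps."""
    total = depth_weight + edge_weight
    if total <= 0:
        raise ValueError("weights must sum to a positive value")
    return [
        [(depth_weight * d + edge_weight * e) / total for d, e in zip(d_row, e_row)]
        for d_row, e_row in zip(depth, edges)
    ]


# Toy 4x4 depth map: a raised square block on a flat base.
depth = [
    [0.0, 0.0, 0.0, 0.0],
    [0.0, 1.0, 1.0, 0.0],
    [0.0, 1.0, 1.0, 0.0],
    [0.0, 0.0, 0.0, 0.0],
]
edges = edge_map(depth)
guidance = blend_conditioning(depth, edges, depth_weight=0.7, edge_weight=0.3)
for row in guidance:
    print(row)
```

In this toy setup, raising `edge_weight` emphasizes contours and boundaries, while raising `depth_weight` emphasizes overall shape and shading; leaning too far either way loses one of the two cues, which mirrors the trade-off in the quote.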

A user-focused system

The team also produced an easy-to-use interface where one can upload the required images and evaluate the preview.

The VisiPrint interface enables more advanced makers to adjust multiple settings, such as the influence of certain colors on the final appearance.

In the end, the aesthetic preview is intended to complement the functional preview generated by slicer software, since VisiPrint does not estimate printability, mechanical feasibility, or likelihood of failure.

To evaluate VisiPrint, the researchers conducted a user study that asked participants to compare the system to other approaches. Nearly all participants said it produced a better overall appearance and closer textural similarity to the printed objects.

In addition, the VisiPrint preview process took about a minute on average, which was more than twice as fast as any competing method.

“VisiPrint really shined when compared to other AI interfaces. If you give a more general AI model the same screenshots, it might randomly change the shape or use the wrong slicing pattern because it had no direct conditioning,” she says.

In the future, the researchers want to address artifacts that can occur when model previews have extremely fine details. They also want to add features that allow users to optimize parts of the printing process beyond the color of the material.

“It is important to think about the way that we fabricate objects. We need to continue striving to develop methods that reduce waste. To that end, this marriage of AI with the physical making process is an exciting area of future work,” Perroni-Scharf says.

“‘What you see is what you get’ has been the main thing that made desktop publishing ‘happen’ in the 1980s, as it allowed users to get what they wanted at first try. It is time to get WYSIWYG for 3D printing as well. VisiPrint is a great step in this direction,” says Patrick Baudisch, a professor of computer science at the Hasso Plattner Institute, who was not involved with this work.

This research was funded, in part, by an MIT Morningside Academy for Design Fellowship and an MIT MathWorks Fellowship.
