Nvidia Intros Data Factory, Robotics Models in Physical AI Push

The releases aim to solidify the AI chip giant's standing in the physical AI sector.

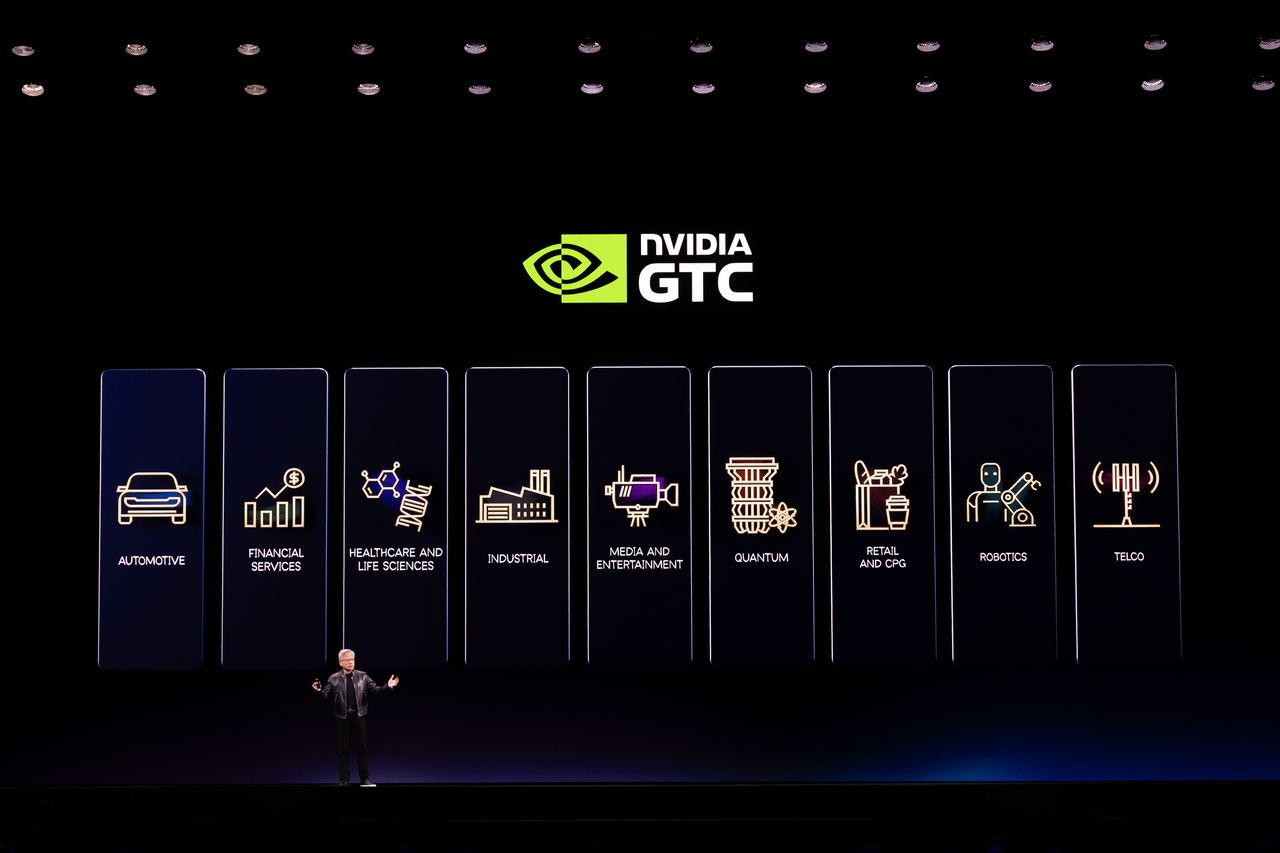

Nvidia CEO Jensen Huang at GTC conference. Benjamin Fanjoy via Getty Images

Nvidia on Monday unveiled a spate of new features and models to accelerate uptake and development of physical AI.

The burgeoning sector is defined as systems that enable machines to more intelligently respond to their physical environments.

Introduced at the vendor's GTC conference in San Jose, the releases focus primarily on Nvidia’s Physical AI Data Factory, an open reference architecture designed to transform real-world data into large-scale training datasets.

Rev Lebaredian, vice president of Omniverse and simulation technology at Nvidia, said during a pre-briefing that the system uses the company’s Cosmos world models and coding agents.

Specifically, the system is built around three components: Cosmos Curator, which processes datasets; Cosmos Transfer, which generates scenarios to expand the datasets; and Cosmos Evaluator, which verifies generated data before it is used for training.

Together, the components automate the data generation process for robotics developers.
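As a rough illustration of that curate, expand and verify flow, the three stages can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions, not Nvidia's implementation: the `Clip` type, the function names, the quality scores and the thresholds are all hypothetical and do not correspond to any Cosmos API.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List


@dataclass(frozen=True)
class Clip:
    """Hypothetical training sample; not an Nvidia data type."""
    clip_id: str
    quality: float          # assumed score between 0.0 and 1.0
    synthetic: bool = False


def curate(clips: Iterable[Clip], min_quality: float = 0.5) -> List[Clip]:
    """Stage 1 (curation): keep only clips at or above a quality threshold."""
    return [c for c in clips if c.quality >= min_quality]


def expand(clips: List[Clip], variants: int = 2) -> List[Clip]:
    """Stage 2 (generation): derive synthetic variants of each curated clip.

    Each variant is given a lower assumed quality score than its source.
    """
    generated = [
        Clip(f"{c.clip_id}-v{i}", c.quality * (1 - 0.2 * i), synthetic=True)
        for c in clips
        for i in range(1, variants + 1)
    ]
    return clips + generated


def evaluate(clips: List[Clip], accept: Callable[[Clip], bool]) -> List[Clip]:
    """Stage 3 (evaluation): drop generated clips that fail verification."""
    return [c for c in clips if not c.synthetic or accept(c)]


def run_pipeline(raw: Iterable[Clip]) -> List[Clip]:
    """Chain the three stages into a single training-set builder."""
    curated = curate(raw)
    expanded = expand(curated)
    return evaluate(expanded, accept=lambda c: c.quality >= 0.6)


if __name__ == "__main__":
    dataset = run_pipeline([Clip("a", 0.9), Clip("b", 0.3)])
    print([c.clip_id for c in dataset])
```

In this toy version, the low-quality clip is dropped during curation, and the weaker of the two generated variants is rejected during evaluation, so only verified data reaches the training set.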

“It’s a data factory designed specifically for physical AI,” Lebaredian said. “Cosmos unifies and manages all three stages, reducing manual work so developers can focus on building models.”

The platform will initially be available on Microsoft’s Azure cloud platform. Nvidia said companies including Field AI, Hexagon Robotics, Milestone Systems, Skild AI, and TerraNine Robotics are early adopters.

Alongside the data architecture, Nvidia introduced Cosmos-3, a new world model that combines vision, reasoning and prediction to generate robot behaviors.

The new Cosmos platform also includes what Nvidia described as the largest open video dataset for physical AI, along with frameworks for curating and evaluating large-scale video data.

A typical challenge in physical AI, according to Lebaredian, is that real-world training data is difficult to collect at scale due to the unpredictability of physical environments.

“In the past, real-world data was the primary mode of training,” he said. “But the real world is diverse, unpredictable and full of edge cases. You simply cannot manually capture enough data to train for all of them.”

Instead, developers are increasingly turning to world models trained on internet-scale video and human demonstration data -- enabling robotic training on a far greater scale than previously possible.

To stay ahead of this shift, Nvidia also rolled out early access to its AI-enabled video search and summarization tool, Metropolis VSS Blueprint. The system enables developers to build agents that analyze and act on massive streams of video data from edge to cloud.

Alongside the product launches, Nvidia announced a new partnership with T-Mobile. The companies are working to integrate physical AI applications into networks, bringing these agents to edge applications.

Looking Ahead

The moves reflect Nvidia’s growing drive into physical AI, which has become something of a buzzword as developers seek machines with elevated intelligence and perception capabilities.

“Autonomous vehicles represented the first wave of physical AI, but much more is coming down the pipeline,” Lebaredian said. “Soon we will have billions of AI agents running on billions of devices. The world’s industries will be transformed by physical AI and AI-driven physics.”

He identified the rise of humanoid robots as a key catalyst for market growth, with demand for physical AI systems expected to rise further.

“Today, roughly three million robots power the world’s industries,” Lebaredian said. “But the next generation of humanoid robots is now arriving, with deployments expected to grow nearly tenfold by 2026.”

“In this context, our models and frameworks are designed to support both existing and future robot platforms,” he added. “These systems will be more accurate, more lightweight and easier to deploy.”

About the Author

Contributing Writer

Scarlett Evans is a freelance writer with a focus on emerging technologies and the minerals industry. Previously, she served as assistant editor at IoT World Today, where she specialized in robotics and smart city technologies. Scarlett also has a background in the mining and resources sector, with experience at Mine Australia, Mine Technology and Power Technology. She joined Informa in April 2022 before transitioning to freelance work.

AI Business

https://aibusiness.com/robotics/nvidia-intros-data-factory-robotics-models-for-physical-ai
