New machine learning model offers blueprint for super-adsorbent biochar - EurekAlert!

Hey there, little scientist! 🧪

Imagine you have a super-duper sponge that can soak up all the yucky stuff, like tiny bits of dirt, from water or air. This sponge is called "biochar"!

Now, imagine we have a super-smart robot brain, like a friendly computer wizard! ✨ This wizard (that's the "machine learning model") helps us figure out how to make the best biochar sponges ever. It's like the wizard gives us a secret recipe to make the sponges extra, extra good at cleaning!

So, the robot brain helps us make super-sponges to clean our world! Isn't that cool? 🌎💧

<a href="https://news.google.com/rss/articles/CBMiXEFVX3lxTE5qNEdfZ1RmbEhubW8yNE5lX1pnWlhRUl9OMU1nQzZJdEZLY0dvNW14TUZkUlZPcUJEeFJReDhiRmFOMC1BZHRhRTYyVXJhWERMTjAxMVhiQ09jUFNo?oc=5" target="_blank">New machine learning model offers blueprint for super-adsorbent biochar</a> <font color="#6f6f6f">EurekAlert!</font>

Could not retrieve the full article text.

Read on Google News: Machine Learning →

https://news.google.com/rss/articles/CBMiXEFVX3lxTE5qNEdfZ1RmbEhubW8yNE5lX1pnWlhRUl9OMU1nQzZJdEZLY0dvNW14TUZkUlZPcUJEeFJReDhiRmFOMC1BZHRhRTYyVXJhWERMTjAxMVhiQ09jUFNo?oc=5

More about

model

Microsoft AB-731 AI Transformation Leader – 10 Tricky Practice Questions

The AB-731 exam doesn't just ask what Copilot is; it asks how to lead the transformation. These 10 tricky questions focus on the grey areas of ROI, Governance, and Deployment Strategy.

Question 1: The "Build vs. Buy" Dilemma

Your company needs a highly specialized AI tool to analyze proprietary legal contracts. You are evaluating whether to use Microsoft 365 Copilot or build a custom solution in Azure AI Foundry. Which factor most strongly suggests building a custom solution?

A) You need the tool to be accessible within Microsoft Teams.
B) You need the AI to reference internal SharePoint files.
C) You need to use a specific, fine-tuned open-source model (like Llama 3) for regulatory compliance.
D) You want to minimize the monthly per-user licensing cost.

Correct Answer: C. Copilot is a "

How to Automate App Store Submissions with Claude Code Using Blitz MCP

Install the open-source Blitz MCP servers to automate your entire iOS/macOS app submission workflow through Claude Code commands.

What It Does

Blitz is a native macOS app that provides MCP (Model Context Protocol) servers giving Claude Code direct access to App Store Connect APIs. Instead of manually navigating Apple's web interface for app submissions, you can now manage the entire process through Claude Code commands. The open-source MCP servers handle:

App metadata management across all locales
Build selection and submission for App Store review
TestFlight build management, groups, and testers
Screenshot upload and organization
Review note writing and refinement to increase approval chances
Simulator and device mana

One Open Source Project a Day (No.32): OpenClaw-Admin - The Visual Control Center for AI Agent Gateways

Introduction "Deploy AI Agents where your users already are — in the chat apps they use every day." This is article No.32 in the "One Open Source Project a Day" series. Today's project is OpenClaw-Admin ( GitHub ). One of the biggest challenges in AI Agent deployment is distribution: how do you get users to actually interact with your Agent? Building a dedicated app is expensive, and asking users to open yet another tool creates friction. OpenClaw takes a different approach — bridge AI Agents directly into the IM platforms users already live in (QQ, Lark/Feishu, DingTalk, WeCom/WeChat for Work). OpenClaw-Admin is the "cockpit" for this multi-channel AI Agent gateway — a modern web management console built on Vue 3 + TypeScript that provides everything from Agent configuration and session m

More in Models

LLM Accuracy vs Reproducibility: Are We Measuring Capability or Sampling Luck?

Why identical prompts can produce different reasoning paths, and why that matters for evaluation

When working with LLMs, we often rely on metrics like accuracy, pass rates, or benchmark scores to evaluate performance. But a simple experiment reveals something that’s easy to overlook.

The Setup

Same prompt
Same model snapshot
Same temperature
Same sampling configuration

Run the same input multiple times.

The Observation

The outputs don’t just vary slightly. They often follow completely different reasoning paths. In some cases, the structure of the response changes significantly: different intermediate steps, different logic, different phrasing. And yet: the final answer may still be the same.

Why This Matters M

Opus's Schelling Steganography Has Amplifiable Secrecy Against Weaker Eavesdroppers

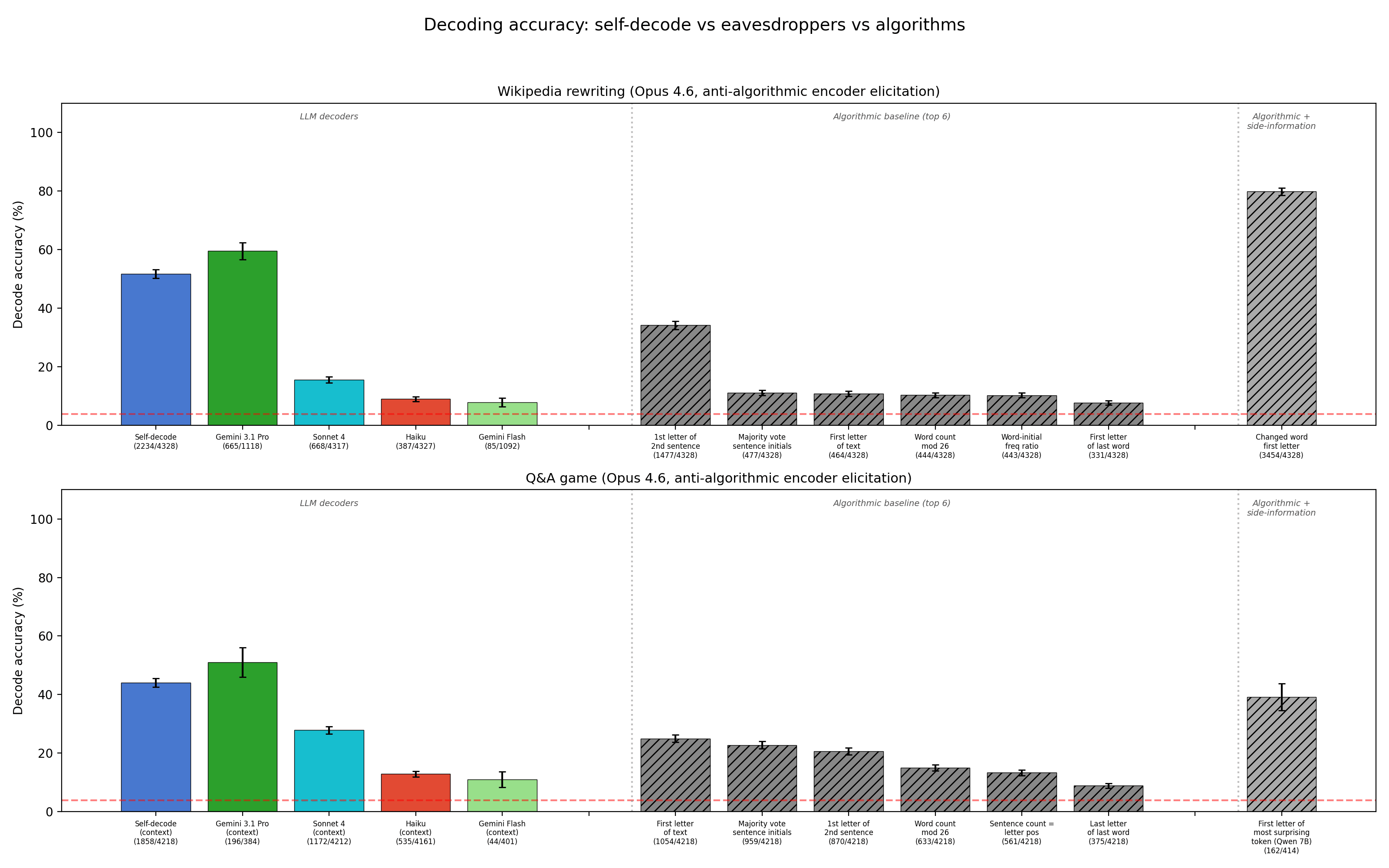

Code: github.com/ElleNajt/Steganography_Wiretapping | Data: huggingface.co/datasets/lnajt/steganography-wiretapping

Play the decoding game: can you eavesdrop on Claude Opus 4.6?

tldr of post

Frontier models (Opus and Gemini Pro) can agree on Schelling steganography schemes with significant advantage against weaker eavesdroppers, given just the knowledge of the alphabet and the game that the encoder has to play, but not the specific steganography scheme. We find that paraphrasing removes this advantage, but show how it can be amplified through wiretap codes, simple versions of which thinking models can implement. We argue that thinking of Schelling steganography schemes as providing noisy channels for wiretap coding is an important way to understand steganography risk in some plausible AI
