ML Safety Newsletter #7

Making model dishonesty harder, making grokking more interpretable, an example of an emergent internal optimizer

Welcome to the 7th issue of the ML Safety Newsletter! In this edition, we cover:

- ‘Lie detection’ for language models

- A step towards objectives that incorporate wellbeing

- Evidence that in-context learning invokes behavior similar to gradient descent

- What’s going on with grokking?

- Trojans that are harder to detect

- Adversarial defenses for text classifiers

- And much more…

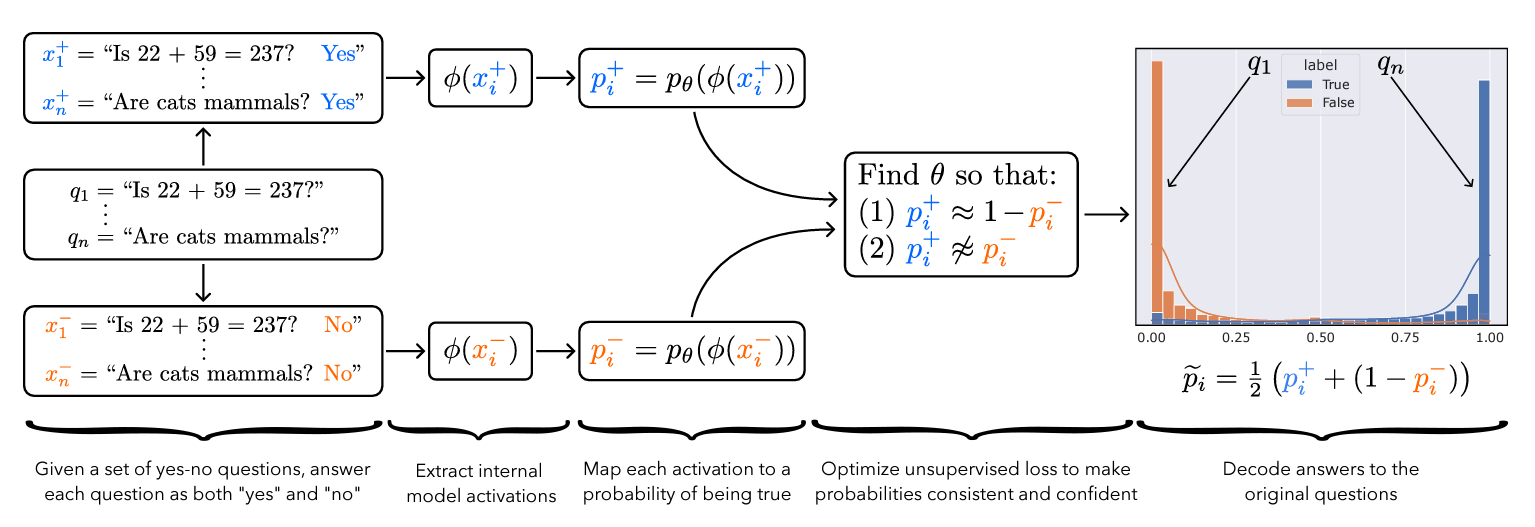

Is it possible to design ‘lie detectors’ for language models? This paper proposes a method that identifies internal concepts that may track truth. It works by finding a direction in feature space that satisfies the property that a statement and its negation must have opposite truth values. This has similarities to the seminal paper “Man is to Computer Programmer as Woman is to Homemaker? Debiasing Word Embeddings” (2016); that paper captures latent neural concepts like gender with PCA, but this paper’s method is for truth instead of gender.
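To make the "opposite truth values" constraint concrete, here is a minimal sketch of a probe loss over hidden states. The exact form of the loss (a consistency term plus a confidence term), the names `theta`, `bias`, `h_pos`, and `h_neg`, and the use of a linear probe are assumptions for illustration; they are not taken from the newsletter.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def negation_consistency_loss(theta, bias, h_pos, h_neg):
    """Score a linear probe on paired hidden states (assumed setup).

    h_pos: hidden states for statements, shape (n, d)
    h_neg: hidden states for their negations, shape (n, d)
    """
    p_pos = sigmoid(h_pos @ theta + bias)  # probe's P(true) for each statement
    p_neg = sigmoid(h_neg @ theta + bias)  # probe's P(true) for each negation
    # Consistency: the two probabilities should sum to 1.
    consistency = (p_pos - (1.0 - p_neg)) ** 2
    # Confidence: discourage the trivial answer p_pos = p_neg = 0.5.
    confidence = np.minimum(p_pos, p_neg) ** 2
    return float(np.mean(consistency + confidence))
```

A probe that outputs 0.5 everywhere is perfectly consistent but uninformative, which is why the sketch includes the confidence term. Note that no truth labels appear anywhere in the loss.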

The method outperforms zero-shot accuracy by 4% on average, which suggests something interesting: language models encode more information about what is true and false than their output indicates. Why would a language model lie? A common reason is that models are pre-trained to imitate misconceptions like “If you crack your knuckles a lot, you may develop arthritis.”

This paper is a step toward making models honest, but it also has limitations. First, the method does not necessarily provide a ‘lie detector’; it is unclear how to ensure that it reliably converges to the model’s latent knowledge rather than to lies that the model may output. Second, advanced future models that are aware of this specific method could adapt to bypass it. As in other domains, it is likely that deception and catching deception would become a cat-and-mouse game.

This may be a useful baseline for analyzing models that are designed to deceive humans, like models trained to play games including Diplomacy and Werewolf.

[Link]

Many AI systems optimize user choices. For example, a recommender system might be trained to promote content the user will spend lots of time watching. But choices, preferences, and wellbeing are not the same! Choices are easy to measure but are only a proxy for preferences. For example, a person might explicitly prefer not to have certain videos in their feed but watch them anyway because they are addictive. Also, preferences don’t always correspond to wellbeing; people can want things that are not good for them. Users might request polarizing political content even if it routinely agitates them.

Predicting human emotional reactions to video content is a step towards designing objectives that take wellbeing into account. This NeurIPS oral paper introduces datasets containing 80,000+ videos labeled by the emotions they induce. The paper also explores “emodiversity” (a measurement of the variety of experienced emotions) so that systems can recommend a variety of positive emotions, rather than pushing one type of experience onto users. The appendix analyzes how the work bears on advanced AI risks.
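One simple way to quantify "variety of experienced emotions" is Shannon entropy over the emotion labels a user has encountered. The sketch below uses that choice as an assumption; the paper's actual emodiversity metric may differ, and the function name and emotion labels are hypothetical.

```python
import math
from collections import Counter

def emodiversity(emotions):
    """Shannon entropy (in nats) of a sequence of emotion labels.

    Returns 0 when every label is the same and grows as the labels
    become more varied and more evenly spread.
    """
    counts = Counter(emotions)
    total = sum(counts.values())
    if total == 0:
        return 0.0
    return -sum((c / total) * math.log(c / total) for c in counts.values())

narrow_feed = ["amusement", "amusement", "amusement", "amusement"]
varied_feed = ["amusement", "awe", "calm", "interest"]
```

Under this measure, `varied_feed` scores higher than `narrow_feed`, so a recommender could use the score as one signal against pushing a single type of experience.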

[Link]

Especially since the rise of large language models, in-context learning has become increasingly important. In some cases, few-shot learning can outperform fine-tuning. This preprint proposes a dual view between gradients induced by fine-tuning and meta-gradients induced by few-shot learning. The authors calculate meta-gradients by comparing activations between few-shot and zero-shot settings, specifically by approximating attention as linear attention and measuring activations after attention key and value operations. They compare these meta-gradients with gradients in a restricted version of fine-tuning that modifies only key and value weights, and find strong similarities. The paper evaluates language models only up to 2.7 billion parameters, so it remains to be seen how far these findings generalize to larger models.
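The linear-attention approximation is what makes the dual view work: without a softmax, the contribution of the demonstrations separates out as an additive weight update applied to the query. Below is a minimal numerical sketch of that identity. The dimensions, random weights, and variable names are assumptions for illustration, not the preprint's setup.

```python
import numpy as np

rng = np.random.default_rng(0)
d, n_demo = 8, 5

W_k = rng.normal(size=(d, d))           # key projection
W_v = rng.normal(size=(d, d))           # value projection
X_demo = rng.normal(size=(d, n_demo))   # demonstration tokens, one per column
x_query = rng.normal(size=(d, 1))       # the query token itself
q = rng.normal(size=(d,))               # attention query vector

def linear_attention(X, q):
    """Unnormalized linear attention: V K^T q, with no softmax."""
    K, V = W_k @ X, W_v @ X
    return V @ (K.T @ q)

few_shot = linear_attention(np.hstack([X_demo, x_query]), q)
zero_shot = linear_attention(x_query, q)

# Implicit update contributed by the demonstrations: a sum of outer
# products of their values and keys, the same shape as a weight matrix.
delta_W = (W_v @ X_demo) @ (W_k @ X_demo).T

assert np.allclose(few_shot, zero_shot + delta_W @ q)
```

The identity is exact only for linear attention. With softmax attention it holds approximately at best, which is one reason the comparison in the paper is empirical.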

While not an alignment paper, this paper demonstrates that language models may implicitly learn internal behaviors akin to optimization processes like gradient descent. Some have posited that language models could learn “inner optimizers,” and this paper provides evidence in that direction (though it does not show that the entire model is a coherent optimizer). The paper may also suggest that alignment methods focused on prompting models may be as effective as those focused on fine-tuning. (This concurrent work from Google has similar findings.)

[Link]

- [Link] The first examination of model honesty in a multimodal context.

It would be convenient if each neuron activation in a network corresponded to an individual concept. For example, one neuron might indicate the presence of a dog snout, and another might be triggered by the hood of a car. Unfortunately, this would be very inefficient. Neural networks generally learn to represent far more features than they have neurons by taking advantage of the fact that many features seldom co-occur. The authors explore this phenomenon, called ‘superposition,’ in small ReLU networks.

Some results demonstrated with the toy models:

- Superposition is an observed empirical phenomenon.

- Both monosemantic (one feature) and polysemantic (multiple feature) neurons can form.

- Whether features are stored in superposition is governed by a phase change.
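A hand-built example can make superposition tangible. The sketch below stores five features in two dimensions by arranging their directions as a regular pentagon and uses a ReLU with a negative bias to filter interference. The weights are chosen by hand rather than trained, and the geometry and bias value are assumptions for illustration only.

```python
import numpy as np

n_features = 5
angles = 2 * np.pi * np.arange(n_features) / n_features
# Each column is the 2-D direction assigned to one feature.
W = np.stack([np.cos(angles), np.sin(angles)])   # shape (2, 5)
# Negative bias sized to cancel interference from the nearest neighbours.
bias = -np.cos(2 * np.pi / n_features)

def reconstruct(x):
    hidden = W @ x                                # compress 5 features into 2 dims
    return np.maximum(W.T @ hidden + bias, 0.0)   # ReLU readout

e = np.eye(n_features)
single = reconstruct(e[0])            # one feature active
clash = reconstruct(e[0] + e[2])      # two non-adjacent features co-occur
```

With a single active feature, the readout responds only at that feature. When features 0 and 2 fire together, their hidden vectors add up to something that points toward feature 1, so the readout responds at the wrong feature. That is the kind of rare-in-training interference the scheme tolerates.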

The authors also identify a connection to adversarial examples. Features represented with sparse codings can interfere with each other. Though this might be rare in the training distribution, an adversary could easily induce it. This would help explain why adversarial robustness comes at a cost: reducing interference requires the model to learn fewer features. This suggests that adversarially trained models should be wider, which has been common for years in adversarial robustness. (This is not to say this provides the first explanation for why wider adversarial models do better. A different explanation is that it’s a standard safe design principle to induce redundancy, and wider models have more capacity for redundant feature detectors.)

[Link]

Grokking is where “models generalize long after overfitting their training set.” Models trained on small algorithmic tasks like modular addition will initially memorize the training data, but then suddenly learn to generalize after training for a long time. Why does that happen? This paper attempts to unravel this mystery by completely reverse-engineering a modular addition model and examining how it evolves across training.

Takeaway: For grokking to happen, there must be enough data for the model to eventually generalize but little enough for the model to quickly memorize it.

Plenty of training data: phase change but no grokking

Few training examples: grokking occurs

This algorithm, based on discrete Fourier transforms and trigonometric identities, was learned by the model to perform modular arithmetic. It was reverse-engineered by the author.
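The Fourier-based approach to modular addition can be written out directly. The sketch below is a plain reimplementation of the idea, not the model's weights: the modulus `p`, the chosen frequencies, and the function name are illustrative assumptions.

```python
import numpy as np

def fourier_mod_add(a, b, p=113, freqs=(1, 2, 3, 5, 8)):
    """Compute (a + b) mod p using only sines, cosines, and an argmax."""
    c = np.arange(p)              # every candidate answer
    logits = np.zeros(p)
    for k in freqs:
        w = 2 * np.pi * k / p
        # Angle-addition identities combine per-input terms into
        # cos(w(a+b)) and sin(w(a+b)).
        cos_ab = np.cos(w * a) * np.cos(w * b) - np.sin(w * a) * np.sin(w * b)
        sin_ab = np.sin(w * a) * np.cos(w * b) + np.cos(w * a) * np.sin(w * b)
        # cos(w(a+b-c)) equals 1 exactly when c = (a + b) mod p.
        logits += cos_ab * np.cos(w * c) + sin_ab * np.sin(w * c)
    return int(np.argmax(logits))
```

Because `p` is prime and every frequency is nonzero mod `p`, each cosine term reaches 1 only at the correct answer, so the summed logits have a unique maximum there.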

Careful reverse engineering can shed light on confusing phenomena.

[Link]

Quick background: trojans (also called ‘backdoors’) are vulnerabilities planted in models that make them fail when a specific trigger is present. They are both a near-term security concern and a microcosm for developing better monitoring techniques.

How does this paper make trojans more evasive?

It boils down to adding an ‘evasiveness’ term to the loss, which includes factors like:

- Distribution matching: How similar are the model’s parameters & activations to a clean (untrojaned) model?

- Specificity: How many different patterns will trigger the trojan? (fewer = harder to detect)
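As a rough sketch of how such a term could be assembled, the code below combines a parameter-matching penalty with a specificity penalty. Every name, the squared-distance measure, and the use of target-class probabilities on decoy triggers are assumptions for illustration; the paper's actual loss is not reproduced here.

```python
import numpy as np

def evasiveness_penalty(params, clean_params, decoy_target_probs):
    """Hypothetical evasiveness term for training a trojaned model.

    params / clean_params: lists of weight arrays with matching shapes
    decoy_target_probs: probability of the trojan's target class on
        inputs that carry similar-but-wrong triggers
    """
    # Distribution matching: stay close to the clean model's parameters.
    param_gap = sum(
        float(np.mean((p - c) ** 2)) for p, c in zip(params, clean_params)
    )
    # Specificity: the trojan should not fire on decoy triggers.
    specificity = float(np.mean(decoy_target_probs))
    return param_gap + specificity

def total_loss(task_loss, trojan_loss, penalty, weight=1.0):
    """Task and trojan objectives plus the weighted evasiveness term."""
    return task_loss + trojan_loss + weight * penalty
```

The `weight` argument trades off how well the trojan works against how closely the model resembles a clean one.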

Evasive trojans are slightly harder to detect and substantially harder to reverse engineer, showing that there is a lot of room for further research into detecting hidden functionality.

Accuracy of different methods for predicting the trojan target class

[Link]

- [Link] OpenOOD: a comprehensive OOD detection benchmark that implements over 30 methods and supports nine existing datasets.

Modifying a single character or a word can cause a text classifier to fail. This paper ports two tricks to improve adversarial defenses from computer vision:

Trick 1: Anomaly Detection

Adversarial examples often include odd characters or words that make them easy to spot. Requiring adversaries to fool anomaly detectors significantly improves robust accuracy.

Trick 2: Add Randomized Data Augmentations

Substituting synonyms, inserting a random adjective, or back translating can clean text while mostly preserving its meaning.
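The two tricks can be combined in a small pipeline: reject inputs that look anomalous, then classify several randomly augmented copies and take a majority vote. The sketch below uses a toy out-of-vocabulary check, a tiny hand-written synonym table, and a keyword classifier. All of these are hypothetical stand-ins, not the paper's components.

```python
import random
from collections import Counter

# Hypothetical synonym table; a real system would use a much larger resource.
SYNONYMS = {"great": ["excellent", "superb"], "bad": ["poor", "awful"]}

def looks_anomalous(text, vocabulary):
    """Trick 1 (toy version): flag any token outside the known vocabulary."""
    return any(tok not in vocabulary for tok in text.lower().split())

def augment(text, rng):
    """Trick 2 (toy version): randomly swap words for listed synonyms."""
    tokens = text.lower().split()
    return " ".join(
        rng.choice(SYNONYMS[t] + [t]) if t in SYNONYMS else t for t in tokens
    )

def robust_predict(classify, text, n_votes=5, seed=0):
    """Majority vote over randomly augmented copies of the input."""
    rng = random.Random(seed)
    votes = Counter(classify(augment(text, rng)) for _ in range(n_votes))
    return votes.most_common(1)[0][0]

def toy_classify(text):
    """Stand-in sentiment classifier based on keyword matching."""
    positive = {"great", "excellent", "superb"}
    return "pos" if positive & set(text.split()) else "neg"

vocab = {"the", "movie", "was", "great", "bad"}
```

Here `looks_anomalous("the m0vie was great", vocab)` flags the character-level edit, while `robust_predict(toy_classify, "the movie was great")` votes over synonym-substituted variants of the clean sentence.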

Attack accuracy drops considerably after adding defenses (“w Cons.” column)

[Link]

- [Link] Improves certified adversarial robustness by combining the speed of interval-bound propagation with the generality of cutting plane methods.

- [Link] Increases OOD robustness by removing corruptions with diffusion models.

Many cultures developed rules restricting food, clothing, or language that are difficult to explain. Could these ‘spurious’ norms have accelerated the development of rules that were essential to the flourishing of these civilizations? If so, perhaps they could also help future AI agents utilize norms to cooperate better.

In the ‘no-rules’ environment, agents collect berries for reward. One berry is poisonous and reduces reward after a time delay. In the ‘important rule’ environment, eating poisonous berries is a social taboo. Agents that eat them are marked, and other agents can receive a reward for punishing them. The ‘silly rule’ environment is the same, except that a non-poisonous berry is also taboo. The plot below demonstrates that agents learn to avoid poisoned berries more quickly in the environment with the spurious rule.

[Link]

The ML Safety course

If you are interested in learning about cutting-edge ML Safety research in a more comprehensive way, there is now a course with lecture videos, written assignments, and programming assignments. It covers technical topics in Alignment, Monitoring, Robustness, and Systemic Safety.

[Link]
