MIT engineers design proteins by their motion, not just their shape

An AI model generates novel proteins based on how they vibrate and move, opening new possibilities for dynamic biomaterials and adaptive therapeutics.

Proteins are far more than nutrients we track on a food label. Present in every cell of our bodies, they work like nature’s molecular machines. They walk, stretch, bend, and flex to do their jobs: pumping blood, fighting disease, building tissue, and carrying out countless other tasks too small for the eye to see. Their power comes not from shape alone, but also from how they move.

In recent years, artificial intelligence has allowed scientists to design entirely new protein structures, not found in nature, that are tailored for specific functions such as binding to viruses or mimicking the mechanical properties of silk for sustainable materials. But designing for structure alone is like building a car body without any control over how the engine performs. The subtle vibrations, shifts, and mechanical dynamics of a protein are just as critical to its function as its form.

Now, MIT engineers have taken a major step toward closing that gap with an AI model known as VibeGen. If vibe coding lets programmers describe what they want and have AI generate the software, VibeGen does the same for proteins: specify the vibe, meaning the pattern of motion you want, and the model writes the protein.

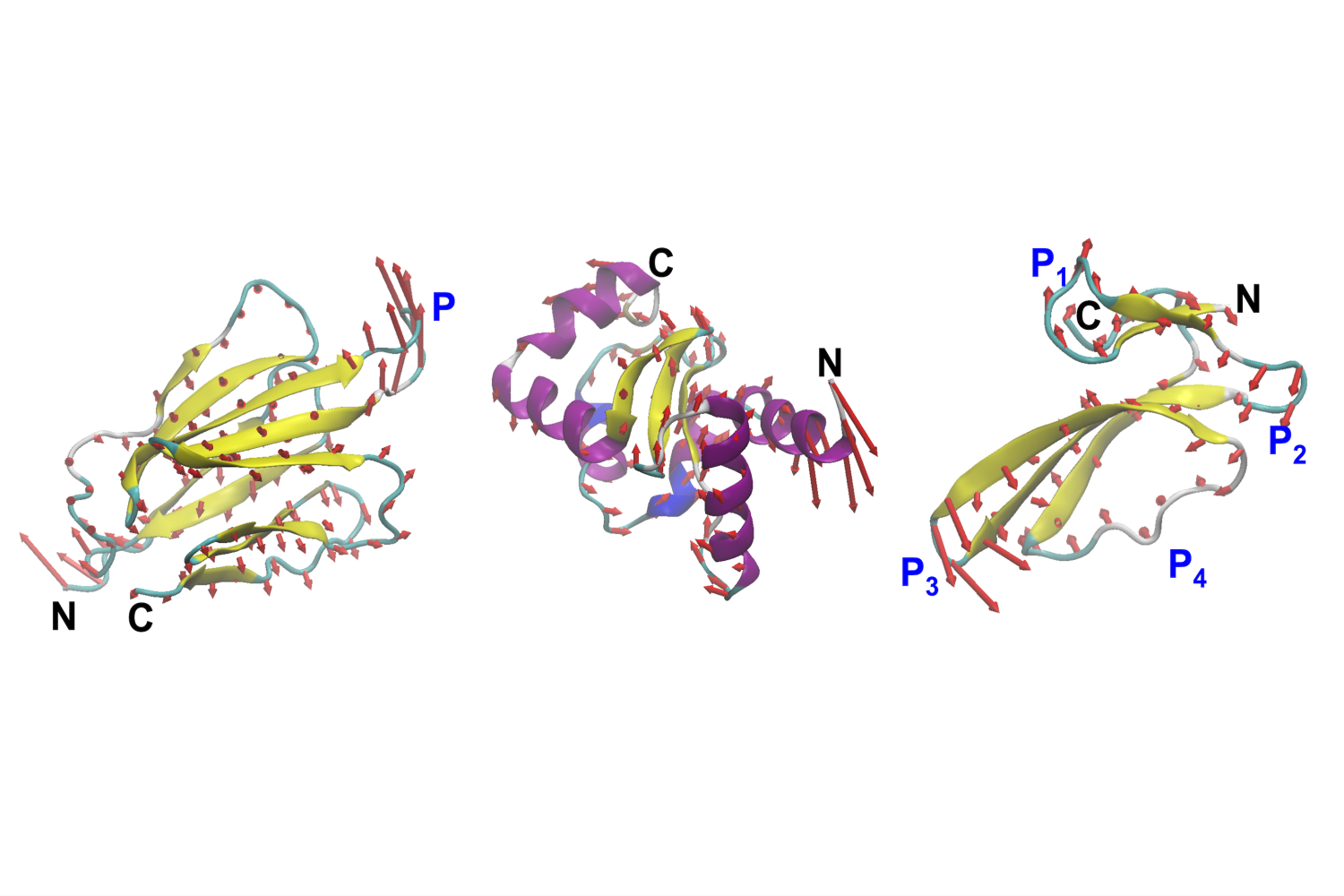

The new model allows scientists to target how a protein flexes, vibrates, and shifts between shapes in response to its environment, opening a new frontier in the design of molecular mechanics. VibeGen builds on a series of advances from the Buehler lab in agentic AI for science — systems in which multiple AI models collaborate autonomously to solve problems too complex for any single model.

“The essence of life at fundamental molecular levels lies not just in structure, but in movement,” says Markus Buehler, the Jerry McAfee Professor of Engineering in the departments of Civil and Environmental Engineering and Mechanical Engineering. “Everything from protein folding to the deformation of materials under stress follows the fundamental laws of physics.”

Buehler and his former postdoc, Bo Ni, identified a critical need for what they call physics-aware AI: systems capable of reasoning about motion, not just snapshots of molecular structure. “AI must go beyond analyzing static forms to understanding how structure and motion are fundamentally intertwined,” Buehler adds.

The new approach, described in a paper published March 24 in the journal Matter, uses generative AI to create proteins with tailor-made dynamics.

Training AI to think about motion

The revolution in AI-driven protein science has been, overwhelmingly, a revolution in structure. Tools like AlphaFold solved the decades-old problem of predicting a protein’s three-dimensional shape. Generative models followed, learning to design new shapes from scratch. But in focusing on the folded snapshot, the protein frozen in place, the field largely set aside the property that makes proteins work: their motion. “Structure prediction was such a grand challenge that it absorbed the field’s attention,” Buehler says. “But a protein’s shape is just one frame of a much longer film, and the design space extends through space and time, where structure sits on a much broader manifold.” Scientists could design a protein with a particular architecture, but they couldn’t yet specify how that protein would move, flex, or vibrate once it was built.

VibeGen does something no protein design tool has done before. It inverts the traditional problem. Rather than asking, “What shape will this sequence produce?” it asks, “What sequence will make a protein move in exactly this way?”

To build VibeGen, Buehler and Ni turned to diffusion models, the same class of generative AI that powers image generators capable of creating realistic pictures from pure noise. In VibeGen’s case, the model starts with a random sequence of amino acids and refines it, step by step, until it converges on a sequence predicted to vibrate and flex in the targeted way.

The system works through two cooperating agents that design and challenge each other. A “designer” proposes candidate sequences aimed at a target motion profile. A “predictor” evaluates those candidates, asking whether they’ll actually move the way the designer intended. The two models iterate back and forth, like an internal dialogue, until the design stabilizes into something that meets the goal. By taking this motion profile, a kind of vibrational fingerprint, as the design input, VibeGen inverts the usual logic: dynamics becomes the blueprint, and structure follows.

“It’s a collaborative system,” Ni says. “The designer proposes, the predictor critiques, and the design improves through that tension.”
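To make the propose-and-critique idea concrete, here is a toy sketch in Python. It is not VibeGen and not a diffusion model: it swaps in a simple greedy mutation search. The per-residue “flexibility” scores, the smoothing “predictor,” and the names `FLEX`, `predict_profile`, and `design` are all hypothetical, invented for illustration; a real pipeline would need a physics-based or learned predictor of protein dynamics. The sketch only shows the shape of the loop: start from a random sequence, let a designer propose a change, let a predictor score it against a target motion profile, and keep changes that bring the design closer.

```python
import random

# Hypothetical per-residue "flexibility" scores, invented for illustration only.
# Real protein dynamics depend on 3D structure and physics, not a lookup table.
FLEX = {"G": 1.0, "S": 0.8, "N": 0.7, "D": 0.6, "K": 0.5,
        "A": 0.4, "L": 0.3, "V": 0.2, "I": 0.15, "W": 0.1}
ALPHABET = sorted(FLEX)


def predict_profile(seq: str, window: int = 1) -> list[float]:
    """Toy 'predictor': per-residue flexibility averaged over neighboring residues."""
    n = len(seq)
    profile = []
    for i in range(n):
        lo, hi = max(0, i - window), min(n, i + window + 1)
        vals = [FLEX[seq[j]] for j in range(lo, hi)]
        profile.append(sum(vals) / len(vals))
    return profile


def error(profile: list[float], target: list[float]) -> float:
    """Mean squared difference between predicted and target motion profiles."""
    return sum((p - t) ** 2 for p, t in zip(profile, target)) / len(target)


def design(target: list[float], steps: int = 5000, seed: int = 0) -> tuple[str, float]:
    """Toy 'designer': start from a random sequence and keep point mutations
    that the predictor scores as no worse against the target profile."""
    rng = random.Random(seed)
    seq = [rng.choice(ALPHABET) for _ in target]
    best = error(predict_profile("".join(seq)), target)
    for _ in range(steps):
        i = rng.randrange(len(seq))
        old = seq[i]
        seq[i] = rng.choice(ALPHABET)                          # designer proposes
        score = error(predict_profile("".join(seq)), target)   # predictor critiques
        if score <= best:
            best = score      # accept the proposal
        else:
            seq[i] = old      # reject it and revert
    return "".join(seq), best


if __name__ == "__main__":
    # Hypothetical target: rigid ends with a flexible hinge in the middle.
    target = [0.2] * 6 + [0.9] * 4 + [0.2] * 6
    for s in range(3):
        seq, err = design(target, seed=s)
        print(seq, round(err, 4))
```

Because each run starts from a different random sequence, different seeds will typically end at different sequences with comparable error, a small-scale echo of the point that many sequences can share one motion target.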

Most sequences VibeGen produces are entirely de novo: not borrowed from nature, and not variations on something evolution already made. To check that the designs actually work, the team ran detailed physics-based molecular simulations, and in those simulations the proteins behaved as intended, flexing and vibrating in the patterns VibeGen had targeted.

One of the study’s most striking findings is that many different protein sequences and folds can satisfy the same vibrational target, a property the researchers call functional degeneracy. Where evolution converged on one solution, VibeGen reveals an entire family of alternatives: proteins with different structures and sequences that nonetheless move in the same way. “It suggests that nature explored only a fraction of what’s possible,” Buehler says. “For any given dynamic behavior, there may be a large, untapped space of viable designs.”
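Functional degeneracy can be illustrated with a deliberately tiny toy model: enumerate every six-residue sequence over a four-letter alphabet and keep those whose motion fingerprint lands within a tolerance of a target. The flexibility values, the moving-average fingerprint, the tolerance, and the function names are all hypothetical; the sketch only shows how a many-to-one mapping from sequence to motion yields several valid answers for one target, not how the researchers measured degeneracy.

```python
from itertools import product

# Hypothetical per-residue flexibility scores (illustrative only, not measured values).
FLEX = {"G": 1.0, "S": 0.8, "A": 0.4, "V": 0.2}


def fingerprint(seq: str) -> list[float]:
    """Toy motion fingerprint: 3-residue moving average of flexibility."""
    out = []
    for i in range(len(seq)):
        window = seq[max(0, i - 1): i + 2]  # one neighbor on each side, clipped at the ends
        out.append(sum(FLEX[c] for c in window) / len(window))
    return out


def matches(seq: str, target: list[float], tol: float) -> bool:
    """True if every position of the fingerprint is within tol of the target."""
    return all(abs(p - t) <= tol for p, t in zip(fingerprint(seq), target))


def solutions(target: list[float], tol: float = 0.2) -> list[str]:
    """Enumerate every sequence of the target's length and keep those that match."""
    candidates = ("".join(s) for s in product(FLEX, repeat=len(target)))
    return [seq for seq in candidates if matches(seq, target, tol)]


if __name__ == "__main__":
    target = [0.3, 0.3, 0.7, 0.7, 0.3, 0.3]  # rigid ends, flexible middle
    hits = solutions(target)
    print(f"{len(hits)} of {len(FLEX) ** len(target)} sequences match the target")
    print(hits[:5])
```

With the default tolerance of 0.2, this rigid-flexible-rigid target is met by several distinct sequences, for example `VVSSVV`, `VVSGVV`, and `VVGGVV`. Exhaustive enumeration is only feasible at this toy scale; for real protein lengths the search space is astronomically large, which is why a generative model is needed to explore it.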

A new frontier in molecular engineering

Controlling protein dynamics could have wide-ranging applications. In medicine, proteins that can change shape on cue hold enormous potential. Many therapeutic proteins work by binding to a target, such as a virus, a cancer cell, or a misfiring receptor. How well they bind often depends not just on their shape but on how flexibly they can adapt to their target. A protein engineered for specific motions could grip its target more precisely, reduce unintended interactions, and ultimately become a safer, more effective drug.

In materials science, one of Buehler’s research areas, a material’s performance depends on mechanical properties at the molecular scale. Biological materials like silk and collagen get their strength and resilience from the coordinated motion of their molecular building blocks. Designing proteins that are stiffer or more flexible, or that vibrate in particular ways, could lead to new sustainable fibers, impact-resistant materials, or biodegradable alternatives to petroleum-based plastics.

Buehler envisions further possibilities: structural materials for buildings or vehicles incorporating protein-based components that heal themselves after mechanical stress, or that adjust in response to heavy load.

By enabling researchers to specify motion as a direct design parameter, VibeGen treats proteins less like static shapes and more like programmable mechanical devices. The advance connects artificial intelligence, medicine, synthetic biology, and materials engineering, pointing toward a future in which molecular machines can be designed with the same precision and intentionality as bridges, engines, or microchips.

“VibeGen can venture into uncharted territory, proposing protein designs beyond the repertoire of evolution, tailored purely to our specifications. It’s as if we’ve invented a new creative engine that designs molecular machines on demand,” Buehler adds.

The researchers plan to refine the model further and validate their designs in the lab. They also hope to integrate motion-aware design with other AI tools, building toward systems that can design proteins that are not just dynamic but multifunctional: machines that sense their environment, respond to signals, and adapt in real time.

The word “vibe” comes from vibration, and Buehler sees the connection as more than wordplay. “We’ve turned ‘vibe’ into a metaphor, a feeling, something subjective,” he says. “But for a protein, the vibe is the physics. It is the actual pattern of motion that determines what the molecule can do, the very machinery of life.”

The research was supported by the U.S. Department of Agriculture, the MIT-IBM Watson AI Lab, and MIT’s Generative AI Initiative.
