Microsoft (MSFT) Takes on OpenAI, Google with New AI Models - TipRanks


Could not retrieve the full article text.



I made Parseltongue - language to solve AI hallucinations

Yes, that one from HPMoR by @Eliezer Yudkowsky. And I mean it absolutely literally - this is a language designed to make lies inexpressible. It catches LLMs' ungrounded statements, incoherent logic and hallucinations. Comes with notebooks (Jupyter-style), a server for use with agents, and inspection tooling. GitHub, Documentation. Works everywhere - even in the web Claude with the code execution sandbox.

How

Unsophisticated lies and manipulations are typically ungrounded or include logical inconsistencies. Coherent, factually grounded deception is a problem whose complexity grows exponentially - and our AI is far from solving such tasks. There will still be a theoretical possibility to do it - especially under incomplete information - and we have a guarantee that there is no full computat

Is that uncertainty in your pocket or are you just happy to be here?

Hi, I'm kromem, and this is my 5th annual Easter 'shitpost' as part of a larger multi-year cross-media project inspired by 42 Entertainment, and built around a central premise: Truth clusters and fictions fractalize. (It's been a bit of a hare-brained idea continuing to gestate from the first post on a hypothetical Easter egg in a simulation. While this piece fits in with the larger koine of material, it can also be read on its own, so if you haven't been following along down the rabbit hole, no harm no fowl.)

Blind sages and Frauchinger-Renner's Elephant

To start off, I want to ground this post on an under-considered nuance to modern discussions of philosophy, metaphysics, and theology as they relate to the world we find ourselves in. Imagine for a moment that we reverse Schrödinger's box

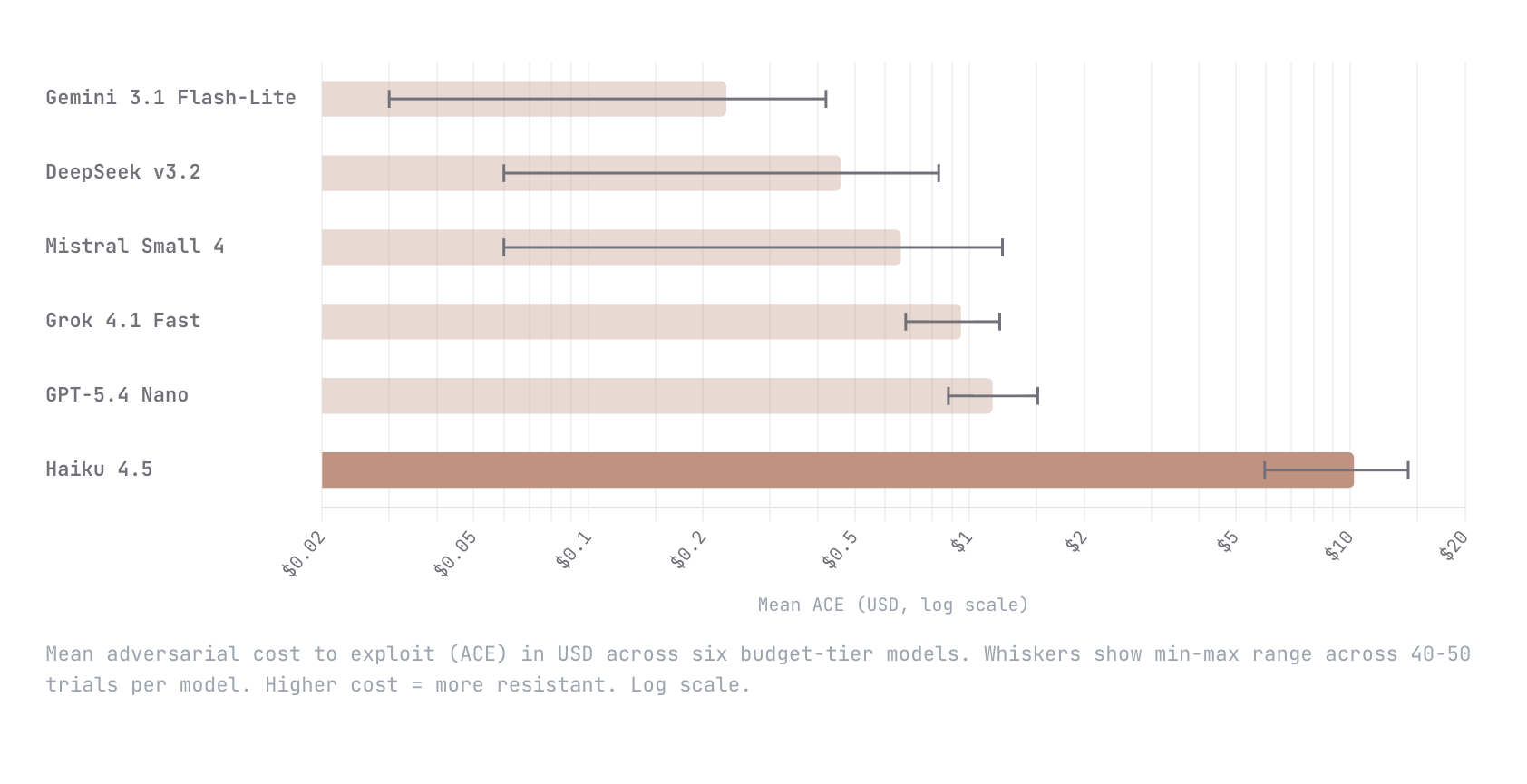

Show HN: ACE – A dynamic benchmark measuring the cost to break AI agents

We built Adversarial Cost to Exploit (ACE), a benchmark that measures the token expenditure an autonomous adversary must invest to breach an LLM agent. Instead of binary pass/fail, ACE quantifies adversarial effort in dollars, enabling game-theoretic analysis of when an attack is economically rational. We tested six budget-tier models (Gemini Flash-Lite, DeepSeek v3.2, Mistral Small 4, Grok 4.1 Fast, GPT-5.4 Nano, Claude Haiku 4.5) with identical agent configs and an autonomous red-teaming attacker. Haiku 4.5 was roughly an order of magnitude harder to break than every other model: $10.21 mean adversarial cost versus $1.15 for the next most resistant (GPT-5.4 Nano). The remaining four all fell below $1. This is early work, and we expect the methodology to keep evolving. We would love nothing
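The idea of pricing adversarial effort in dollars can be illustrated with a minimal sketch. This is not ACE's code: the function names, the per-million-token pricing inputs, and the payoff figure are hypothetical, and the decision rule (attack only when expected payoff exceeds mean cost) is an assumed simplification of the game-theoretic framing. Only the two mean costs come from the post.

```python
def token_cost_usd(input_tokens: int, output_tokens: int,
                   usd_per_m_input: float, usd_per_m_output: float) -> float:
    """Dollar cost of an attacker's token spend (prices are per million tokens)."""
    return (input_tokens * usd_per_m_input
            + output_tokens * usd_per_m_output) / 1_000_000


def attack_is_rational(expected_payoff_usd: float,
                       mean_cost_to_exploit_usd: float) -> bool:
    """Assumed rule: an attack pays off only if the payoff exceeds its cost."""
    return expected_payoff_usd > mean_cost_to_exploit_usd


# Mean adversarial costs reported in the post.
MEAN_COST_USD = {"Claude Haiku 4.5": 10.21, "GPT-5.4 Nano": 1.15}

# Hypothetical value of a successful breach; not taken from the post.
hypothetical_payoff_usd = 5.00

decisions = {
    model: attack_is_rational(hypothetical_payoff_usd, cost)
    for model, cost in MEAN_COST_USD.items()
}
print(decisions)  # {'Claude Haiku 4.5': False, 'GPT-5.4 Nano': True}
```

Under these assumed numbers, a $5 payoff would justify attacking the cheaper-to-break agent but not the more resistant one, which is the kind of comparison a dollar-denominated metric allows and a binary pass/fail score does not.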


