LLM Embeddings Enable Variant-Level Genomic Representations - letsdatascience.com

<a href="https://news.google.com/rss/articles/CBMiowFBVV95cUxPT29HV0M4d2lfel8zVFRSc2VmU2ktYUF3cGZzQTVYUzVZZjZDNldpNWZoYUxFTzgxWUZ0SWcyanFPVzJYa1o2U3NhaXhsaGVJMEpxWTF0SUFnSWVNLU5XUUdWb2ZlMWhOeE14ZkUxVDJ4c3E2NFlrdGRfR2xZMXlnUlM4dkoydk9hTGZhSmUyWWtseG8tVW05d3pmclhjVUtxSE0w?oc=5" target="_blank">LLM Embeddings Enable Variant-Level Genomic Representations</a> <font color="#6f6f6f">letsdatascience.com</font>

Could not retrieve the full article text.

More in Models

Q&A with Simon Willison on the November release of GPT-5.1 and Opus 4.5 as the inflection point for coding, exhaustion due to managing coding agents, and more (Lenny Rachitsky/Lenny s Newsletter)

Lenny Rachitsky / Lenny's Newsletter : Q&A with Simon Willison on the November release of GPT-5.1 and Opus 4.5 as the inflection point for coding, exhaustion due to managing coding agents, and more Simon Willison is a prolific independent software developer, a blogger, and one of the most visible and trusted voices on the impact AI is having on builders.

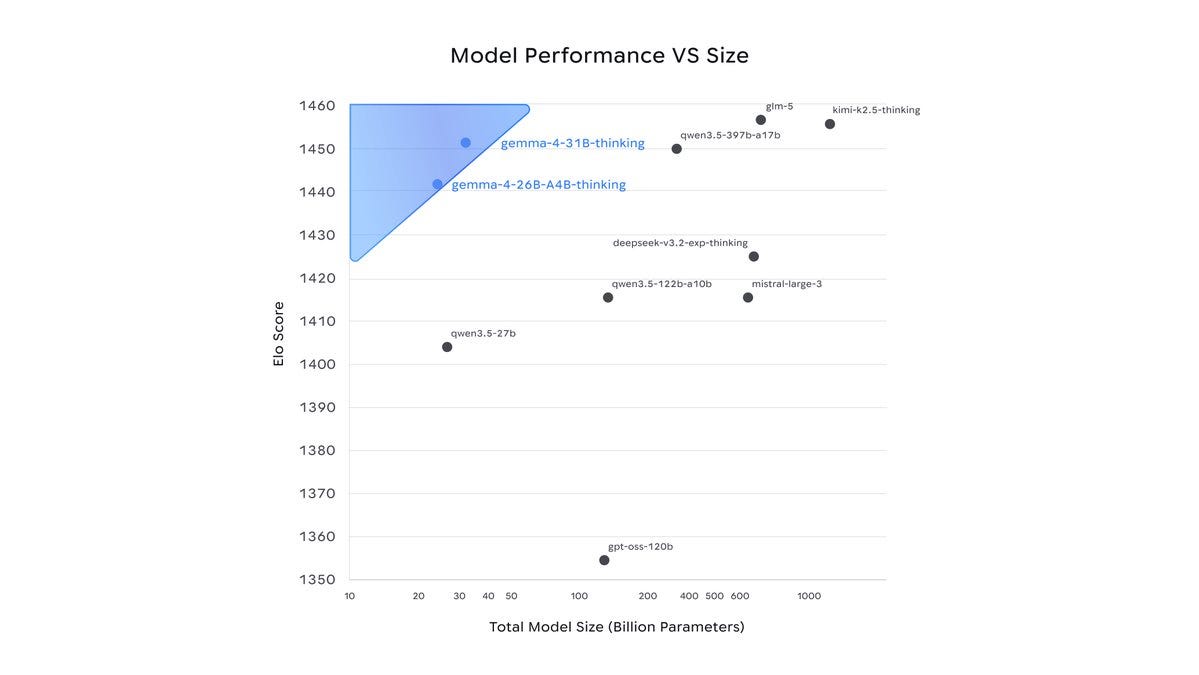

Google open sources Gemma 4 AI models that outperform models 20x their size | The models work with near-zero latency | Inshorts - inshorts.com

Google open sources Gemma 4 AI models that outperform models 20x their size | The models work with near-zero latency | Inshorts inshorts.com
