How AI-powered echolocation is giving small drones night vision

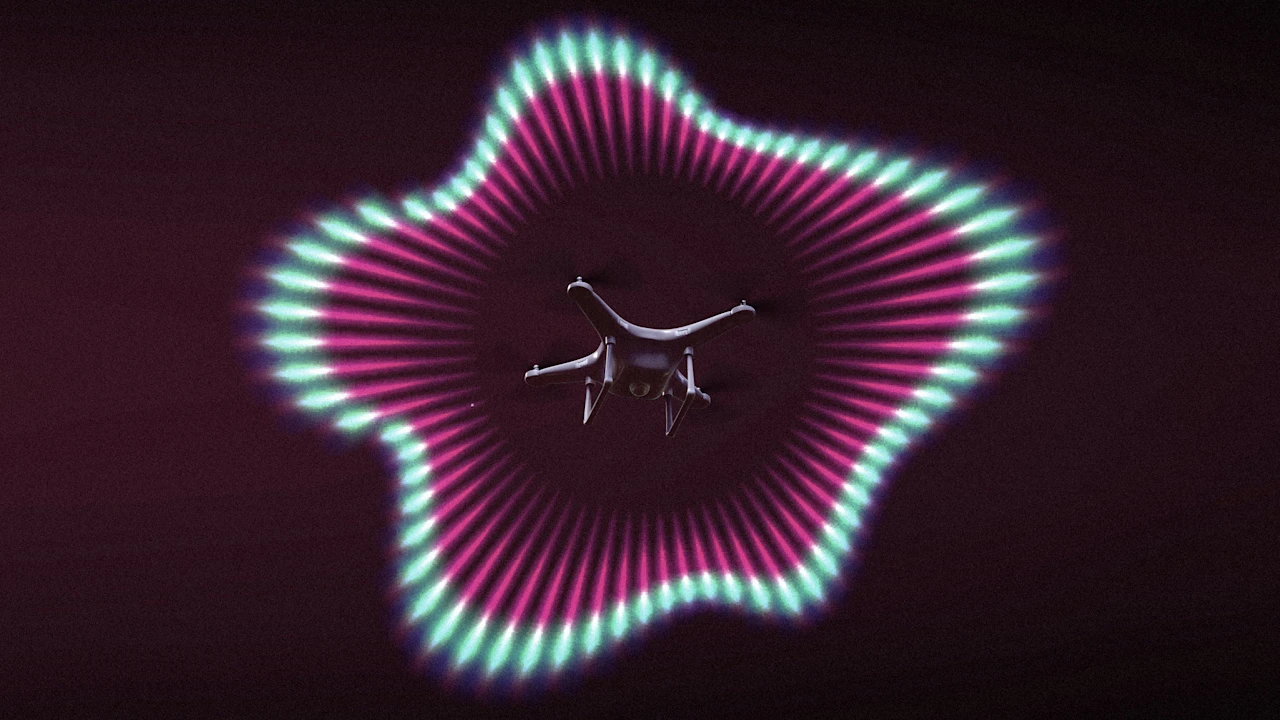

To help small aerial robots navigate in the dark and other low-visibility environments, my colleagues and I developed an ultrasound-based perception system inspired by bat echolocation.

Current robots rely heavily on cameras or light detection and ranging, known as lidar, or both. But these sensors fail in visually challenging conditions, such as smoke, fog, dust, snow, or complete darkness.

I’m a scientific engineer who develops bio-inspired microrobots. To solve this challenge, my research team looked at nature’s experts at navigating in poor visibility: bats. They thrive in dark, damp, and dusty caves and can detect obstacles as thin as a human hair using echolocation while weighing as little as two paper clips. They emit sound waves and listen to weak echoes reflected from objects.

However, enabling this sensing on aerial robots is extremely challenging because propellers generate a lot of noise. It is a bit like trying to listen to your friend while a jet engine is taking off next to you.

To overcome this issue, we developed two key ideas. First, a physical acoustic shield, inspired by the cartilage of a bat’s ear, reduces propeller noise around the acoustic sensors, which act like the robot’s ears. Second, a neural network called Saranga recovers weak echo signals from very noisy measurements by learning patterns over time, inspired by how bats process sound.

Together, these enable the robot to estimate obstacle locations in 3D and navigate safely using milliwatt-level sensing power.
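For readers who want a feel for the basic principle of echo ranging, here is a minimal Python sketch. It is not Saranga and not the team's method: it swaps in a textbook matched filter (cross-correlating the recording with the emitted pulse) on simulated data. The sampling rate, chirp frequencies, noise level, and all function names are assumptions chosen for illustration only.

```python
import math
import random

SPEED_OF_SOUND_M_S = 343.0   # assumed: air at roughly 20 °C
SAMPLE_RATE_HZ = 200_000     # assumed microphone sampling rate


def make_chirp(n_samples, f0_hz=30_000.0, f1_hz=50_000.0):
    """Linear frequency sweep, a stand-in for the emitted ultrasound pulse."""
    duration_s = n_samples / SAMPLE_RATE_HZ
    sweep_rate = (f1_hz - f0_hz) / duration_s
    pulse = []
    for i in range(n_samples):
        t = i / SAMPLE_RATE_HZ
        pulse.append(math.sin(2 * math.pi * (f0_hz * t + 0.5 * sweep_rate * t * t)))
    return pulse


def simulate_recording(pulse, delay_samples, n_samples=2000,
                       echo_gain=0.5, noise_std=0.5, seed=0):
    """Gaussian noise plus one attenuated echo; echo power is below the noise power."""
    rng = random.Random(seed)
    recording = [rng.gauss(0.0, noise_std) for _ in range(n_samples)]
    for i, p in enumerate(pulse):
        recording[delay_samples + i] += echo_gain * p
    return recording


def find_echo_delay(recording, pulse):
    """Matched filter: return the offset where the pulse correlates most strongly."""
    best_offset, best_score = 0, float("-inf")
    for offset in range(len(recording) - len(pulse) + 1):
        score = sum(recording[offset + i] * p for i, p in enumerate(pulse))
        if score > best_score:
            best_offset, best_score = offset, score
    return best_offset


def delay_to_range_m(delay_samples):
    """Sound travels to the obstacle and back, so halve the round-trip distance."""
    delay_s = delay_samples / SAMPLE_RATE_HZ
    return SPEED_OF_SOUND_M_S * delay_s / 2.0


if __name__ == "__main__":
    pulse = make_chirp(200)
    recording = simulate_recording(pulse, delay_samples=1166)
    lag = find_echo_delay(recording, pulse)
    print(f"estimated range: {delay_to_range_m(lag):.2f} m")
```

The matched filter works here because the simulated noise is random and uncorrelated with the pulse. Propeller noise on a real drone is far louder and more structured, which is the gap the acoustic shield and the learned model are meant to close.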

Why it matters

These types of drones are very useful for search and rescue, especially in confined, dynamic, and dangerous environments, because they’re small and inexpensive. Search-and-rescue operations often happen in environments where visibility is very poor, such as forest fires, collapsed buildings, caves, or dusty outdoor conditions. In these scenarios, traditional sensors like cameras and lidar often become unreliable.
