Generate short videos with the Replicate playground

Create AI videos with a convenient workflow.

Posted January 17, 2025 by deepfates

AI video generation is here, but it’s not always easy to get the results you want. In this guide, I’ll share a convenient workflow for creating AI video with the Replicate playground.

The playground is an experimental web interface that gives you a scrapbook-style UI for testing different models, comparing their outputs, and keeping a record of your experiments. We built it for quick iteration with image models, but we’ve found it works great for video models too.

Step 1: Start with an image

Text-to-video generation is still much slower than text-to-image. Rather than starting from a text prompt, waiting a few minutes for each output, and hoping to luck into a good result, start with an image. It gives you more predictable video output.

You might find an existing image on your phone or in a family photo album, or generate one with a Replicate image model. You could even fine-tune a model to match a specific character, style, or aesthetic.

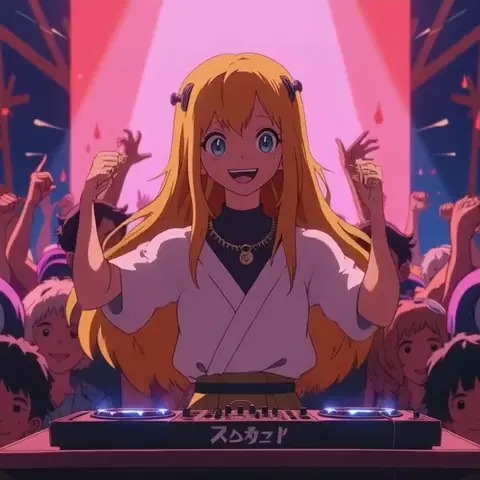

The datacte/flux-aesthetic-anime model is a popular fine-tune with a Studio Ghibli-inspired anime style. Let’s import it into the playground.

Open the playground and click on the model selector, then “Manage models”. Input the model name and hit Enter to add it to the playground.

Now you can make as many images as you want, adjusting the prompt and parameters until you get the look you want. Here I used the prompt “a blonde DJ performing for a crowd of happy dancing people”. This will be the starting point for your video.
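If you'd rather script this step than click through the playground, here is a minimal sketch using the Replicate Python client. It assumes the model takes a `prompt` input (check the model page for the full schema), that `REPLICATE_API_TOKEN` is set in your environment, and that the model can be referenced by name alone. Community models may need an explicit version suffix. The helper names are my own, not part of any API.

```python
# Model name is from the article; a ":<version>" suffix may be required
# for community models (assumption: left off here).
IMAGE_MODEL = "datacte/flux-aesthetic-anime"


def build_image_input(prompt: str) -> dict:
    """Build the input payload. Only `prompt` is assumed to exist."""
    prompt = prompt.strip()
    if not prompt:
        raise ValueError("prompt must not be empty")
    return {"prompt": prompt}


def generate_image(prompt: str, model: str = IMAGE_MODEL):
    """Run the image model and return whatever the client returns."""
    # Imported lazily so the payload helper works without the package.
    import replicate  # pip install replicate

    return replicate.run(model, input=build_image_input(prompt))
```

Calling `generate_image("a blonde DJ performing for a crowd of happy dancing people")` should give you one or more image outputs to pick from. The exact return type depends on the client version.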

Step 2: Generate and refine your video

Once the image is finalized, it’s time to bring it to life.

Replicate’s playground has some video models included by default. Let’s use minimax/video-01-live, as it’s especially good for consistency with animated characters.

Download your favorite image from the previous step, then drag it to the first_frame_image field.

Put a prompt in the text box that represents the way you want the video to move. This could be a description of the characters, the background, or the camera movement.

In this case, I tried a couple of things before settling on “a blonde DJ performs for a crowd of happy dancing people, smiling and moving her head and arms to the music”. This gets the character moving and interacting with the crowd.

There aren’t a lot of inputs for this model, but the prompt_optimizer can be helpful. You can try the same image with and without the prompt optimizer enabled and see how the results differ.

Click “Run” to generate a video. It will take a couple of minutes. If you want, you can queue up more runs to get multiple outputs for the same inputs, or try different models.
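The same comparison can be sketched in code: one run with the prompt optimizer on, one with it off. The `first_frame_image` and `prompt_optimizer` names come from the playground fields above. The `prompt` field name and the helper functions are assumptions on my part. Unlike the playground queue, this sketch runs the two predictions one after another and blocks until each finishes.

```python
VIDEO_MODEL = "minimax/video-01-live"


def build_video_input(first_frame, prompt: str, prompt_optimizer: bool = True) -> dict:
    """Build one payload; `first_frame` can be a URL or an open binary file."""
    return {
        "prompt": prompt,  # assumed field name
        "first_frame_image": first_frame,
        "prompt_optimizer": prompt_optimizer,
    }


def generate_videos(image_path: str, prompt: str) -> list:
    """Run the model twice: optimizer enabled, then disabled."""
    import replicate  # pip install replicate

    outputs = []
    for flag in (True, False):
        # Reopen the file for each run so every upload starts at byte 0.
        with open(image_path, "rb") as frame:
            payload = build_video_input(frame, prompt, prompt_optimizer=flag)
            outputs.append(replicate.run(VIDEO_MODEL, input=payload))
    return outputs
```

Each run takes a couple of minutes, so expect `generate_videos` to block for a while before returning both outputs.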

Step 3: Add sound

Once you have a video you like, it’s easy to add sound with the zsxkib/mmaudio model. Add this model to the list like we did before. Download your video, then drag it to the video field.

Add a prompt like “people cheering, electronic music”. The MMAudio model will try to match the video as well as the prompt, so the music will hopefully be on beat with the motion of the video. This step is much quicker and cheaper than generating the video. Try a few different prompts to see what you like.
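Since this step is quick, it's a natural place to loop over a few prompts. Here is a rough sketch, assuming the model accepts `video` (the field named above) and `prompt` inputs, and that you pass the video as a URL so the same source can be reused across runs. The helper names are hypothetical.

```python
AUDIO_MODEL = "zsxkib/mmaudio"


def build_audio_inputs(video, prompts) -> list:
    """Return one payload per sound prompt, all pointing at the same video."""
    return [{"video": video, "prompt": p} for p in prompts]


def add_sound(video_url: str, prompts) -> list:
    """Run the audio model once per prompt and collect the outputs."""
    import replicate  # pip install replicate

    return [
        replicate.run(AUDIO_MODEL, input=payload)
        for payload in build_audio_inputs(video_url, prompts)
    ]
```

For example, `add_sound(video_url, ["people cheering, electronic music"])` would return a one-item list holding the video with its new soundtrack.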

Once the video and sound are polished, get it out there! Share it on social media, or use it as part of a larger project. You can grab the code snippet from the playground and generate images, video, and audio with the API.
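To give a sense of what the full workflow might look like through the API, here is a sketch that chains the three models. The playground's own code snippet is the authoritative version. This one assumes the input field names used in this guide plus `prompt`, and assumes each model returns either a single output or a list whose first item converts to a URL with `str()`. The `run` argument is any callable with the shape of `replicate.run`, which also makes the function easy to try with a stub.

```python
def first_url(output) -> str:
    """Return a URL string from a model output (single item or list)."""
    item = output[0] if isinstance(output, (list, tuple)) else output
    return str(item)


def run_pipeline(run, image_prompt: str, motion_prompt: str, audio_prompt: str) -> dict:
    """Image, then video from that image, then audio on that video."""
    image_url = first_url(
        run("datacte/flux-aesthetic-anime", input={"prompt": image_prompt})
    )
    video_url = first_url(
        run(
            "minimax/video-01-live",
            input={"prompt": motion_prompt, "first_frame_image": image_url},
        )
    )
    final_url = first_url(
        run("zsxkib/mmaudio", input={"prompt": audio_prompt, "video": video_url})
    )
    return {"image": image_url, "video": video_url, "video_with_audio": final_url}
```

With the client installed, `run_pipeline(replicate.run, ...)` would run all three steps in order. Unlike the playground, this takes the first image automatically, so you lose the chance to pick your favorite between steps.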

This workflow makes AI video creation more structured and repeatable. Try it with different styles, characters, and prompts, and let us know what you create!
