Gemma 4: first LLM to score 100% on my multilingual tool-calling tests

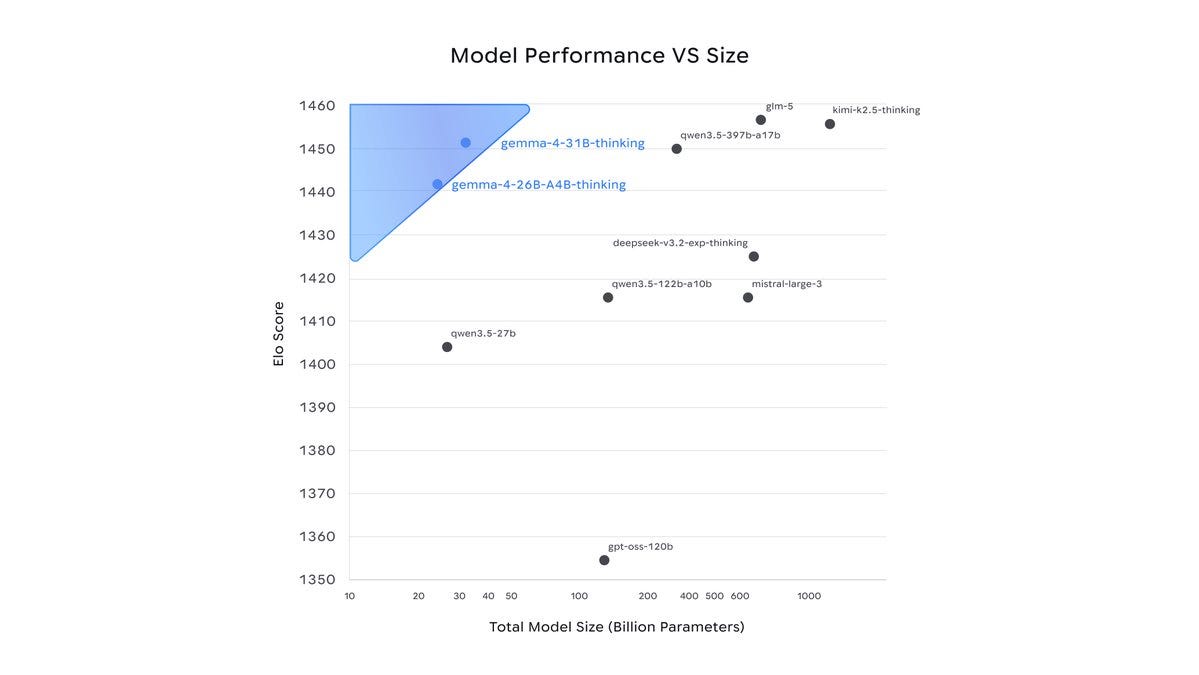

I have been self-hosting LLMs since before Llama 3 was a thing, and Gemma 4 is the first model that actually achieves a 100% success rate in my tool-calling tests. My main use for LLMs is a custom-built voice assistant powered by n8n, with custom tools in the backend such as web search and custom MQTT tools. The big thing is that my household is multilingual: we use English, German, and Japanese. Depending on which wake word is used, the context, prompt, and tool descriptions switch to that language.

My setup has 68 GB of VRAM (two 3090s plus a 20 GB 3080), and I mainly use MoE models to minimize latency. I have previously used everything from the 30B MoEs, Qwen Next, and GPT-OSS to GLM Air, and so far the only model with a 100% tool-calling success rate across all three languages is Gemma 4 26B A4B.

Source: Reddit r/LocalLLaMA, https://www.reddit.com/r/LocalLLaMA/comments/1sbd1t7/gemma_4_first_llm_to_100_my_multi_lingual_tool/
