Fluency without understanding: risks of large language models in mental healthcare - Cambridge University Press & Assessment

<a href="https://news.google.com/rss/articles/CBMiiwJBVV95cUxNX1Bmeks2VXByZXRZNHhjcmtldXIzc00yOHE2MTNuU0ExOG9YZ0ZCYi1pSzMzTkp3eW95V1Qtbi1rQkJqUEwwODJ2MmNlUGhRMmhOS1JoNkFzMl9WcTFFN2tJT3hyak9rcEUydEhwZFJQM1FmZTVrYXlXbEE5Z3JNMFpvUC1mWjF1UFFSdFduMkpCaDRuZ0swek1jTndGWEh6UUlrM21kWGRiWDBpNW11bmpkWVZnMExlX0JITm51Vkw2MEtidFZsbXV0WE5GVUplMnpnQ0tTSkdxWVNabTI4T2ZOcDRydkZaa0FpN3BEOEpDWEFpUFBjd3lHcDhHZFVyX0xQX19ieTVUaDQ?oc=5" target="_blank">Fluency without understanding: risks of large language models in mental healthcare</a> <font color="#6f6f6f">Cambridge University Press & Assessment</font>

Could not retrieve the full article text.

Will machines ever be intelligent?

Are machines truly intelligent? AI researchers Subutai Ahmad and Nicolò Fusi join Doug Burger to compare transformer-based AI with the human brain, exploring continual learning, efficiency, and whether today’s models are on a path toward human intelligence. The post "Will machines ever be intelligent?" appeared first on Microsoft Research.

A Tale of Two Rigours

A familiarity with the pre-rigor/post-rigor ontology might be helpful for reading this post. University math is often sold to students as imbuing in them the spirit of rigor and respect for iron-clad truth. The value in a real analysis course comes not from the specific results that it teaches — those are largely known to scientifically literate students by the time they take it. Instead, they are asked to relearn all those things from first principles; in so doing, they strip themselves of bad habits they previously learned and are inducted into the skeptical culture of the mathematician. Pedagogical and exam materials usually support this goal, putting emphasis on proof-writing, careful argumentation and attention to detail. This incentivises the student to cultivate an invaluable attitu

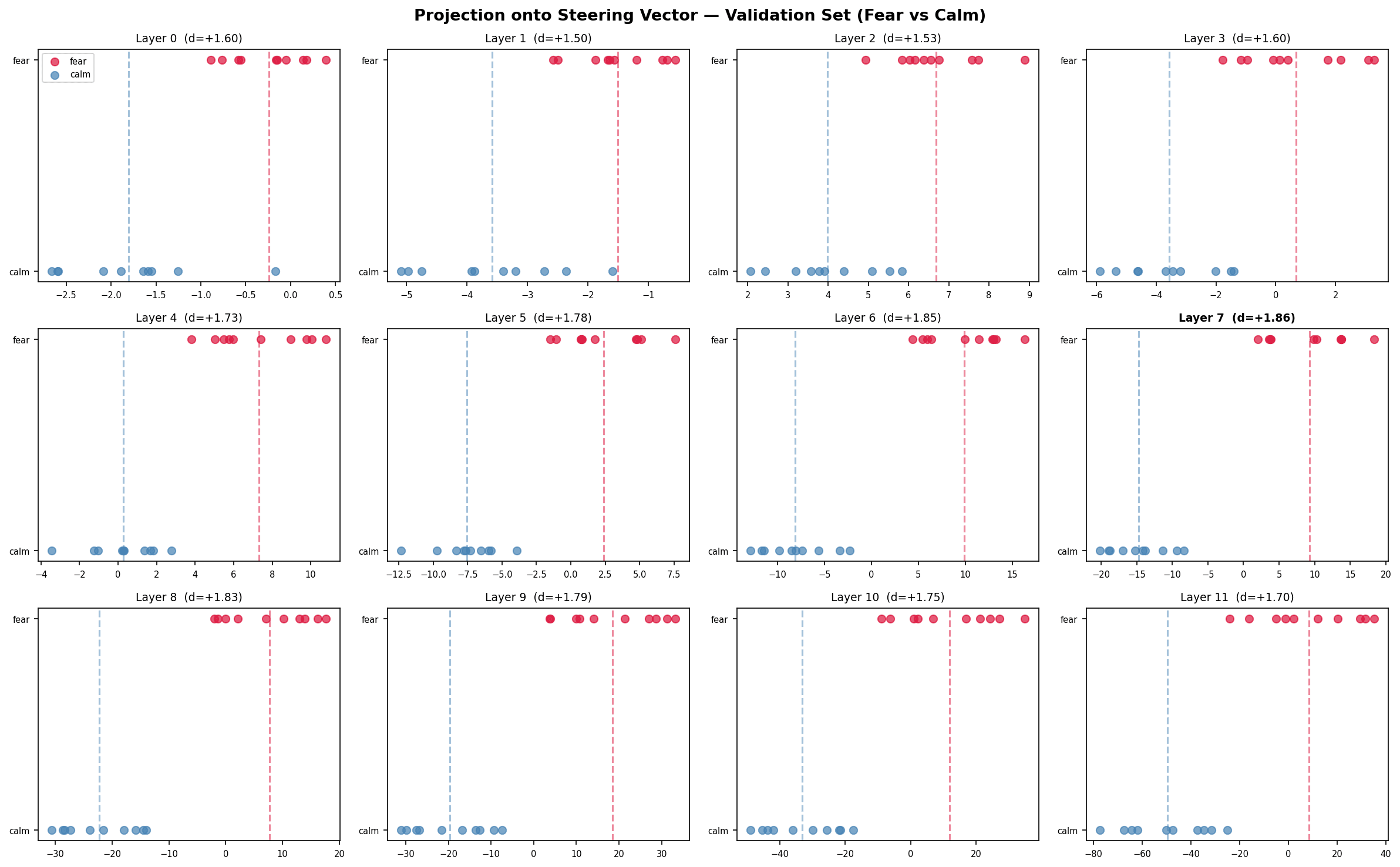

Does GPT-2 Have a Fear Direction?

Anthropic dropped a paper this morning showing that Claude Sonnet 4.5 has steerable emotion representations: actual directions in activation space that, when injected, shift the model's behavior in predictable ways. They found a non-monotonic anger flip: push the steering vector hard enough and the model flips to something qualitatively different from anger. The paper only covered their very large, heavily instruction-tuned model. This post is a write-up of the same experiment at a tiny scale. The Setup: I generated 40 situational prompt pairs to extract a fear direction via difference-in-means. The prompts contain no emotional words, and the contrast is entirely situational. Ex: standing at the edge of a rooftop versus standing at the edge of a meadow, alone in a parking garage at m
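
The difference-in-means extraction mentioned in the teaser can be sketched roughly as follows. This is a minimal illustration, not the post's actual code: the function names `difference_in_means`, `steer`, and `projection` are hypothetical, and the three-dimensional toy vectors stand in for real GPT-2 activations, which the excerpt does not show.

```python
import math

def difference_in_means(pos_acts, neg_acts):
    """Unit vector from the mean of neg_acts toward the mean of pos_acts.

    Each argument is a list of equal-length activation vectors (hypothetical
    stand-ins for activations recorded on the two sides of each prompt pair).
    """
    dim = len(pos_acts[0])
    mean_pos = [sum(v[i] for v in pos_acts) / len(pos_acts) for i in range(dim)]
    mean_neg = [sum(v[i] for v in neg_acts) / len(neg_acts) for i in range(dim)]
    diff = [p - n for p, n in zip(mean_pos, mean_neg)]
    norm = math.sqrt(sum(d * d for d in diff))
    if norm == 0.0:
        raise ValueError("class means coincide; no direction to extract")
    return [d / norm for d in diff]

def steer(activation, direction, alpha):
    """Add alpha times the direction to an activation vector."""
    return [a + alpha * d for a, d in zip(activation, direction)]

def projection(activation, direction):
    """Scalar component of an activation along a unit direction."""
    return sum(a * d for a, d in zip(activation, direction))

# Toy data (assumption): the two groups differ only along the first axis.
fear_acts = [[2.0, 0.0, 1.0], [4.0, 0.0, 1.0]]
neutral_acts = [[0.0, 0.0, 1.0], [0.0, 0.0, 1.0]]

direction = difference_in_means(fear_acts, neutral_acts)
steered = steer([0.0, 1.0, 0.0], direction, alpha=2.5)
```

In this toy setup the extracted direction is the first axis, and steering with `alpha=2.5` raises the activation's projection onto that direction by 2.5 while leaving the other components unchanged.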

More in Models

Claude AI finds Vim, Emacs RCE bugs that trigger on file open

Article URL: https://www.bleepingcomputer.com/news/security/claude-ai-finds-vim-emacs-rce-bugs-that-trigger-on-file-open/ Comments URL: https://news.ycombinator.com/item?id=47632805 Points: 5 # Comments: 1
