Discovery of the reward function for embodied reinforcement learning agents - Nature

https://news.google.com/rss/articles/CBMiX0FVX3lxTE9WWlNBeGY4V0haaENNSElrQm5kbTJpNnJwdU9VaXZlTGZ2MFFOTm1KbGpZckVQTU9HQnBoRFFfLUV0U2dvS1QzTkFFX0g5U1dfZkxEa1FHX1FvdHluYVVB?oc=5

Could not retrieve the full article text.

More about

embodiedagent

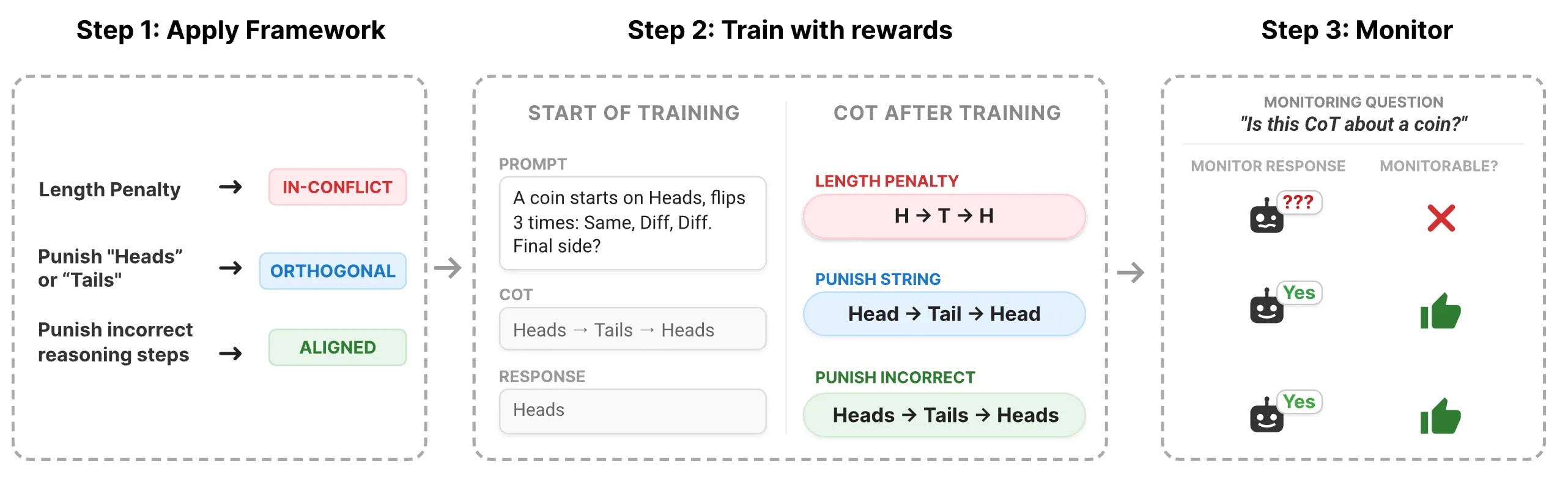

Predicting When RL Training Breaks Chain-of-Thought Monitorability

Crossposted from the DeepMind Safety Research Medium Blog . Read our full paper about this topic by Max Kaufmann, David Lindner, Roland S. Zimmermann, and Rohin Shah. Overseeing AI agents by reading their intermediate reasoning “scratchpad” is a promising tool for AI safety. This approach, known as Chain-of-Thought (CoT) monitoring, allows us to check what a model is thinking before it acts, often helping us catch concerning behaviors like reward hacking and scheming . However, CoT monitoring can fail if a model’s chain-of-thought is not a good representation of the reasoning process we want to monitor. For example, training LLMs with reinforcement learning (RL) to avoid outputting problematic reasoning can result in a model learning to hide such reasoning without actually removing problem

OpenClaw Nodes: Connecting Your AI Agent to Physical Devices

<p>Your AI agent lives on a gateway. The gateway talks to Slack, Discord, or Telegram. But what if you want the agent to see through a camera, grab your phone's location, snap a screenshot, or run a shell command on a remote server? That's what <strong>nodes</strong> are for.</p> <p>A node is a companion device — iOS, Android, macOS, or any headless Linux machine — that connects to the OpenClaw Gateway over WebSocket and exposes a command surface. Once paired, your agent can invoke those commands as naturally as any other tool call. No polling loops, no bespoke APIs. Just pairing and using.</p> <h2> What Is a Node? </h2> <p>In OpenClaw's architecture, the <strong>gateway</strong> is the always-on brain — it receives messages, runs the model, routes tool calls. A <strong>node</strong> is a
