Deepfakes of Maduro are a reminder that AI content thrives in political chaos - fastcompany.com

<a href="https://news.google.com/rss/articles/CBMihAFBVV95cUxNX2Fod1RBU0xfSHVnTFVrN3BsTWZxT0tVaUVkQ096bGZyY2xoMUpsaF9WYTRfeE9mZF9FekM1a2k4N3Vud2xESzZBcjVJa0tGM1lEU3VGbVZ6WTlPc1Jxa0xTc2hha25GSDAtNFBWd3RYNnVYZE84SXRzbzVCcm1LSWVDZjA?oc=5" target="_blank">Deepfakes of Maduro are a reminder that AI content thrives in political chaos</a> <font color="#6f6f6f">fastcompany.com</font>

Could not retrieve the full article text.

Read on Google News - AI Venezuela

https://news.google.com/rss/articles/CBMihAFBVV95cUxNX2Fod1RBU0xfSHVnTFVrN3BsTWZxT0tVaUVkQ096bGZyY2xoMUpsaF9WYTRfeE9mZF9FekM1a2k4N3Vud2xESzZBcjVJa0tGM1lEU3VGbVZ6WTlPc1Jxa0xTc2hha25GSDAtNFBWd3RYNnVYZE84SXRzbzVCcm1LSWVDZjA?oc=5

Knowledge Map

Connected Articles — Knowledge Graph

This article is connected to other articles through shared AI topics and tags.

More in Products

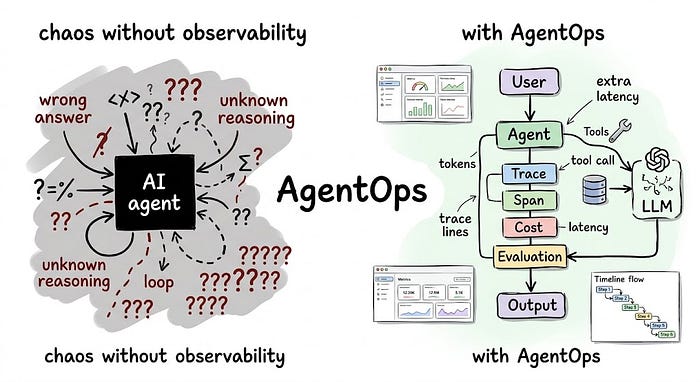
