Critical Vulnerability in Claude Code Emerges Days After Source Leak - SecurityWeek





Analysis of LLM Performance on AWS Bedrock: Receipt-item Categorisation Case Study

arXiv:2604.01615v1. Abstract: This paper presents a systematic, cost-aware evaluation of large language models (LLMs) for receipt-item categorisation within a production-oriented classification framework. We compare four instruction-tuned models available through AWS Bedrock: Claude 3.7 Sonnet, Claude 4 Sonnet, Mixtral 8x7B Instruct, and Mistral 7B Instruct. The study aims (1) to assess performance across accuracy, response stability, and token-level cost, and (2) to investigate which prompting method, zero-shot or few-shot, is most appropriate in terms of both accuracy and incurred cost. Our experiments show that Claude 3.7 Sonnet achieves the most favourable balance between classification accuracy and cost efficiency.


More in Models

Gemma 4 is good

Waiting for artificialanalysis to produce an intelligence index, but I can already see it's good. Gemma 26b a4b is the same speed on Mac Studio M1 Ultra as Qwen3.5 35b a3b (~1000pp, ~60tg at 20k context length, llama.cpp). And in my short test, it behaves way, way better than Qwen, not even close. Chain of thought on Gemma is concise, helpful and coherent, while Qwen does a lot of inner-gaslighting and also loops a lot on default settings. Visual understanding is very good, and multilingual seems good as well. Tested Q4_K_XL on both. I wonder if mlx-vlm properly handles prompt caching for Gemma (it doesn't work for Qwen 3.5). Too bad its KV cache is gonna be monstrous, as it did not implement any tricks to reduce that; hopefully TurboQuant will help with that soon. I expect censorship to be dogshit, I s

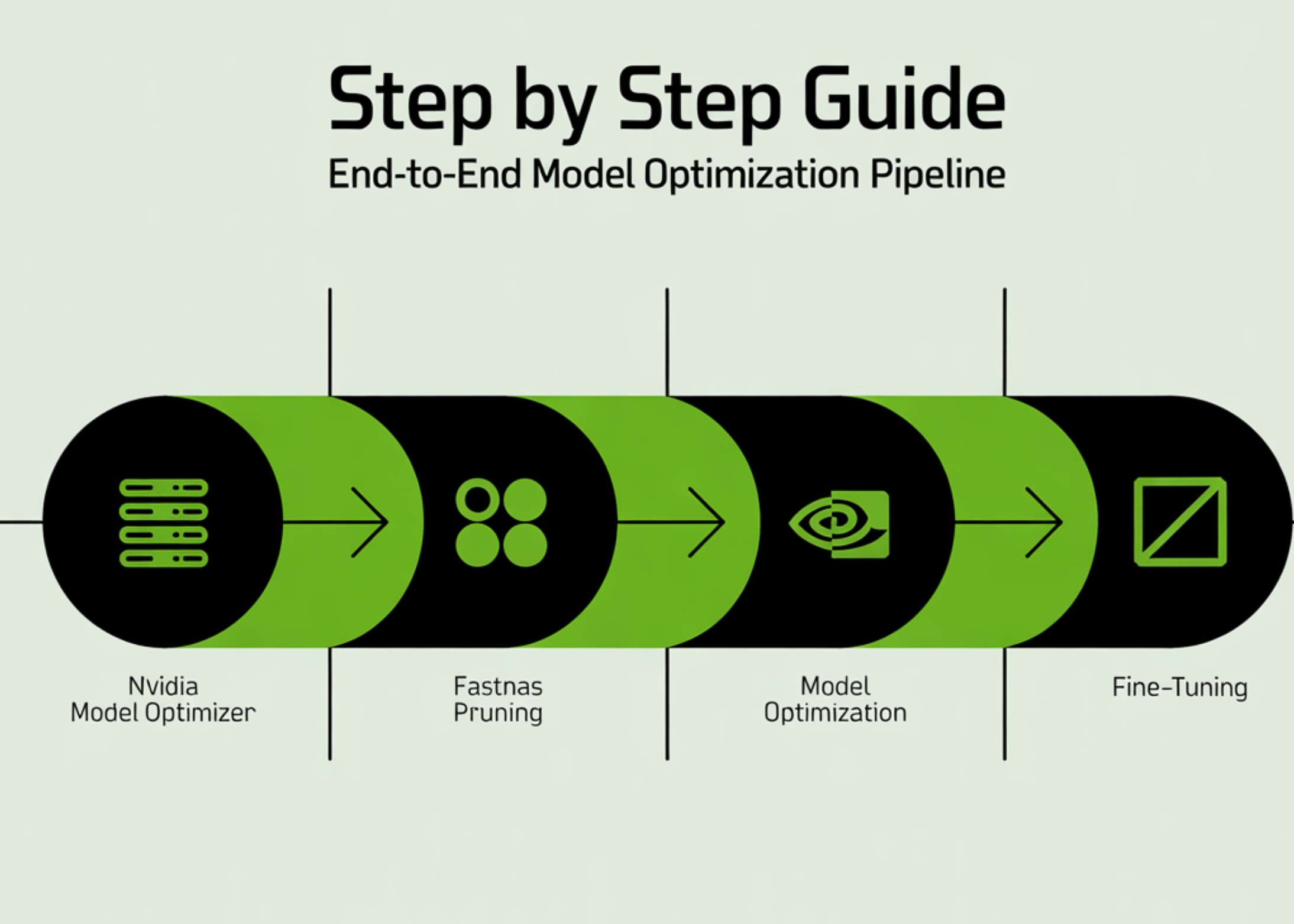

Step by Step Guide to Build an End-to-End Model Optimization Pipeline with NVIDIA Model Optimizer Using FastNAS Pruning and Fine-Tuning

In this tutorial, we build a complete end-to-end pipeline using NVIDIA Model Optimizer to train, prune, and fine-tune a deep learning model directly in Google Colab. We start by setting up the environment and preparing the CIFAR-10 dataset, then define a ResNet architecture and train it to establish a strong baseline. From there, we apply [...] (First published on MarkTechPost.)
