Cleaned 10k customer records. One emoji crashed my entire pipeline.


Was scraping ecommerce product reviews last month. Got 10k records, ran a cleaning script to normalize text before feeding it to a sentiment analysis tool. Script ran fine on test data (500 rows). Pushed it to production.

48 minutes in, the whole thing just stops. No error message. Just frozen.

Thought it was memory. 10k rows shouldn't be a problem, but maybe something leaked. Restarted the process, added memory tracking. Same thing. Froze at exactly the same spot (row 6,842).

Checked the CSV manually. Row 6,842 looked fine. Customer name, review text, rating. Nothing weird.

Then I noticed it.

The review had a 💩 emoji in it. Specifically: "This product is 💩 don't buy it"

Encoding hell

My script was using basic text encoding. UTF8, right? Wrong. I was reading the CSV with encoding='latin-1' because an earlier version of the data had some Spanish characters that broke with utf8.

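Emojis are multibyte UTF8 characters (💩 is four bytes). Latin1 can't represent them. It maps every single byte to some character, so decoding never raises an error and the emoji silently turns into junk. From where I was sitting, Python's csv reader just... stopped. No exception, no warning. Just hung there.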
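To see what latin-1 actually does with an emoji's bytes, here's a minimal sketch in plain Python (no CSV involved, and not a reproduction of the hang itself). The variable names are just for illustration.

```python
# Sketch: what latin-1 does to the UTF-8 bytes of an emoji.
emoji = "💩"
raw = emoji.encode("utf-8")        # 4 bytes: f0 9f 92 a9

# latin-1 maps every byte to a character, so this never raises...
mangled = raw.decode("latin-1")    # ...but yields 4 junk characters

# If nothing else has touched the text, the damage is reversible.
repaired = mangled.encode("latin-1").decode("utf-8")
```

The point is that a wrong encoding can fail quietly: the text comes back the wrong length and full of garbage, and nothing tells you.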

Ended up doing this:

```python
import pandas as pd

# Read as UTF-8; encoding_errors='replace' swaps undecodable bytes for �
df = pd.read_csv(
    'reviews.csv',
    encoding='utf-8',
    encoding_errors='replace',
)

# Clean out replacement chars
df['review_text'] = df['review_text'].str.replace('�', '', regex=False)

# Remove emojis if you don't need them.
# .str.replace leaves missing values alone (str(x) would turn NaN into 'nan').
df['review_text'] = df['review_text'].str.replace(r'[^\x00-\x7F]+', '', regex=True)

df.to_csv('cleaned_reviews.csv', index=False, encoding='utf-8')
```


That regex strips anything outside basic ASCII range. Emojis, accents, special characters gone.
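If losing accents is too aggressive (the Spanish characters from earlier would go too), a narrower pattern is one option. This is a rough sketch: `EMOJI_PATTERN` and `strip_emoji` are names I made up, and the Unicode ranges are an assumption that covers most common emoji, not an exhaustive list.

```python
import re

# Assumption: these ranges catch most emoji but are not a complete list.
EMOJI_PATTERN = re.compile(
    "["
    "\U0001F000-\U0001FAFF"  # pictographs, emoticons, transport, extra symbols
    "\u2600-\u27BF"          # miscellaneous symbols and dingbats
    "\uFE0F\u200D"           # variation selector and zero-width joiner
    "]+"
)


def strip_emoji(text: str) -> str:
    """Remove emoji but leave accented letters alone."""
    return EMOJI_PATTERN.sub("", text)
```

Something like `df['review_text'].map(strip_emoji, na_action='ignore')` should apply it to the column while skipping missing values.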

If you need to keep emojis (some sentiment analysis tools actually use them), just stick with utf8 and don't strip them:

```python
df = pd.read_csv('reviews.csv', encoding='utf-8')

# That's it. Just use utf-8 consistently.
```


What would've saved me time

My 500 row test set had zero emojis. Production data had 147 emojis across 10k rows. Testing with real data would've caught this immediately.
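One cheap way to check whether a test sample looks like production is to count the rows containing anything outside ASCII. A minimal sketch, with `non_ascii_rows` as a hypothetical helper and made-up sample strings:

```python
def non_ascii_rows(texts):
    """Return indexes of entries containing any non-ASCII character."""
    return [i for i, t in enumerate(texts) if not str(t).isascii()]


# Made-up sample data for illustration.
sample = ["Great product", "This product is 💩 don't buy it", "Está bien"]
flagged = non_ascii_rows(sample)
```

If the test set flags nothing and production flags plenty, the test set probably isn't representative.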

Also added logging after this mess:

```python
for idx, row in df.iterrows():
    if idx % 1000 == 0:
        print(f"Processing row {idx}...")

    # process row
```


Now if it breaks, I know exactly where.
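A slightly sturdier version of the same idea, sketched with the standard `logging` module. `process_all` and `process_row` are hypothetical names, and this assumes the per-row work is a plain function call.

```python
import logging

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("cleaner")


def process_all(rows, process_row, every=1000):
    """Apply process_row to each row, logging progress and failures."""
    failed = []
    for idx, row in enumerate(rows):
        if idx % every == 0:
            log.info("Processing row %d...", idx)
        try:
            process_row(row)
        except Exception:
            # Log the traceback plus the offending row, then keep going.
            log.exception("Row %d failed: %r", idx, row)
            failed.append(idx)
    return failed
```

This only catches rows that raise. For a real hang, the last progress line still narrows down where to look, and a smaller `every` narrows it further.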

Didn't know the encoding_errors parameter existed either. Reading as utf-8 with it set would've surfaced the problem right away instead of failing silently.
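The loud counterpart to 'replace' is strict decoding, which is Python's default for `bytes.decode`. Here's a small sketch that reports where the first undecodable byte sits. `first_bad_utf8_offset` is a hypothetical helper, not something from pandas.

```python
def first_bad_utf8_offset(data: bytes):
    """Return the byte offset of the first invalid UTF-8 sequence, or None."""
    try:
        data.decode("utf-8")  # errors="strict" is the default
    except UnicodeDecodeError as exc:
        return exc.start
    return None
```

Running it over the raw file bytes (for example `open('reviews.csv', 'rb').read()`) points at the exact spot to inspect. A latin-1 encoded é is the kind of byte it would flag.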

What I ended up doing

Kept emojis in the final dataset. The sentiment tool I was using (TextBlob) actually interprets 💩 correctly as negative sentiment. Stripping them would've lost signal.

Just had to commit to utf8 everywhere. CSV export, database inserts, API responses all utf8. No more mixing encodings.
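A quick round-trip check is one way to confirm the write side and the read side agree. This sketch uses the standard `csv` module rather than pandas, with a throwaway temp file and made-up rows.

```python
import csv
import os
import tempfile

# Made-up rows for illustration.
rows = [["name", "review_text"], ["Ana", "This product is 💩 don't buy it"]]

# Hypothetical scratch file, only for this check.
path = os.path.join(tempfile.mkdtemp(), "roundtrip.csv")

# Write and read with the same explicit encoding.
with open(path, "w", encoding="utf-8", newline="") as f:
    csv.writer(f).writerows(rows)

with open(path, encoding="utf-8", newline="") as f:
    read_back = list(csv.reader(f))
```

If `read_back` doesn't match `rows`, some step in between is using a different encoding.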

Still annoyed it took 48 minutes to find a single emoji tho.

DEV Community

https://dev.to/nicodev__/cleaned-10k-customer-records-one-emoji-crashed-my-entire-pipeline-3n1b

Conversation starters

Daily AI Digest

Get the top 5 AI stories delivered to your inbox every morning.

More about

versionproductanalysis

It's no longer free to use Claude through third-party tools like OpenClaw

Anthropic is no longer offering a free ride for third-party apps using its Claude AI. Boris Cherny, Anthropic's creator and head of Claude Code, posted on X that Claude subscriptions will no longer cover using the AI agent for third-party tools, like OpenClaw, for free. As of 3PM ET on April 4, anyone using Claude through third-party apps or software will have to do so with an extra usage bundle or with a Claude API key, according to Cherny. Most of Claude's workload may come from simple user questions, but there are those who use the AI chatbot through OpenClaw, a free and open-source AI assistant from the same developer as Moltbook . Unlike more general AI solutions, OpenClaw is designed to automate personal workflows, like clearing inboxes, sending emails or organizing calendars, but le

Building Production-Ready Agentic AI Systems for Enterprise Software Delivery

Episode 1: From POCs to Production - What I Learned Building Agentic Engineering Workflows 1. Context: The Gap Between Potential and Reality Over the last year, we’ve all seen how rapidly AI capabilities especially Large Language Models (LLM) have advanced. From code generation to reasoning tasks, the progress has been significant and genuinely impressive. Agentic AI: the Gap Between Potential and Reality Agentic AI GAP between Production Ready and Reality In controlled environments: Proof of Concepts (POCs) look promising Concept validations show strong efficiency gains Early experiments demonstrate clear potential However, once you move beyond demos and prototypes, a different challenge emerges: ** How do you make these capabilities reliable, repeatable, and production-ready within real

🚀 Wie ich ein AI Growth System gebaut habe, das konstant Leads liefert (kein Bullshit)

Die meisten Webseiten sind einfach nur digitale Visitenkarten. Schön? Vielleicht. Effektiv? Meistens nicht. Ich habe in den letzten Monaten ein System gebaut, das genau das löst. Kein „nice to have Design“. Sondern ein Setup, das messbar Kunden bringt. ⚠️ Das eigentliche Problem 90% der Businesses haben: ❌ Langsame Antworten (oder gar keine) ❌ Tote Kontaktformulare ❌ Webseiten ohne klare Conversion-Strategie Ergebnis: Traffic kommt rein und geht wieder. 🧠 Mein Ansatz: AI Growth System Ich kombiniere 3 Dinge zu einem System: Landingpages, die verkaufen Keine Spielereien. Keine 100 Unterseiten. 👉 Fokus auf: klare Message starke Hooks psychologische Trigger mobile-first UX Ziel: Conversion maximieren AI Chatbots (WhatsApp > alles andere) Warum WhatsApp? Weil: jeder es nutzt Antwortzeit = Se

Knowledge Map

Connected Articles — Knowledge Graph

This article is connected to other articles through shared AI topics and tags.

More in Products

Keeper Security brings zero-trust database access to its PAM platform with KeeperDB

Database credentials remain one of the most common attack vectors in enterprise breaches, yet most organisations still manage them through shared spreadsheets, hardcoded connection strings, or standalone credential vaults with no session oversight. Keeper Security, the Chicago-based cybersecurity company best known for its password management platform, is attempting to close that gap with KeeperDB, a [ ] This story continues at The Next Web

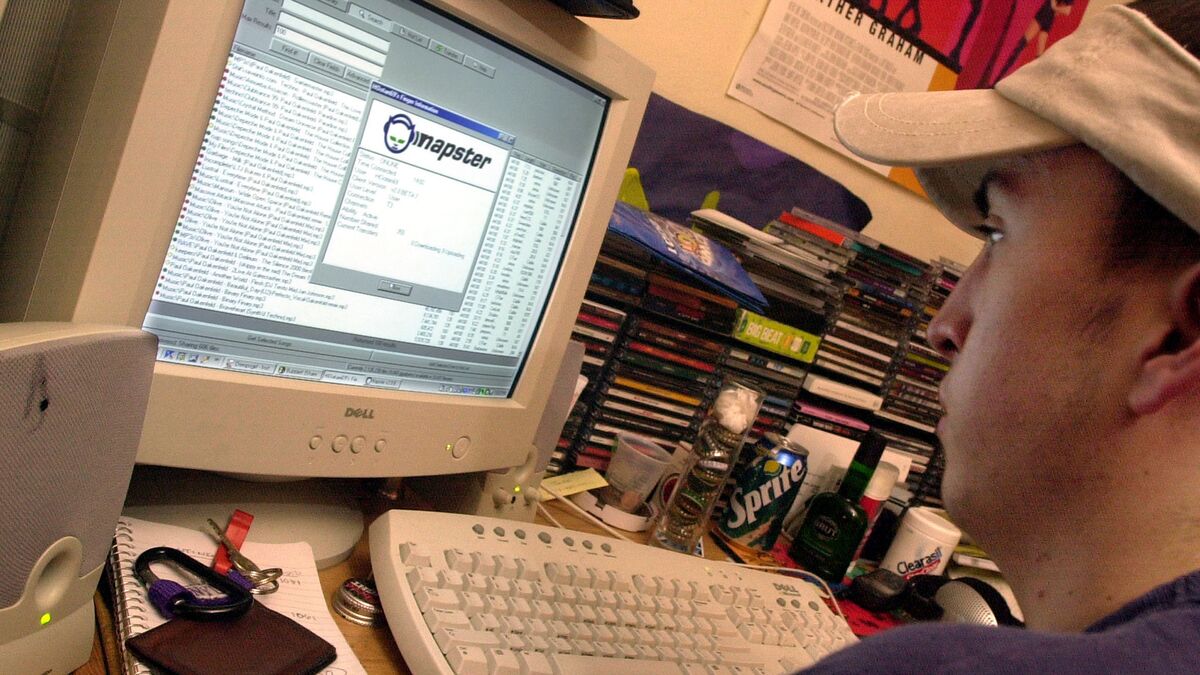

Napster is Evolving in the AI Era

Napster CEO John Acunto explains how the company has been reimagined, shifting focus from traditional music streaming to what they call "streaming intelligence." Watch his full interview on Bloomberg This Weekend with hosts Christina Ruffini and Lisa Mateo. (Source: Bloomberg)

Discussion

Sign in to join the discussion

No comments yet — be the first to share your thoughts!