Building a LEGO-like remote Agent - Jean2


I'm a huge fan of coding agents. My daily consumption is at about 7 million tokens and growing. I started on Cursor, fell in love with OpenCode, got into customizing setups with MCPs, Skills, and subagent orchestration — all while daily-driving GLM, Minimax, GPT models, and everything I could get my hands on at OpenRouter just for the new and shiny.

I love squeezing the best possible answers from small models with the right prompts. At some point, I wanted to use OpenCode for everything, not just coding.

And then I kinda hit a wall.

The Wall

Baked-in steering

Coding agents come with baked-in prompts that already steer them in a certain direction — great for an out-of-the-box solution, not so great when you want full control. You can create your own agent with custom steering, but it's always appended to whatever the system's baked-in prompt already says.

Rigid tooling

Built-in tools are already great, but you can't change or remove them: not their prompts, not the tools themselves. Adding your own usually doesn't feel the same. These systems aren't really built around the concept of bringing your own tools. Want an extra capability? Bring an MCP or a skill.

Session management

The TUI is great, but managing multiple projects or conversations not tied to any specific project just wasn't a comfortable experience. There are now GUI and Web options that handle it much better, but at the time, there weren't really stable, comfortable choices.

Multi-device continuity

Throw the phone into the mix, and it wasn't really there yet either. I just wanted to be able to create a spec doc with the agent on my phone, hand it off for execution, and review it on my laptop — seamlessly.

Enter Jean2

(Why Jean2 and not just Jean? Because Jean was kinda "not good.")

So I started building. Here's what came out of it:

Always running, seamless experience

Jean2 runs as a daemon with sockets and HTTP. The goal is a seamless experience — open a desktop client on your laptop, prompt what you want, pick up your phone, watch progress, and handle permissions. You can close the clients and leave it running with an 80–100 MB memory footprint across 3 different projects.

Absolutely and completely dumb

No baked-in prompts. The system message is built from your agent's system message and your AGENT.md files, and nothing else. This means you're not limited to building "your coding agent". You can build "your [anything] agent."
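To make the "nothing hidden" idea concrete, here is a minimal sketch of how such a system message could be assembled. It is not Jean2's actual code: the function name `build_system_message`, the way the AGENT.md paths are passed in, and the blank-line separator are all assumptions made for illustration.

```python
from pathlib import Path


def build_system_message(agent_message: str, agent_md_paths: list[Path]) -> str:
    """Join the agent's own message with the contents of any AGENT.md files.

    Nothing else is added: no hidden preamble, no default persona.
    (Hypothetical helper; file discovery and ordering are assumptions.)
    """
    parts = [agent_message.strip()]
    for path in agent_md_paths:
        # Silently skip paths that do not exist.
        if path.is_file():
            parts.append(path.read_text(encoding="utf-8").strip())
    # Drop empty pieces so a blank file does not leave stray separators.
    return "\n\n".join(part for part in parts if part)
```

With an agent message of "You are a recipe planner." and one AGENT.md that says "Prefer short answers.", the model would see exactly those two paragraphs and nothing more.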

No baked-in tools

Well, that's not completely true. Technically, MCP handling, skill lookup, and subagents are tools, but nothing else is baked in. Tools are completely language-agnostic. They're spawned on demand and go through an explicit, upfront security check that you define (if you want one). You can yank anything out and drop anything in. All input is sent to a tool as JSON on stdin, and all results are expected as JSON on stdout.
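Because the contract is just JSON in on stdin and JSON out on stdout, a tool can be a few lines in any language. The sketch below shows the shape in Python. The field names (`text`, `ok`, `word_count`, `error`) and the exit-code convention are made up for this example and are not Jean2's actual schema.

```python
import json
import sys
from typing import TextIO


def handle(request: dict) -> dict:
    """Hypothetical tool logic: count the words in request["text"]."""
    text = request.get("text", "")
    if not isinstance(text, str):
        return {"ok": False, "error": "'text' must be a string"}
    return {"ok": True, "word_count": len(text.split())}


def main(stdin: TextIO = sys.stdin, stdout: TextIO = sys.stdout) -> int:
    """Read one JSON object from stdin, write one JSON object to stdout."""
    try:
        request = json.loads(stdin.read())
        if not isinstance(request, dict):
            raise ValueError("input must be a JSON object")
        response = handle(request)
    except ValueError as exc:  # json.JSONDecodeError is a ValueError subclass
        response = {"ok": False, "error": str(exc)}
    json.dump(response, stdout)
    stdout.write("\n")
    # Assumed convention: 0 on success, 1 on failure.
    return 0 if response["ok"] else 1
```

To use this as a standalone executable, end the script with `raise SystemExit(main())`. Piping `{"text": "hello brave new world"}` into it would then print a JSON object with a `word_count` of 4.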

There's also a built-in visualization property that lets the tool decide how to display its results: diff, code, markdown, table, todo list, you name it. (Naturally, this data is omitted from the API requests sent to the LLM.)
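The split between what the client renders and what the model sees can be pictured as a simple filter over the tool result. This is only a sketch: the key name `visualization`, the `output` field, and the helper `to_llm_payload` are assumptions, and the real result schema may look different.

```python
from typing import Any

# Hypothetical key names; the real schema may differ.
UI_ONLY_KEYS = frozenset({"visualization"})


def to_llm_payload(tool_result: dict[str, Any]) -> dict[str, Any]:
    """Return a copy of the tool result without the UI-only keys."""
    return {key: value for key, value in tool_result.items() if key not in UI_ONLY_KEYS}


# The client would render the diff; the model would only receive "output".
tool_result = {
    "output": "2 files changed",
    "visualization": {"type": "diff", "content": "- old line\n+ new line"},
}
llm_payload = to_llm_payload(tool_result)
```

Keeping the display hints out of the request means rich rendering costs no extra tokens.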

Important: You are in control of each tool's definition and description. If you notice a model not using a tool properly, you can just change the definition or the description.

What's Next

Jean2 is still in active development — but it's very usable. I've been coding with it for over a week.

The next steps are:

- SDK & structured output, so you can create your own apps with agent orchestration
- Multimodal model support
- Expanded tool offering; fal.ai tools for image generation seem like a natural fit

If this sounds even a little interesting, check out jean2.ai.

Got questions, ideas for tools, or tried it out and got lost in the setup? Hit me up at @danielbilekq0.

Sign in to highlight and annotate this article

Conversation starters

Daily AI Digest

Get the top 5 AI stories delivered to your inbox every morning.

More about

modelmillionreview

512,000 lines of leaked AI agent source code, three mapped attack paths, and the audit security leaders need now - VentureBeat

<a href="https://news.google.com/rss/articles/CBMipwFBVV95cUxOSUNMNjhmR3dMOHNWalBwYjJScDZIT25DMjdNc1JqLXJPMWJIOFc4akMtMXBMeDRFaTdfalQ2aFdDMmVETmQtd1Rjd2dWSG1zdU1CTzFPTEZDTzY5UU5uVTJzT2I1V0hWRnUwdW1fQW9PYWdkbGJhQUk3a1E5TmpNUTBMUVFlTGYyS2laZGdNZDROOTNNd3VRN3dXZzB3MDdQcWpha1pTUQ?oc=5" target="_blank">512,000 lines of leaked AI agent source code, three mapped attack paths, and the audit security leaders need now</a> <font color="#6f6f6f">VentureBeat</font>

Anthropic Races to Contain Leak of Code Behind Claude AI Agent - wsj.com

<ol><li><a href="https://news.google.com/rss/articles/CBMipgNBVV95cUxQUXVJUFM0dFVHZVJlbFlrbE9VYzNsVURjNTRrRVpUOFhyMUp5Sl9CRlJjOTNaOVZBaFFHalEtSEt4UmVmV19JQUFma3ZGMHZoRTRmQjRCZHJPLXJ0NmtuUzBzNlZEUGgycHNQUVVpbnEyekRMTHVGSDVUNXo2ZUpmdUVYNlJtX3Jpam5Ga19tcTQ3R1kzVlJXRkJQSlFUQmNoUTRJNUFTS01rQUc3a0xKZWlsZ1VRcjFQamY4MGdMYWlleUR6MXNjZ1ppZ014bFlRUjU4cFhram1QUFNoRE44aXN2OV9RVFFDYnRtSHJHTGlQUTlVWS05dENIeDBCVzdwbWlPUF9tZXR6MEZZMzBTajJEUFRESzZhTVJvZDdyWkpnaWlrVjBfeEZEcHp0WXdrcC15cXloRGhBYkNzdGZjRkswLXhmWVo0Sm84Z1RfMTN0ZUZIYnBLWU1OOFJOTFZKd1E4ejNSaDJzUUYzaUdrRjNGZng3OWxicTRrbzdzRnZVNE1reHdrUDBsN1RndEJXb1RHV3AxSXA1MkpIRFBHaHpIYnVpQQ?oc=5" target="_blank">Anthropic Races to Contain Leak of Code Behind Claude AI Agent</a> <font color="#6f6f6f">wsj.com</font></li><li><a href="https://news.google.com/rss/arti

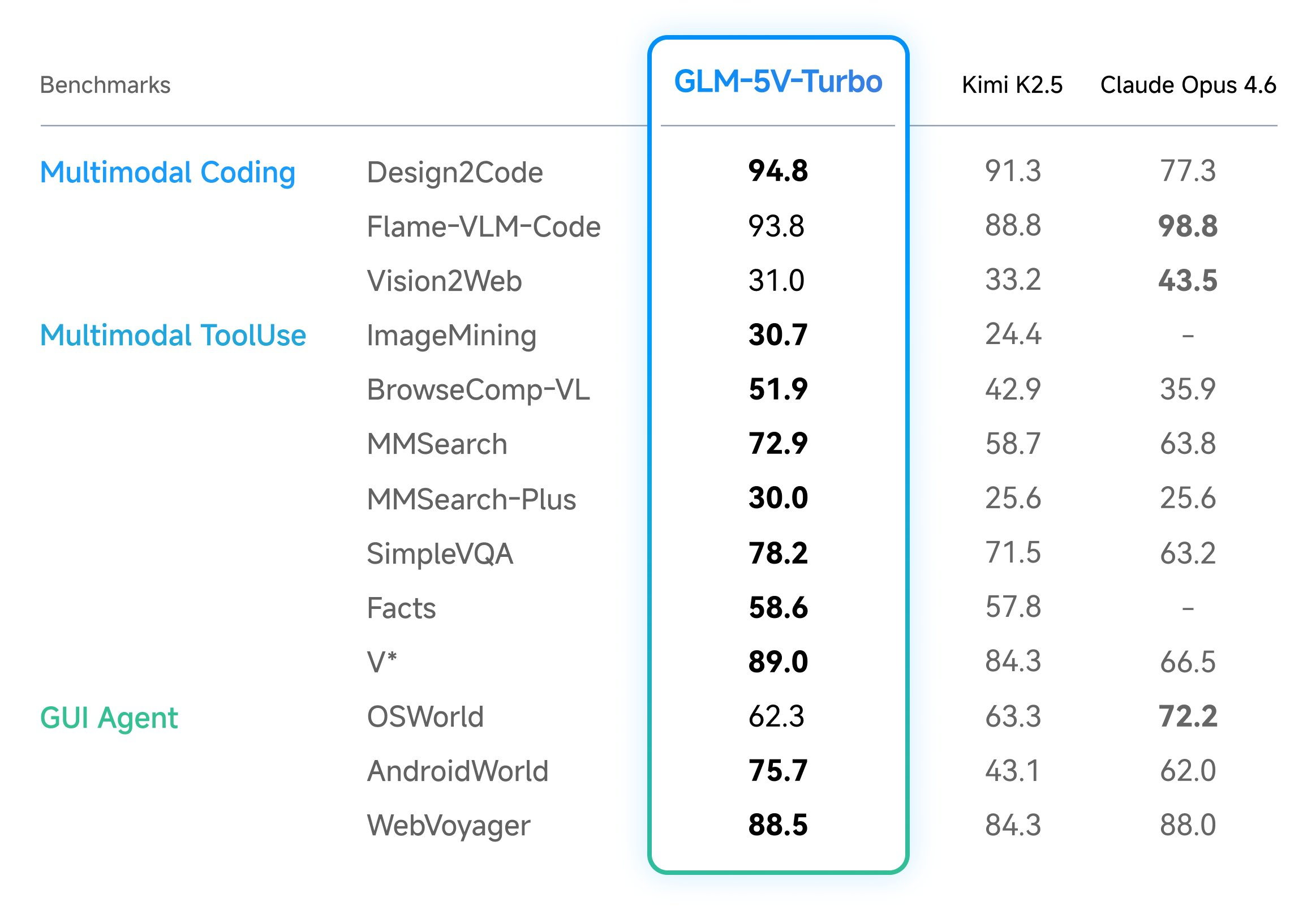

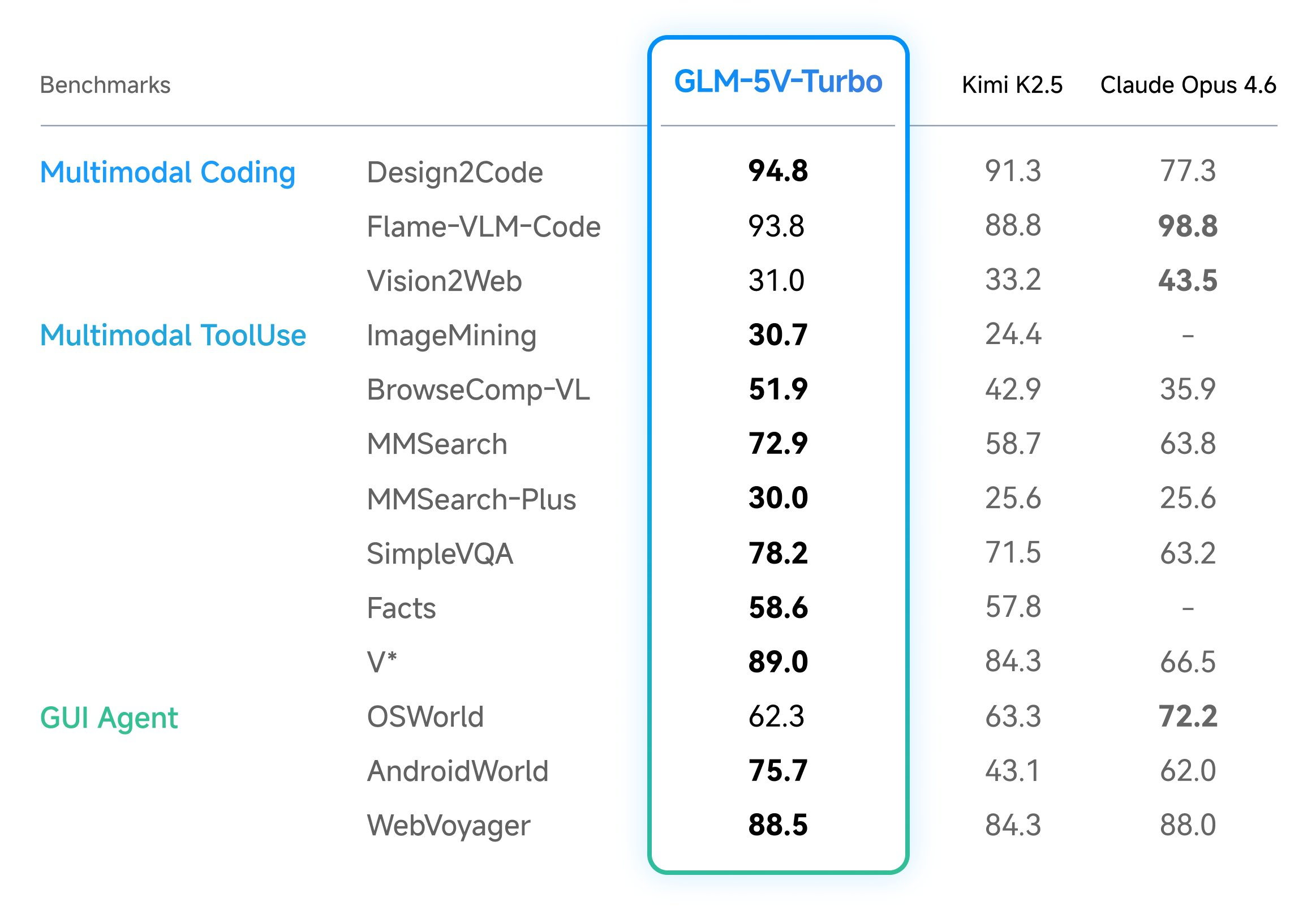

Z.ai Launches GLM-5V-Turbo: A Native Multimodal Vision Coding Model Optimized for OpenClaw and High-Capacity Agentic Engineering Workflows Everywhere

In the field of vision-language models (VLMs), the ability to bridge the gap between visual perception and logical code execution has traditionally faced a performance trade-off. Many models excel at describing an image but struggle to translate that visual information into the rigorous syntax required for software engineering. Zhipu AI’s (Z.ai) GLM-5V-Turbo is a vision […] The post Z.ai Launches GLM-5V-Turbo: A Native Multimodal Vision Coding Model Optimized for OpenClaw and High-Capacity Agentic Engineering Workflows Everywhere appeared first on MarkTechPost .

Knowledge Map

Connected Articles — Knowledge Graph

This article is connected to other articles through shared AI topics and tags.

More in Models

Exclusive | The Sudden Fall of OpenAI’s Most Hyped Product Since ChatGPT - wsj.com

<a href="https://news.google.com/rss/articles/CBMiogNBVV95cUxPTmtKMzM0aFA2dXlOc1ZnWlpCN1c0WEotQnNlSENhVlN3S3ZoRmhoeHMzMzU1TWpzTTh2N0Q2OUxkMkNfNF9UVll3WF9DWkJPTGpFOVV0ZXNTWkdLb2lJQk9wcGFHdDVHVE9YeFhrSHJHOTJ4YjFILUltV0V4YUFTaGJjTDNUMHVWSUpQc21pNjVRWUwtYUdvSzA3VS1udmh0MnBuN0ctaE9fWEJuZXpLcUp6OFcxSjZxbmhmMkJhVUlTSGZCSWhhYnVLa21zRVZUMTZleWQzc05rVUZtTDhZTmtPanhQTk01c0VCa3JWVXVTNlR3R19oamx6dE0wSFUxUUhTTEIxSHBwVVZjcm9FbFJHalBKZ29IWmM0aGxhUm5KbS1weVhwWDZHR3c5Q084YWxGanpDQTJySHRxWVFNOFNaZGxMZjBoeUhqcUtPVVRKMHA4Rkl0SmFzalZiamNLTnR0MGpzSTZ1M3hQTXhMUmg0ZVp1MUJFcWZNZ19GT3Zid3JCb2dKZFNVX2EwcWZXMmc1ZEJVbXJUSm9nLTNxWjBB?oc=5" target="_blank">Exclusive | The Sudden Fall of OpenAI’s Most Hyped Product Since ChatGPT</a> <font color="#6f6f6f">wsj.com</font>

Exclusive | The Sudden Fall of OpenAI’s Most Hyped Product Since ChatGPT - wsj.com

<a href="https://news.google.com/rss/articles/CBMiogNBVV95cUxOejRwbUJkOHRhVGRaQ1FLeHQwYjVoakxuTFVPN3c5Z0RIdmdjTW5LdlpSbVppWGl2WTg5dzdEYmpsekFIMWxaamRWRUN3V0NBdS1rYklHT0ktOEZud0tYOHNqc1ZhMmo0UGMybHFWR0RIRWZWMGI3dzF4Umw5ZTNBOXY0OF9PNEJDbWxnUUNzQ3h2U0lubEFUQXpHY1ctSjkxV0pSUGZRTnVZUGdrMndIaGJLcERzM2t6RDA3ZXpYeWU2R3lQVXpUZW91NHpIbTlpbHRMYjg5MGxUa2QzRVJoU09LMjQzc1lEN3VVZ2hzRkxRdUtJVmtKTmMwMHRTUEJaazJmcTMycEdNY3J6T3BCLVBoZGVTV2ZoNXVKS09JUGFuaURobmlmY0l3X0xEVWFfTTBLOHdYVExhcXREcnNWUFZNb1NPR2dKc2ZFcEVrZWltcndFSFZlb0RtSlc1djc3SHlpQVVPUzNaOFpPLVhBeFlLQUxvYnctOG9SRzVTbjJkQVVsaWpIN0dnUDJvdEIzby0tbXhZNy1PQ0NSYy1URThn?oc=5" target="_blank">Exclusive | The Sudden Fall of OpenAI’s Most Hyped Product Since ChatGPT</a> <font color="#6f6f6f">wsj.com</font>

Z.ai Launches GLM-5V-Turbo: A Native Multimodal Vision Coding Model Optimized for OpenClaw and High-Capacity Agentic Engineering Workflows Everywhere

In the field of vision-language models (VLMs), the ability to bridge the gap between visual perception and logical code execution has traditionally faced a performance trade-off. Many models excel at describing an image but struggle to translate that visual information into the rigorous syntax required for software engineering. Zhipu AI’s (Z.ai) GLM-5V-Turbo is a vision […] The post Z.ai Launches GLM-5V-Turbo: A Native Multimodal Vision Coding Model Optimized for OpenClaw and High-Capacity Agentic Engineering Workflows Everywhere appeared first on MarkTechPost .

How AI Is Changing PTSD Recovery — And Why It Matters

<h2> The Silent Epidemic </h2> <p>PTSD affects over 300 million people worldwide. In Poland alone, an estimated 2-3 million people live with trauma-related disorders — and most never seek help. The reasons are universal: stigma, cost, waitlists that stretch for months, and the sheer difficulty of walking into a therapist's office when your nervous system screams <em>danger</em> at every social interaction.</p> <p>I know this because I built ALLMA — an AI psychology coach — not from a business plan, but from personal need.</p> <h2> What Traditional Therapy Gets Right (And Where It Falls Short) </h2> <p>Let me be clear: AI doesn't replace therapists. A good therapist is irreplaceable. But here's what the data shows:</p> <ul> <li> <strong>Average wait time</strong> for a psychiatrist in Polan

Discussion

Sign in to join the discussion

No comments yet — be the first to share your thoughts!