Blocking the Internet Archive Won’t Stop AI, But It Will Erase the Web’s Historical Record

Imagine a newspaper publisher announcing it will no longer allow libraries to keep copies of its paper.

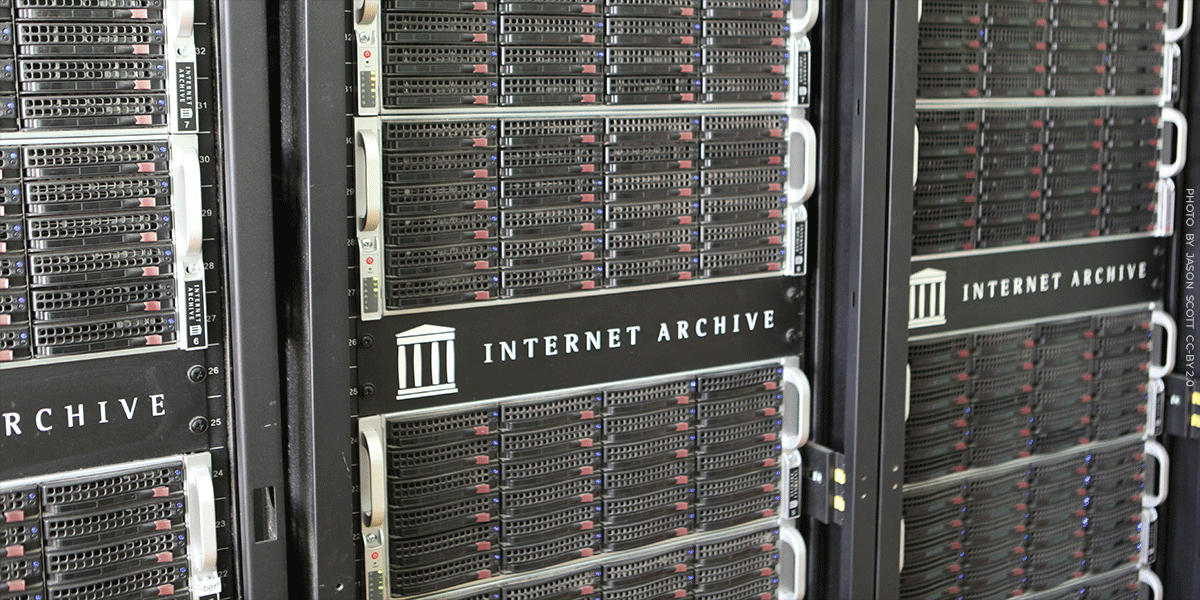

That’s effectively what’s begun happening online in the last few months. The Internet Archive—the world’s largest digital library—has preserved newspapers since it went online in the mid-1990s. The Archive’s mission is to preserve the web and make it accessible to the public. To that end, the organization operates the Wayback Machine, which now contains more than one trillion archived web pages and is used daily by journalists, researchers, and courts.

But in recent months, The New York Times began blocking the Archive from crawling its website, using technical measures that go beyond the web’s traditional robots.txt rules. That risks cutting off a record that historians and journalists have relied on for decades. Other newspapers, including The Guardian, seem to be following suit.

For nearly three decades, historians, journalists, and the public have relied on the Internet Archive to preserve news sites as they appeared online. Those archived pages are often the only reliable record of how stories were originally published. In many cases, articles get edited, changed, or removed—sometimes openly, sometimes not. The Internet Archive often becomes the only source for seeing those changes. When major publishers block the Archive’s crawlers, that historical record starts to disappear.

The Times says the move is driven by concerns about AI companies scraping news content. Publishers seek control over how their work is used, and several—including the Times—are now suing AI companies over whether training models on copyrighted material violates the law. There’s a strong case that such training is fair use.

Whatever the outcome of those lawsuits, blocking nonprofit archivists is the wrong response. Organizations like the Internet Archive are not building commercial AI systems. They are preserving a record of our history. Turning off that preservation in an effort to control AI access could essentially torch decades of historical documentation over a fight that libraries like the Archive didn’t start, and didn’t ask for.

If publishers shut the Archive out, they aren’t just limiting bots. They’re erasing the historical record.

Archiving and Search Are Legal

Making material searchable is a well-established fair use. Courts have long recognized it’s often impossible to build a searchable index without making copies of the underlying material. That’s why when Google copied entire books in order to make a searchable database, courts rightly recognized it as a clear fair use. The copying served a transformative purpose: enabling discovery, research, and new insights about creative works.

The Internet Archive operates on the same principle. Just as physical libraries preserve newspapers for future readers, the Archive preserves the web’s historical record. Researchers and journalists rely on it every day. According to Archive staff, Wikipedia alone links to more than 2.6 million news articles preserved at the Archive, spanning 249 languages. And that’s only one example. Countless bloggers, researchers, and reporters depend on the Archive as a stable, authoritative record of what was published online.

The same legal principles that protect search engines must also protect archives and libraries. Even if courts place limits on AI training, the law protecting search and web archiving is already well established.

The Internet Archive has preserved the web’s historical record for nearly thirty years. If major publishers begin blocking that mission, future researchers may find that huge portions of that historical record have simply vanished. There are real disputes over AI training that must be resolved in courts. But sacrificing the public record to fight those battles would be a profound, and possibly irreversible, mistake.

Electronic Frontier Foundation

https://www.eff.org/deeplinks/2026/03/blocking-internet-archive-wont-stop-ai-it-will-erase-webs-historical-record