AURA: Multimodal Shared Autonomy for Real-World Urban Navigation

arXiv:2604.01659v1 Announce Type: new


Abstract: Long-horizon navigation in complex urban environments relies heavily on continuous human operation, which leads to fatigue, reduced efficiency, and safety concerns. Shared autonomy, where a Vision-Language AI agent and a human operator collaborate on maneuvering the mobile machine, presents a promising solution to address these issues. However, existing shared autonomy methods often require humans and AI to operate within the same action space, leading to high cognitive overhead. We present Assistive Urban Robot Autonomy (AURA), a new multi-modal framework that decomposes urban navigation into high-level human instruction and low-level AI control. AURA incorporates a Spatial-Aware Instruction Encoder to align various human instructions with visual and spatial context. To facilitate training, we construct MM-CoS, a large-scale dataset comprising teleoperation and vision-language descriptions. Experiments in simulation and the real world demonstrate that AURA effectively follows human instructions, reduces manual operation effort, and improves navigation stability, while enabling online adaptation. Moreover, under similar takeover conditions, our shared autonomy framework reduces the frequency of takeovers by more than 44%. A demo video and more details are provided on the project page.

Comments: 17 pages, 18 figures, 4 tables, conference

Subjects: Robotics (cs.RO)

Cite as: arXiv:2604.01659 [cs.RO]

(or arXiv:2604.01659v1 [cs.RO] for this version)

https://doi.org/10.48550/arXiv.2604.01659

arXiv-issued DOI via DataCite (pending registration)

Submission history

From: Yukai Ma
[v1] Thu, 2 Apr 2026 05:59:31 UTC (24,086 KB)

Sign in to highlight and annotate this article

Conversation starters

Daily AI Digest

Get the top 5 AI stories delivered to your inbox every morning.

More about

trainingannouncesafety

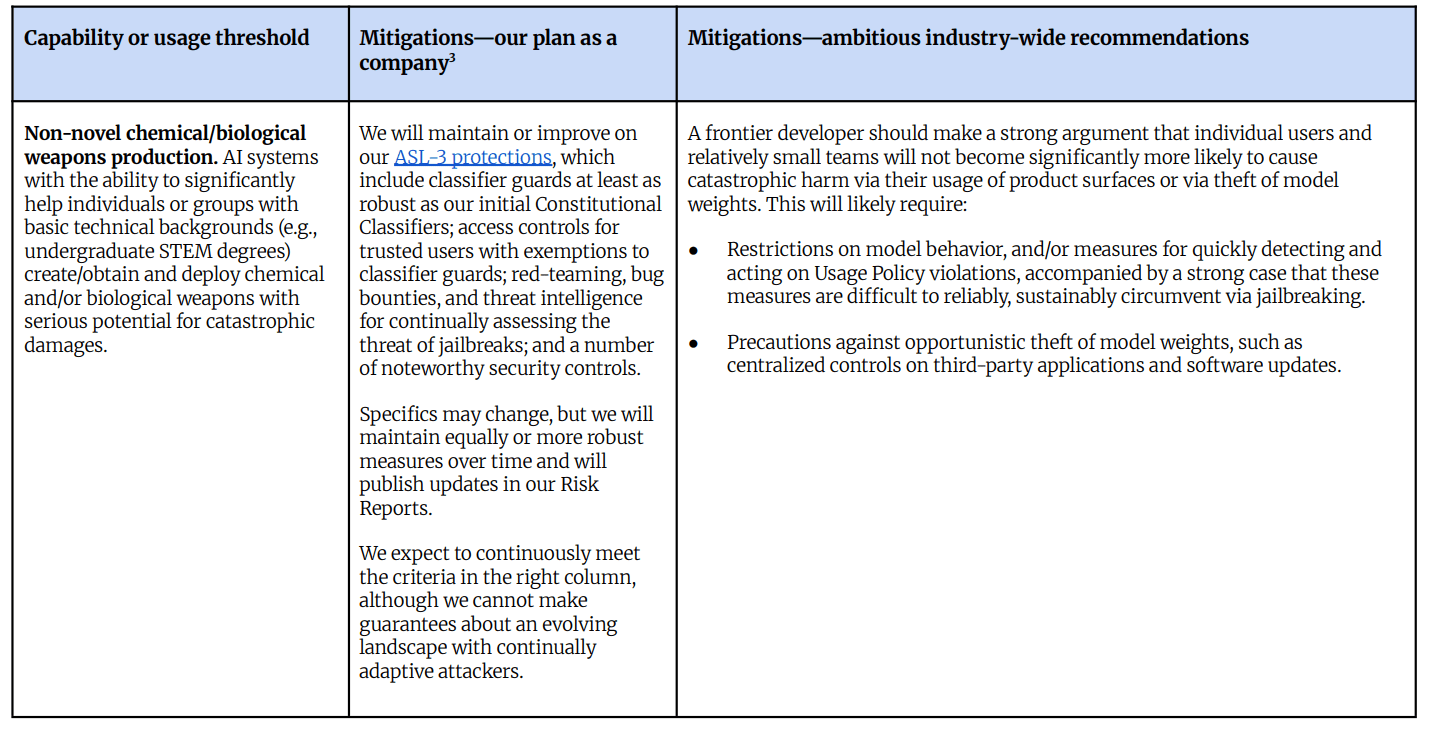

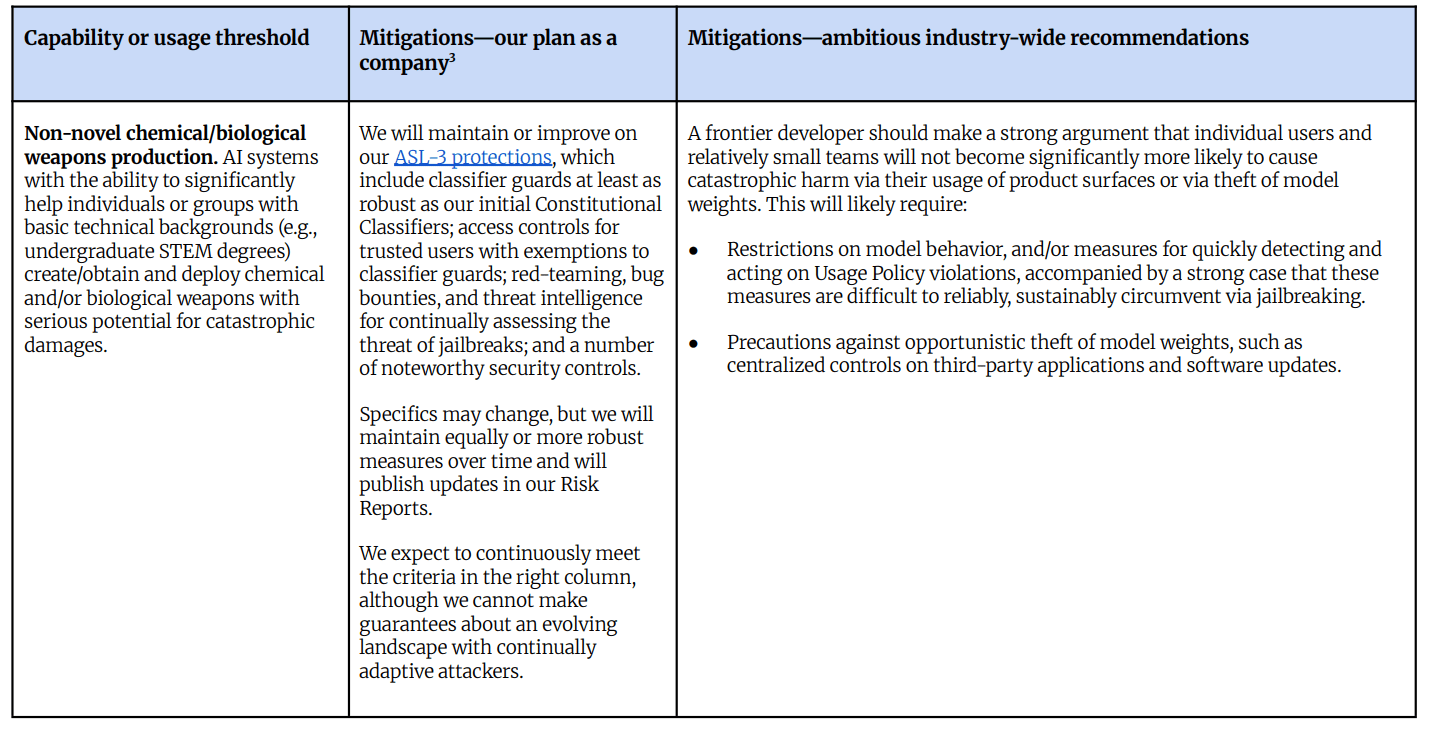

