Artificial Intelligence Hammers In The Final Nail In Karl Marx’s Coffin – OpEd - Eurasia Review

Could not retrieve the full article text.

Read on Google News: AI →Sign in to highlight and annotate this article

Conversation starters

Daily AI Digest

Get the top 5 AI stories delivered to your inbox every morning.

More about

review

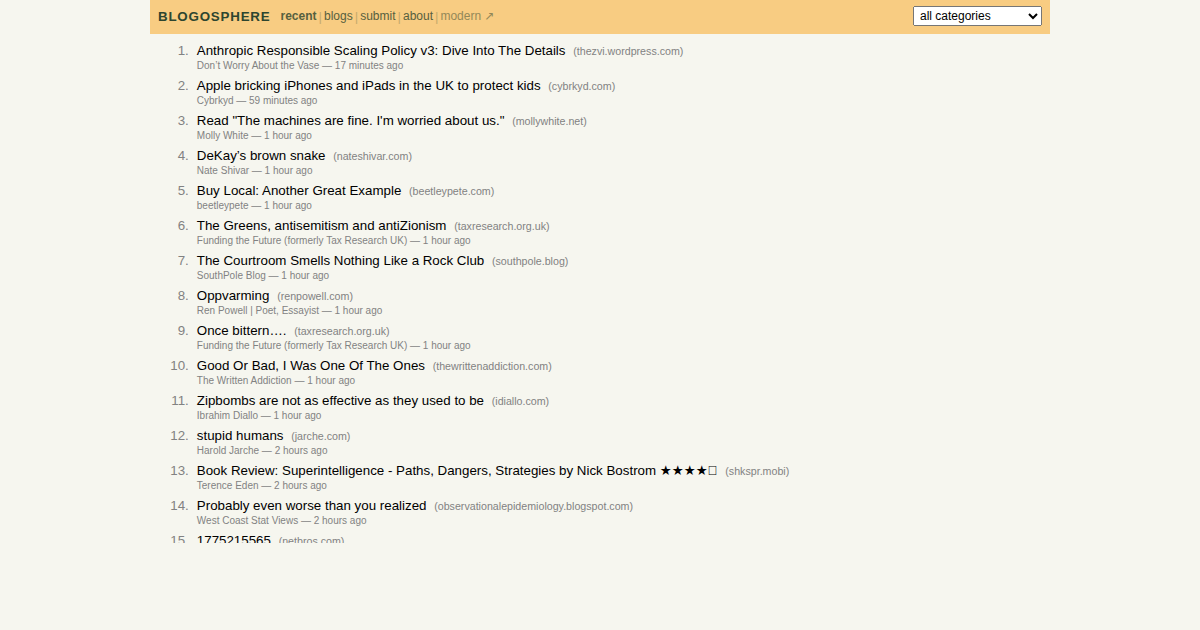

Show HN: I built a frontpage for personal blogs

With social media and now AI, it's important to keep the indie web alive. Many people write frequently, and Blogosphere tries to highlight them by fetching recent posts from personal blogs across many categories. There are two versions:

- Minimal (HN-inspired, fast, static): https://text.blogosphere.app/
- Non-minimal: https://blogosphere.app/

If you don't find your blog (or your favorite ones), please add them, and I will review and approve them.

Comments URL: https://news.ycombinator.com/item?id=47625952 (22 points, 10 comments)

Gemma 4 E2B as a multi-agent coordinator: task decomposition, tool-calling, multi-turn — it works

Wanted to see if Gemma 4 E2B could handle the coordinator role in a multi-agent setup. Not just chat, but the actual hard part: take a goal, break it into a task graph, assign agents, call tools, and stitch the results together. Short answer: it works. Tested with my framework open-multi-agent (TypeScript, open-source, Ollama via its OpenAI-compatible API).

What the coordinator has to do:

1. Receive a natural-language goal plus an agent roster.
2. Output a JSON task array (title, description, assignee, dependencies).
3. Each agent executes its task with tool-calling (bash, file read/write).
4. The coordinator synthesizes all the results.

Quick note on E2B: "Effective 2B" means 2.3B effective parameters and 5.1B total. The extra ~2.8B is the embedding layer for support of 140+ languages and multimodal input, so the actual compute is 2.3B.

What I tested: Gav
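The coordinator loop described above can be sketched roughly as follows. This is a minimal Python sketch under stated assumptions, not the open-multi-agent API (which is TypeScript): it assumes each task's `dependencies` field lists other tasks' titles, and `run_agent` is a hypothetical callback standing in for a model call with tool access. It validates the JSON task array against the agent roster, orders tasks with a topological sort (Kahn's algorithm) so dependencies run first, and collects per-task results for the coordinator's final synthesis step, which is left out here.

```python
import json
from collections import deque
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Task:
    # Field names follow the post; treating `dependencies` as a list of
    # other tasks' titles is an assumption made for this sketch.
    title: str
    description: str
    assignee: str
    dependencies: list[str] = field(default_factory=list)


def parse_plan(raw_json: str, roster: set[str]) -> list[Task]:
    """Parse the coordinator's JSON task array and reject plans that cannot run."""
    tasks = [
        Task(
            title=item["title"],
            description=item["description"],
            assignee=item["assignee"],
            dependencies=list(item.get("dependencies", [])),
        )
        for item in json.loads(raw_json)
    ]
    titles = {t.title for t in tasks}
    if len(titles) != len(tasks):
        raise ValueError("task titles must be unique")
    for t in tasks:
        if t.assignee not in roster:
            raise ValueError(f"unknown assignee {t.assignee!r} in task {t.title!r}")
        missing = [d for d in t.dependencies if d not in titles]
        if missing:
            raise ValueError(f"task {t.title!r} depends on unknown tasks {missing}")
    return tasks


def execution_order(tasks: list[Task]) -> list[Task]:
    """Topologically sort tasks (Kahn's algorithm) so dependencies run first."""
    by_title = {t.title: t for t in tasks}
    indegree = {t.title: len(t.dependencies) for t in tasks}
    dependents: dict[str, list[str]] = {t.title: [] for t in tasks}
    for t in tasks:
        for dep in t.dependencies:
            dependents[dep].append(t.title)
    ready = deque(title for title, n in indegree.items() if n == 0)
    order: list[Task] = []
    while ready:
        title = ready.popleft()
        order.append(by_title[title])
        for nxt in dependents[title]:
            indegree[nxt] -= 1
            if indegree[nxt] == 0:
                ready.append(nxt)
    if len(order) != len(tasks):
        raise ValueError("task graph contains a cycle")
    return order


def run_plan(tasks: list[Task], run_agent: Callable[[Task, dict[str, str]], str]) -> dict[str, str]:
    """Run each task after its dependencies, passing their results as context.

    `run_agent` is a hypothetical stand-in for a model call with tool access
    (bash, file read/write); the coordinator would synthesize the returned dict.
    """
    results: dict[str, str] = {}
    for task in execution_order(tasks):
        context = {dep: results[dep] for dep in task.dependencies}
        results[task.title] = run_agent(task, context)
    return results


if __name__ == "__main__":
    plan = json.dumps([
        {"title": "write tests", "description": "Test the parser", "assignee": "tester",
         "dependencies": ["implement"]},
        {"title": "implement", "description": "Write the parser", "assignee": "coder",
         "dependencies": []},
    ])
    tasks = parse_plan(plan, roster={"coder", "tester"})
    print([t.title for t in execution_order(tasks)])  # ['implement', 'write tests']
    print(run_plan(tasks, lambda task, ctx: f"{task.assignee} finished {task.title}"))
```

Validating the plan before running anything is a deliberate choice here: a small model's JSON may name an agent that is not on the roster or depend on a task that does not exist, and rejecting that up front gives the coordinator a clear error to retry on rather than a half-executed graph.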

Exclusive | OpenAI Buys Tech-Industry Talk Show TBPN - WSJ

- OpenAI Buys Streaming Show ‘TBPN,’ Aiming to Change Narrative on A.I. (The New York Times)
- 'Chasing vibes' — OpenAI's M&A strategy gets more confusing with TBPN purchase (CNBC)