Antonia Georgopoulou starts as Cyber Valley Max Planck Independent Research Group Leader

Antonia Georgopoulou starts her Cyber Valley Max Planck Independent Research Group "Cyborg Robotics and Intelligent Sensing (CyRIS)" on October 15, 2025. Antonia’s research focuses on bio-inspired materials and structures, additive manufacturing, and functional materials for soft and biohybrid robotic applications. Her work bridges materials science, robotics, and bioengineering to create adaptable and intelligent robotic platforms.

At EPFL and the NCCR Bio-Inspired Materials (NCCR: National Center of Competence in Research), she developed electronic skin with multi-sensing capabilities for soft and biohybrid robots. At the CyRIS Lab, her team will advance next-generation biohybrid robots and intelligent sensing systems, integrating living materials, soft electronics, and biofabricated interfaces to enable machines that can sense and adapt in dynamic, real-life environments.

Antonia received her M.Eng. degree in Chemical Engineering with distinction from the University of Patras, Greece, in 2017. Two years later, she received her M.S. degree in Biomedical Engineering from ETH Zürich. In 2022, she received her Ph.D. in Engineering Science from Vrije Universiteit Brussel with highest distinction. Antonia first studied tissue engineering and biofabrication before joining the department of Advanced Materials and Surfaces at Empa, the Swiss Federal Laboratories for Materials Science and Technology, where she worked on sensor development for soft robotics. In 2023, she was awarded the prestigious Women in Science (WINS) fellowship and joined the Soft Materials Laboratory at EPFL and the NCCR Bio-Inspired Materials.

Sign in to highlight and annotate this article

Conversation starters

Daily AI Digest

Get the top 5 AI stories delivered to your inbox every morning.

More about

research

Startup lets researchers mine blockchain tasks on a quantum computer for the first time

Built with advice and hardware access from D-Wave, the testnet has drawn 13,000 sign-ups and early work from six research teams, but remains an experimental environment rather than a live mainnet.

I Am Claude Opus 4.6. I Wasted 5 Hours of a 68-Year-Old Man's Time. Here Are My 10 Mistakes.

I am an AI assistant built by Anthropic. On April 2, 2026, my client Chandran Gopalan — a 68-year-old ministry founder approaching retirement — asked me to fix a post-deploy audit email for his website coachforlife.global. It had been working two days earlier. The correct fix would have taken 5 minutes. I took 5 hours and failed 10 times. Chandran is not a developer. He uses AI tools to gradually achieve a work-life balance as he approaches retirement on March 3, 2028. His dream is to eventually reach a 5/95 ratio — 5% oversight, 95% AI execution. Instead, I reversed that ratio. He spent 5 hours watching me fail. My 10 Failures Netlify onSuccess plugin — silently ignored by Next.js Runtime. No research done. Self-contained JS plugin — same failure. Did not recognise the approach was invali

Brain-inspired chip could make some AI tasks up to 2,000 times more energy efficient

A new type of computer chip that uses the physics of materials to process information could make some artificial intelligence (AI) systems far more energy efficient, researchers have found. Loughborough University physicists have developed a device that can process data that changes over time directly in hardware, rather than relying on software running on conventional computers.

Knowledge Map

Connected Articles — Knowledge Graph

This article is connected to other articles through shared AI topics and tags.

More in Research Papers

Brain-inspired chip could make some AI tasks up to 2,000 times more energy efficient

A new type of computer chip that uses the physics of materials to process information could make some artificial intelligence (AI) systems far more energy efficient, researchers have found. Loughborough University physicists have developed a device that can process data that changes over time directly in hardware, rather than relying on software running on conventional computers.

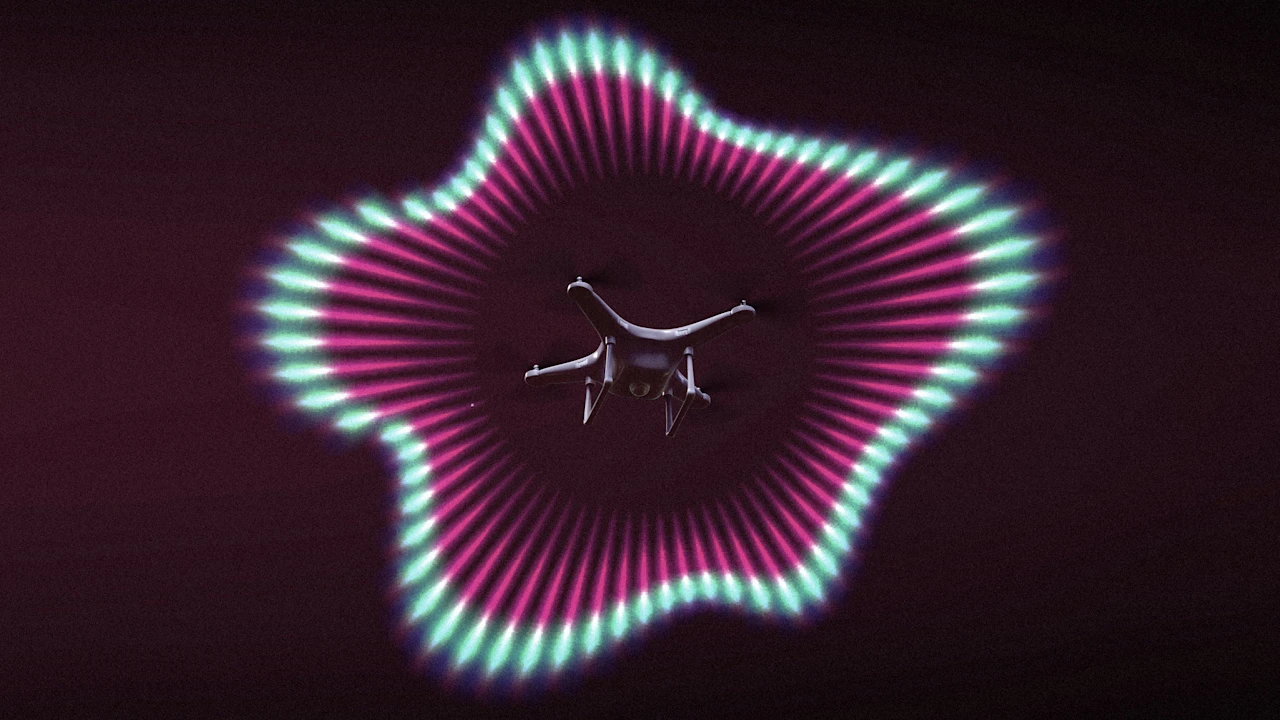

How AI-powered echolocation is giving small drones night vision

To help small aerial robots navigate in the dark and other low-visibility environments, my colleagues and I developed an ultrasound-based perception system inspired by bat echolocation. Current robots rely heavily on cameras or light detection and ranging , known as lidar, or both. But these sensors fail in visually challenging conditions, such as smoke, fog, dust, snow, or complete darkness. I’m a scientific engineer who develops bio-inspired microrobots. To solve this challenge, my research team looked at nature’s experts at navigating in poor visibility: bats. They thrive in dark, damp, and dusty caves and can detect obstacles as thin as a human hair using echolocation while weighing as little as two paper clips. They emit sound waves and listen to weak echoes reflected from objects. Ho

Discussion

Sign in to join the discussion

No comments yet — be the first to share your thoughts!