AI, Price Theory, and the Future of Economics Research

Article URL: https://knowledgeproblem.substack.com/p/ai-price-theory-and-the-future-of

Economists spend their lives studying how people respond when circumstances change. It would be odd if we failed to apply the same logic to ourselves.

Artificial intelligence is not just another research tool. It is a technology shock to the academic knowledge economy. It lowers the cost of some inputs into research, raises the relative value of others, and begins to change what the profession rewards. That shift matters not only for how economists work, but also for which skills and forms of judgment are likely to become more valuable.

For the past several decades, economics has been shaped by a particular methodological equilibrium. The profession rewarded scholars who could assemble large datasets, digitize new sources of information, and use increasingly sophisticated econometric tools to extract credible causal estimates. This development improved economics in important ways by imposing discipline, raising standards of evidence, and moving the field away from loose speculation untethered from the world.

But tradeoffs are everywhere, including in research methods.

Even as it raised standards, the causal-inference era changed the kinds of questions economists asked. Because credible identification strategies usually require narrow treatments, clean variation, tractable settings, and measurable outcomes, the profession gradually tilted toward questions that fit the available empirical machinery. The result has been a great deal of technically impressive work, but also, too often, a narrowing of intellectual ambition. Economics became more a field of data analysis and less a field of deeper inquiry into institutions, governance, organizational form, and the structure of human choice.

AI may now disrupt that equilibrium.

A growing number of economists have begun thinking seriously about what AI means for research. Anton Korinek has traced the progression from AI assistance on research micro-tasks to semi-autonomous agents that can work across multi-step workflows (JEL 2023, JEL 2025). Matthew Kahn emphasizes the fall in the fixed costs of pursuing ideas, Kevin Munger the rising importance of evaluation when production becomes cheap, and others have begun to map the broader consequences (for example, Alex Kustov, Scott Cunningham, and Chris Blattman’s Claude Blattman). I agree with much of that discussion. But the best way to understand what is happening is through price theory.

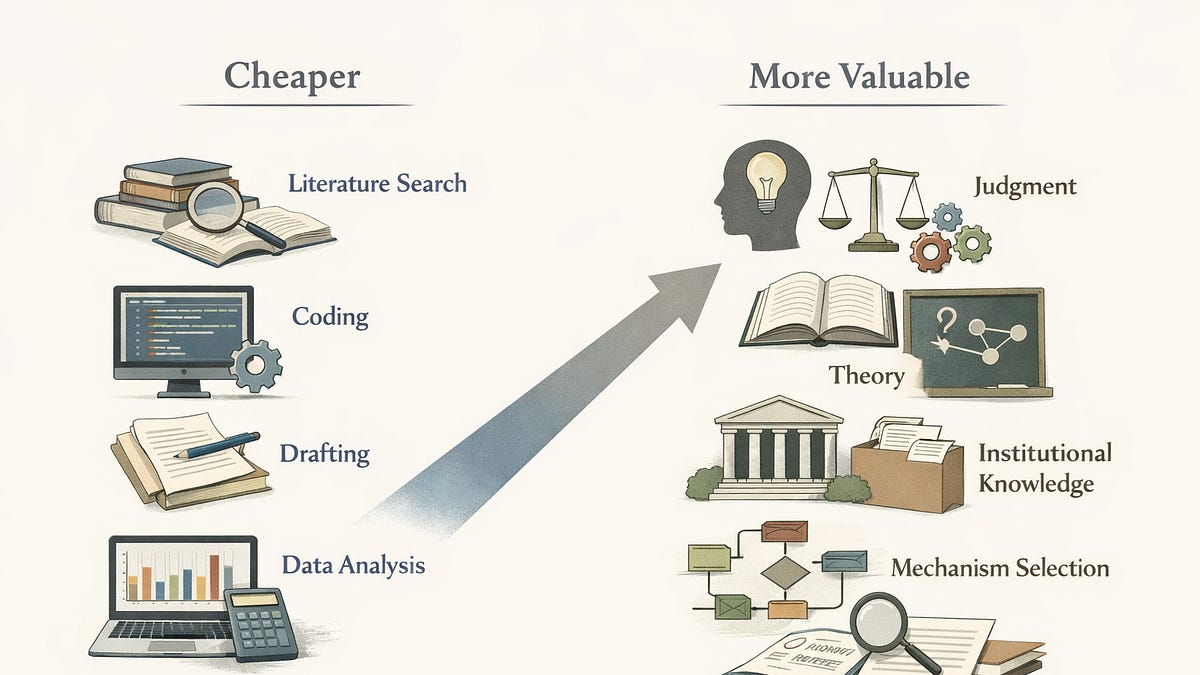

AI is a shock to the relative prices, values, and costs inside the academic knowledge economy. It cheapens some inputs, raises the relative value of others, and therefore changes what the profession rewards.

The clearest place to see the shift is in the falling cost of routine cognitive labor. Literature search, synthesis, coding, formatting, drafting, referee-response generation, specification exploration, and some forms of data cleaning and visualization are all becoming cheaper. Not free, and not perfect, but much cheaper than they were even two years ago. Tasks that once required hours of faculty time, graduate-student time, or RA time can now be completed in minutes, often at surprisingly high quality, though still with the need for human review.

The issue is not whether AI can produce useful work; it plainly can. The issue is that once many more people can produce plausible literature reviews, decent code, competent drafts, and standard empirical analyses, those things no longer define scholarly distinction in the way they once did. The floor rises, but the skill premium shifts.

And that shift raises the value of something else: judgment. In a world where production becomes abundant, discernment becomes relatively scarce and thus relatively more valuable. What matters more is the ability to decide what is worth studying, which mechanism is first-order, which institution matters, what assumptions are plausible, which results travel across contexts, and what pattern in the evidence actually matters.

This point is easy to miss because economics has often conflated “theory” with formal mathematical modeling. But theory in the deeper sense is economic reasoning: the disciplined application of principles and logic to understand behavior, tradeoffs, institutions, and constraints. It is the ability to ask the right price-theoretic question: what changed, what became cheaper, what became more valuable, what bottleneck moved, what margin now matters?

On that understanding, AI should not push economics away from theory. It should raise the return to deeper economic reasoning.

This is where Tyler Cowen’s new work, published last week, is both provocative and, I think, wrong in an instructive way. In Chapter 4 of The Marginal Revolution, Cowen argues that machine learning and AI are weakening the traditional ties between economics and intuitive marginalist reasoning. In one sense, he is obviously right. More and more empirical work relies on high-dimensional prediction, algorithmic pattern detection, and black-box methods that do not map neatly onto the sort of verbal microeconomic intuition economists are used to teaching and defending. The old chalkboard-style link between intuitive theory and empirical implementation is under strain.

But it does not follow that marginal analysis is fading away. If anything, AI makes marginal analysis more important. AI does not change whether tradeoffs, substitutions, complementarities, constraints, and shadow prices govern the world; it changes where the important margins are. If AI lowers the cost of coding, synthesis, search, and routine empirical work, then the economist’s task becomes even more about understanding which scarcities still govern the system. What is the marginal value of human interpretation when machine-generated analysis becomes abundant? What happens to peer review when paper production becomes cheap? What becomes relatively more valuable when standard empirical execution is commoditized? What kinds of local knowledge remain hard to formalize and therefore rise in importance?

These are price-theoretic questions.

A Hayekian perspective sharpens the point. If AI cheapens formalized information processing, then tacit knowledge, local knowledge, judgment, and institutional understanding may rise in relative value. That is not a departure from economics. It is economics. Hayek taught that knowledge is dispersed, contextual, and often difficult to aggregate. The more abundant machine-generated outputs become, the more important it becomes to know which facts matter, which contexts are special, which institutions shape behavior, and which apparent regularities are misleading.

This possibility helps explain why AI may open up space for a revival of parts of economics that have not been central to the profession’s self-understanding for some time.

The field I have in mind here is transaction cost economics and new institutional economics. Some of the deepest insights in this tradition come from Oliver Williamson, in works like Markets and Hierarchies (1975) and The Economic Institutions of Capitalism (1985), as well as many journal articles. Williamson’s core contribution was to provide a disciplined way of reasoning about governance, contractual incompleteness, asset specificity, adaptation, authority, and the comparative institutional logic of markets, hierarchies, and hybrid arrangements.

That framework has had important empirical influence, especially in the work of Scott Masten and co-authors and Steven Tadelis and co-authors on incomplete contracting, and Robert Gibbons and co-authors on relational contracting. But here there is an important asymmetry. The empirical uptake of Williamson has been strongest in areas where the relevant phenomena are amenable to formal (often game-theoretic) modeling and leave observable traces in data. Contracting and procurement are natural examples. In those domains, governance choices and contractual structures are visible enough to study using the kinds of empirical tools that modern economics prefers.

But that empirical success should not obscure the larger point. The profession absorbed the slice of TCE/NIE that fit the reigning evidentiary regime. Much of the broader agenda remained underdeveloped, not because it lacked rigor, but because its testable implications were harder to force into the form of large datasets and narrow identification strategies.

That matters because some economically important phenomena require mixed evidentiary strategies precisely because the mechanisms themselves are institutionally embedded. Adaptation under uncertainty, authority within organizations, tacit coordination, internal dispute resolution, sequencing, relational governance, and the comparative fit between institutions and tasks are often economically central. But they do not arrive naturally as variables in a clean spreadsheet.

For a long time, that fact made such questions methodologically costly. They were harder to study in a profession that increasingly rewarded what could be digitized, scaled, and cleanly identified.

AI changes that cost structure. It lowers the cost not only of routine empirical analysis, but also of gathering, synthesizing, and interpreting richer kinds of evidence: textual records, contractual materials, case comparisons, archival documents, organizational descriptions, meeting transcripts, internal language, and other qualitative or semi-structured materials. More importantly, it raises the value of economists who can reason carefully about institutions even when the evidence is messy, incomplete, or not easily reducible to a panel dataset.

This is where the most interesting opportunity arises. The future may belong less to the economist who can execute a standard narrow causal design more efficiently, and more to the economist who can identify the right institutional margin, integrate multiple kinds of evidence, and make disciplined comparative judgments about governance and adaptation. That is not a rejection of rigor; it is a broader understanding of what rigor in economics can look like.

Mullainathan and Rambachan’s recent paper “Science in the Age of Algorithms” (NBER 2025) pushes the argument beyond workflow. Their claim is not only that algorithms save time, but that they can formalize parts of science that have long been treated as tacit and off-stage: anomaly generation, theory generation, and judgments about when one theory matters more than another in a given setting. Their argument is especially relevant for economics because ours is not a “reigning champion” science in which one theory cleanly defeats another and takes its place. It is much more a patchwork science, in which researchers carry around portfolios of models and choose among them based on context, intuition, and judgment.

Researchers already decide which variables matter, which frictions are first-order, which simplifying assumptions are harmless, and which interpretation of the setting is economically meaningful. If AI makes narrow empirical execution cheaper, then those judgments move closer to center stage.

That is why I think the coming change in economics research may be deeper than many current discussions suggest. The most immediate effect of AI is to accelerate existing workflows. The more important effect may be to reprice the components of intellectual distinction. Once routine production becomes cheaper, the profession has to ask what remains scarce.

One answer is evaluation. If papers become much easier to produce, then the cost of filtering them rises in relative terms. Journals, editors, reviewers, and trusted intellectual communities become more important. As Kevin Munger has argued, when the supply of manuscripts rises dramatically, the key problem becomes metascience: how to allocate scarce attention and preserve standards when production is cheap. Put differently, AI lowers the cost of speaking, so the cost of listening rises.

Another answer is training. The apprentice model of economics has long depended on graduate students and young scholars learning by doing the smaller tasks that add up to research competence. If AI absorbs many of those tasks, the profession may gain current productivity while weakening one of the channels through which future judgment is formed. The profession will have to rethink how it cultivates judgment when many traditional apprenticeship tasks have been automated.

But the most important answer, I think, is deeper economic reasoning. For the past generation, economics often rewarded the subset of theories that could be converted into clean tests using existing data infrastructures. That equilibrium made sense given the relative scarcities of the time. Credible identification, data construction, and technical execution were scarce, and the profession rationally rewarded them. But a change in technology changes the logic of reward. Once those activities become less scarce, a different set of capabilities rises in relative value.

The economist of the near future will still need empirical skill. But she will increasingly distinguish herself not by her ability to carry out narrow empirical routines, but by her ability to frame deep institutional questions, decide which mechanisms are first-order, and interpret a rapidly changing world with theories that travel across contexts. That is economics recovering some of its comparative advantage.

For too long, many parts of the discipline have acted as though rigor and interestingness were substitutes. The result has often been a choice between intellectually ambitious but difficult-to-test questions and tightly identified but conceptually narrow ones. AI does not eliminate that tension, but it may soften it. By lowering the cost of standard research production and expanding the feasible set of evidence-gathering and synthesis, it creates more room for economists to ask bigger questions without giving up discipline.

This possibility should make us more pluralistic, in the sense of recognizing that different mechanisms require different forms of evidence, and that institutional phenomena are often too rich to be captured by one evidentiary strategy alone. Economics at its best has always been about disciplined reasoning under constraint. AI changes the constraints. It should also change the discipline’s self-understanding.

If this shift continues, the economists who stand out will not be the ones who most efficiently perform the routines that have defined prestige for the past three decades. They will be the ones who can see where the bottlenecks have moved, where institutional change is occurring, what forms of knowledge remain tacit and local, and how to reason clearly about governance, adaptation, and human choice in a world whose old constraints are coming undone.

That would not mean economics is abandoning its core logic. It would mean economics is rediscovering it.
