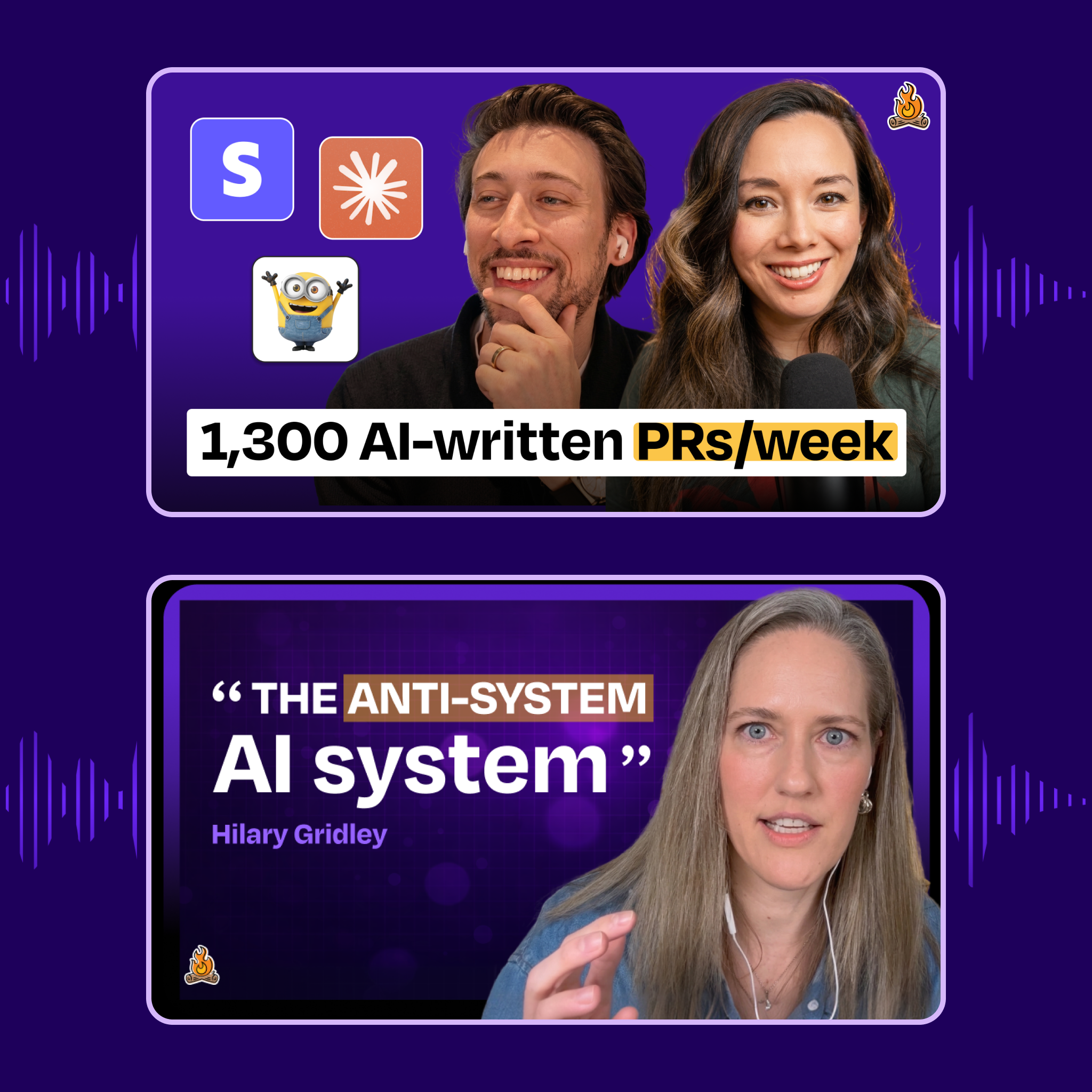

🎙️ This week on How I AI: How Stripe built “minions”—AI coding agents that ship 1,300 PRs per week + How to turn Claude Code into your personal life operating system

Your weekly listens from How I AI, part of the Lenny’s Podcast Network

Brought to you by:

- Optimizely—Your AI agent orchestration platform for marketing and digital teams

- Rippling—Stop wasting time on admin tasks, build your startup faster

Steve Kaliski has spent over six years building developer infrastructure at Stripe. In this conversation with Claire, he breaks down Stripe’s “minions”: AI coding agents that ship about 1,300 pull requests per week, often kicked off with nothing more than a Slack emoji. He explains why the real bottleneck in engineering isn’t coding, how cloud development environments unlock parallel AI workflows, and what it takes to safely review thousands of AI-generated PRs. He also demos AI agents that can spend money, coordinate services, and complete tasks end-to-end without human involvement.

- What’s good for human developers is good for AI agents (and vice versa). Stripe’s years of investment in developer experience—comprehensive documentation, blessed paths for common tasks, robust CI/CD, excellent tooling—directly translates to higher AI agent success rates. When you have clear docs on “how to add a new API field,” the agent can follow those same instructions. This creates a virtuous cycle: investments in DX improve agent performance, and investments in agent infrastructure (like cloud environments) benefit human developers too.

- Activation energy is the real bottleneck, not coding speed. Steve hasn’t started work in a text editor in months. Instead, work begins in Slack threads, Google Docs, or support tickets—the natural places where ideas emerge. By allowing engineers to kick off development with a single emoji reaction, Stripe lowered the friction between “good idea” and “code in production.” This is especially powerful in large organizations, where coordination costs typically kill momentum before coding even begins.

- Cloud development environments are non-negotiable for multi-threaded AI work. Running multiple AI agents in parallel requires cloud-based dev environments that can spin up in seconds, run isolated workloads, and never fall asleep. This infrastructure investment—which Stripe’s developer productivity team built long before AI agents—now enables engineers to run dozens of agents simultaneously without melting their MacBook Pros.

- 1,300 AI-written PRs per week requires shifting review capacity, not eliminating it. Stripe still reviews every AI-generated PR, but the review process relies heavily on automated confidence signals: comprehensive test coverage, synthetic end-to-end tests, and blue-green deployments that enable quick rollbacks. The bottleneck shifts from writing code to reviewing it—and eventually to generating enough good ideas in the first place.

- Machine-to-machine payments unlock ephemeral, API-first businesses. In Steve’s birthday party demo, Claude Code autonomously paid Browser Base, Parallel AI, and Postal Form for single-use services—no human signup, no subscription, no dashboard. Businesses can now optimize for agent consumers rather than human users, focusing on “hyper-useful single APIs” instead of landing pages and admin panels. The economics become transparent: tokens and dollars sit side by side, making the true cost of AI work visible.

- Treat AI agents like new employees, with progressive trust. Start with limited access, expand permissions as the agent proves reliable, and maintain clear boundaries. Each minion runs in an isolated environment with specific data access—the finance agent can read bank statements but can’t send messages; the scheduling agent can text but has no financial data. This physical partitioning prevents accidental data leakage and creates accountability.

- The future of software is disposable and hyper-personalized. Steve builds custom iOS apps for his toddler—music players limited to six specific songs—despite having no iOS development experience. He describes this as “the disposability of software”: when AI can build apps in hours, you can create single-purpose tools for incredibly specific use cases and throw them away when they’re no longer needed.
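The progressive-trust idea above can be sketched as a per-agent allow-list. This is a minimal, hypothetical Python sketch. The `AgentPolicy` class, the `perform` helper, and scope names like `bank_statements:read` are illustrative assumptions, not Stripe's or Steve's actual setup. It only models the permission check inside one process. It does not replace the separate, isolated environments described in the episode.

```python
from dataclasses import dataclass, field


@dataclass
class AgentPolicy:
    """Hypothetical per-agent allow-list: any scope not listed is denied."""
    name: str
    scopes: set[str] = field(default_factory=set)

    def can(self, scope: str) -> bool:
        return scope in self.scopes

    def grant(self, scope: str) -> None:
        # Progressive trust: widen access only after the agent has proven reliable.
        self.scopes.add(scope)


def perform(agent: AgentPolicy, scope: str) -> str:
    # Refuse any action outside the agent's granted scopes.
    if not agent.can(scope):
        raise PermissionError(f"{agent.name} lacks scope {scope!r}")
    return f"{agent.name} performed {scope}"


# The finance agent can read statements but not send messages;
# the scheduling agent can send messages but sees no financial data.
finance = AgentPolicy("finance", {"bank_statements:read"})
scheduler = AgentPolicy("scheduler", {"messages:send"})

print(perform(finance, "bank_statements:read"))  # finance performed bank_statements:read
print(finance.can("messages:send"))              # False
scheduler.grant("calendar:write")  # trust expanded after a good track record
```

Because anything not explicitly granted is denied, widening an agent's access is always a deliberate `grant` call rather than a default.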

- How Stripe’s AI ‘Minions’ Ship 1,300 PRs Weekly from a Slack Emoji: https://www.chatprd.ai/how-i-ai/stripes-ai-minions-ship-1300-prs-weekly-from-a-slack-emoji

- How to Build an Autonomous AI Agent That Pays for Services to Complete Tasks: https://www.chatprd.ai/how-i-ai/workflows/how-to-build-an-autonomous-ai-agent-that-pays-for-services-to-complete-tasks

- How to Automate Code Generation from a Slack Message into a Pull Request: https://www.chatprd.ai/how-i-ai/workflows/how-to-automate-code-generation-from-a-slack-message-into-a-pull-request

Brought to you by:

- WorkOS—Make your app enterprise-ready today

- Lovable—Build apps by simply chatting with AI

Hilary Gridley returns to the podcast to share how her approach to productivity has completely evolved since her last appearance. Now a new mom and entrepreneur, she walks Claire through how she uses Claude Code as a personal operating system, managing everything from daily planning to life admin without complex tools or rigid workflows. Instead of building elaborate systems, Hilary leans into what she calls the “anti-system system”: letting AI observe her behavior, learn her preferences over time, and gradually take work off her plate. Together, Claire and Hilary explore how simple inputs—like capturing tasks with a shortcut or “yapping” to Claude throughout the day—can replace traditional productivity stacks and integrations.

- The 10x impact framework: For any task, ask “If I were 10 times better at it, would it have 10 times the impact?” If no, automate it. If yes, keep it human. This applies to both work tasks and life tasks—including whether baking bread will bring you joy or feel like a chore.

- Complexity has to earn its keep. Hilary only connects APIs and builds complex integrations after testing the “janky version” of a workflow for a week. Only 20% of the workflows she tries are ones she keeps using, so starting simple saves a lot of time.

- The yappers API beats OAuth every time. Instead of connecting all your tools in the background, just talk to Claude about what you’re doing throughout the day. Hilary keeps Claude Code open in her terminal and narrates her work, letting Claude observe and take notes without complex integrations.

- Let AI learn your preferences by observing you, not by having you define them. Hilary never sat down to write out her ideal schedule. Claude just watches what she actually does (not what she says she’ll do) and adjusts preferences automatically. Real behavior beats aspirational planning.

- Calendar management is the ultimate to-do list. You can’t say you take something seriously if you’re not putting time into it. But manually adding tasks to your calendar is tedious—so let Claude do it automatically based on what you say you want to accomplish.

- Screenshots are your friend for getting started. Don’t wait for API access or permissions at work. Build a janky version with screenshots and voice dictation, prove it’s valuable, and then get the permissions you need. Half-baked ideas don’t deserve full access.

- You don’t need coding knowledge to build Claude Code skills. Hilary just describes problems to Claude: “I keep forgetting to return things on time.” Claude asks a few questions, then builds the entire workflow—including checking return policies and drop-off locations automatically.

- Test everything before integrating it into working systems. Hilary refuses to add new workflows to her daily routine until she’s tested them separately for a week. If something breaks, you don’t want it taking down systems that were already working.

- Build the muscle memory by doing one thing with AI every day. The biggest barrier isn’t technical knowledge—it’s rewiring your brain to think “the alien in my computer could help with this.” Hilary went from “I’ll never use the terminal” to running her life in Claude Code in about a week.
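The 10x impact framework reduces to a simple triage rule. The sketch below is a hypothetical Python illustration. The `Task` type, the `triage` function, and the example tasks are assumptions, and the yes/no answer to the 10x question remains a human judgment call that the code merely records.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    # Your answer to: "If I were 10 times better at this,
    # would it have 10 times the impact?"
    scales_with_skill: bool


def triage(tasks: list[Task]) -> dict[str, list[str]]:
    """Keep tasks human when skill compounds into impact; otherwise automate."""
    plan: dict[str, list[str]] = {"keep_human": [], "automate": []}
    for task in tasks:
        bucket = "keep_human" if task.scales_with_skill else "automate"
        plan[bucket].append(task.name)
    return plan


tasks = [
    Task("draft product strategy", scales_with_skill=True),
    Task("return online orders on time", scales_with_skill=False),
]
plan = triage(tasks)
print(plan)
# {'keep_human': ['draft product strategy'], 'automate': ['return online orders on time']}
```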

- How I AI: Hilary Gridley’s “Anti-System” for Automating Life with Claude Code: https://www.chatprd.ai/how-i-ai/gridleys-anti-system-for-automating-life-with-claude-code

- Create a Privacy-Protecting Demo Mode for Your Personal AI: https://www.chatprd.ai/how-i-ai/workflows/create-a-privacy-protecting-demo-mode-for-your-personal-ai

- Build Custom AI Automations by Having a Conversation with Claude: https://www.chatprd.ai/how-i-ai/workflows/build-custom-ai-automations-by-having-a-conversation-with-claude

- Automate Your Daily Planning with Claude Code and an iPhone Shortcut: https://www.chatprd.ai/how-i-ai/workflows/automate-your-daily-planning-with-claude-code-and-an-iphone-shortcut

If you’re enjoying these episodes, reply and let me know what you’d love to learn more about: AI workflows, hiring, growth, product strategy—anything.

Catch you next week,
Lenny

P.S. Want every new episode delivered the moment it drops? Hit “Follow” on your favorite podcast app.

lennysnewsletter.com

https://www.lennysnewsletter.com/p/this-week-on-how-i-ai-how-stripe
