🔮 Autoresearch and the experimental society

The most important thing happening in AI right now is not just the intelligence of the models, but the harnesses that make that intelligence usable.

Science is the most reliable method humanity has found for producing knowledge. It has also, for most of history, been expensive to run.

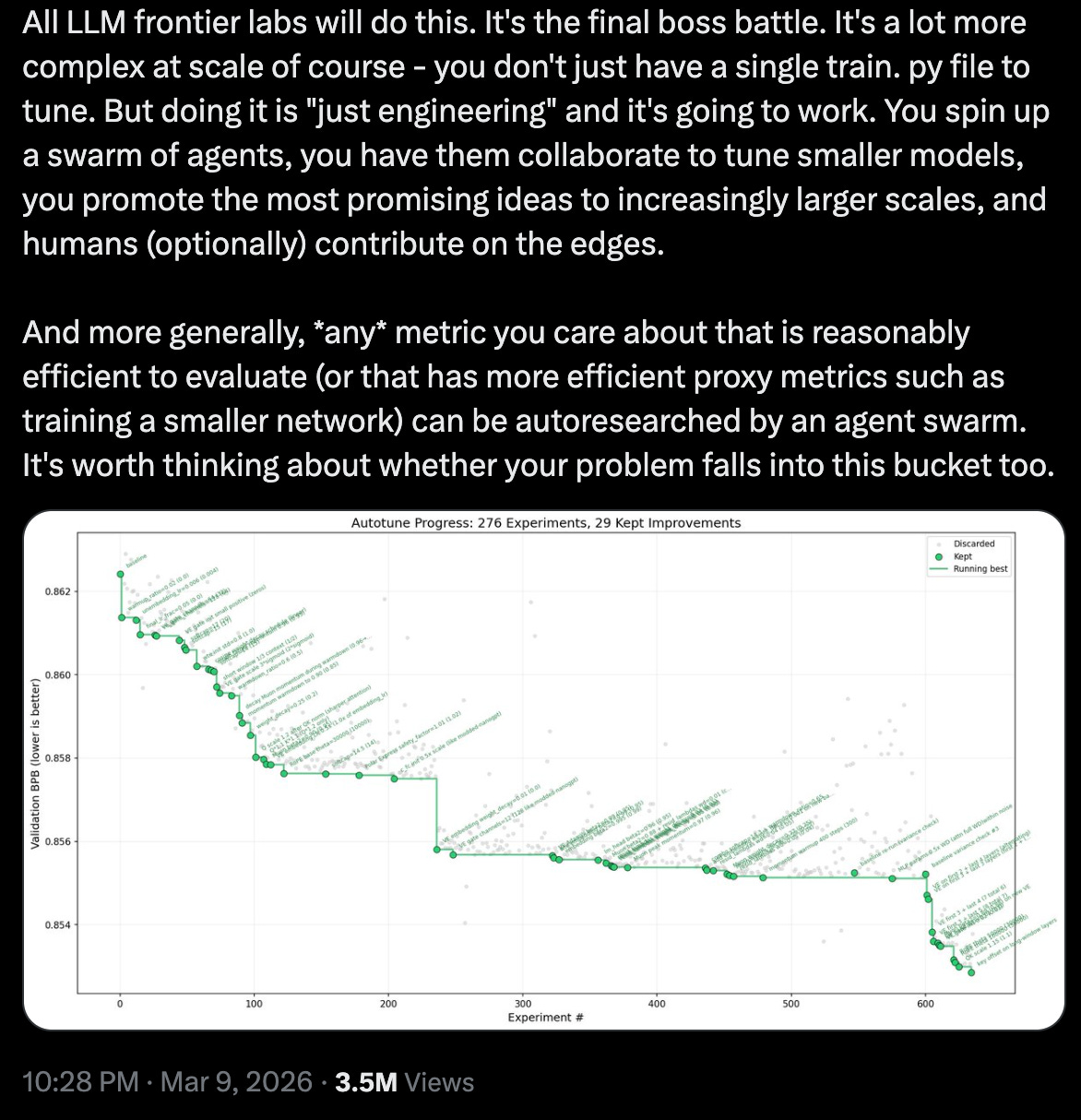

Andrej Karpathy released 600 lines of Python code a few weeks ago that started to change that. His autoresearch (see EV#565) runs an autonomous experimental loop in which a human sets a strategic direction, defines what good looks like and the agent iterates towards success within the guardrails. In Andrej’s initial experiment, it trained a GPT-2-level model over two days, 11% faster and found 20 genuine improvements.

Shortly after the release, Shopify’s CEO Toby Lütke used autoresearch on his company’s internal model, qmd; it ran 37 experiments overnight, so Toby woke up to a 0.8-billion-parameter model outscoring his previous 1.6-billion-parameter version by 19%. Toby is not a machine learning engineer.

Autoresearch is powerful because it solves two problems at once. One, it automates part of the knowledge-production process. And two, it solves the agent control problem, meaning it keeps agents on task. AI often drifts if you give it an open-ended brief or optimize for the wrong thing. To my great joy, autoresearch prevents this by design. The human decides where the car is going; autoresearch keeps its hands on the wheel.

I spent the last month adapting autoresearch for knowledge work beyond machine learning with the goal of spinning up a system that can run structured, low-cost experiments on the kinds of decisions most teams make every week. I’m calling this version AutoBeta, and I’m making the full playbook/skill available to paying members below.

Let’s go!

When I first saw autoresearch, my immediate reaction was that it didn’t have to be just about machine learning. The loop is generic – hypothesize, test, score, iterate. So I cloned it and started running it on other bits of my work.

It didn’t go exactly as I expected. The outputs looked fine but I couldn’t tell whether they were getting better. Unlike ML where the agent has a built-in feedback signal from each training run, knowledge work was missing it. A pricing decision doesn’t validate in five minutes; and the paragraphs I write don’t tell me if the argument is getting better or just changing, most of the time.

This is what makes applying autoresearch to knowledge work genuinely hard. The loop needs something to optimize against, and in knowledge work, that something doesn’t exist naturally.

So I constructed a version of autoresearch, called AutoBeta, that works across a wide range of business problems. It is not as technically robust as Karpathy’s, but it has the same design principles: I set the objective and the constraints, the experimentation takes place within the loop.

The one thing I changed was the score. I created “an oracle”, a panel of synthetic judges scoring each output against pre-defined criteria, collapsed into a single number the loop can optimize for.

Sign in to highlight and annotate this article

Conversation starters

Daily AI Digest

Get the top 5 AI stories delivered to your inbox every morning.

More about

modelresearchKnowledge Map

Connected Articles — Knowledge Graph

This article is connected to other articles through shared AI topics and tags.

Discussion

Sign in to join the discussion

No comments yet — be the first to share your thoughts!